The highly anticipated NVIDIA GTC 2024 officially began with Jensen Huang taking the stage in his iconic black leather jacket.

1. Digital Twins: Computing is Transforming a $100 Trillion Industry

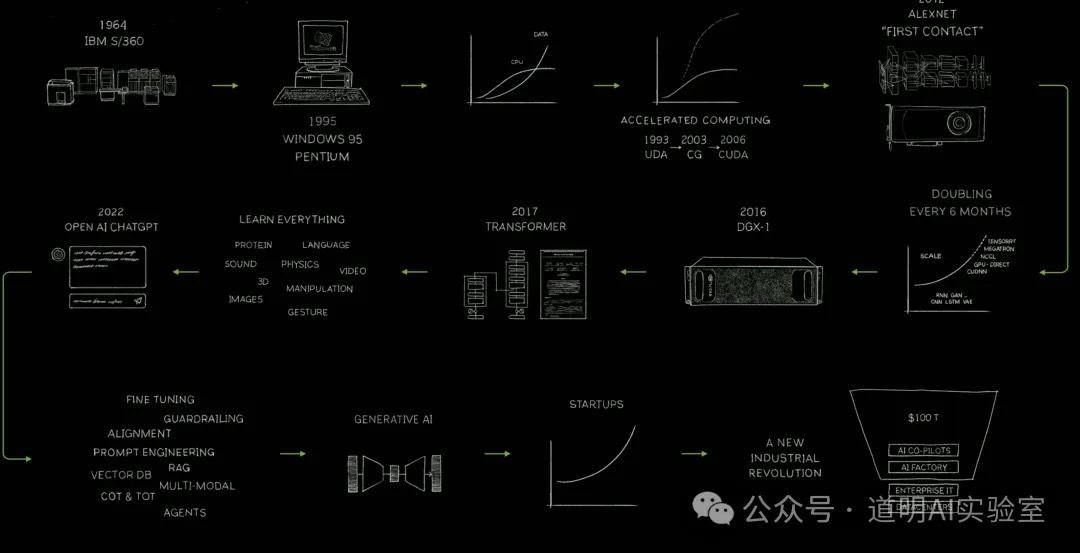

Computing is rapidly transforming $100 trillion worth of industries: that was the message of Jensen's first slide. At first I thought he was recapping NVIDIA's track record, but he was actually pointing to a reality that is unfolding fast: computing is changing industries worth $100 trillion.

This shift did not begin last year, but it keeps accelerating.

That is why the first part of the keynote, Digital Twins, was fundamental and vital, even though it was far shorter than the segment introducing the new Blackwell GPU.

As I have mentioned repeatedly, the most significant change is the link between the physical and digital worlds. That link is the Digital Twin, powered by ever-faster, compute-driven simulation.

2. From GPU to GPU Systems: Blackwell Brings More Than a Performance Boost; It Also Cuts Power Consumption Dramatically

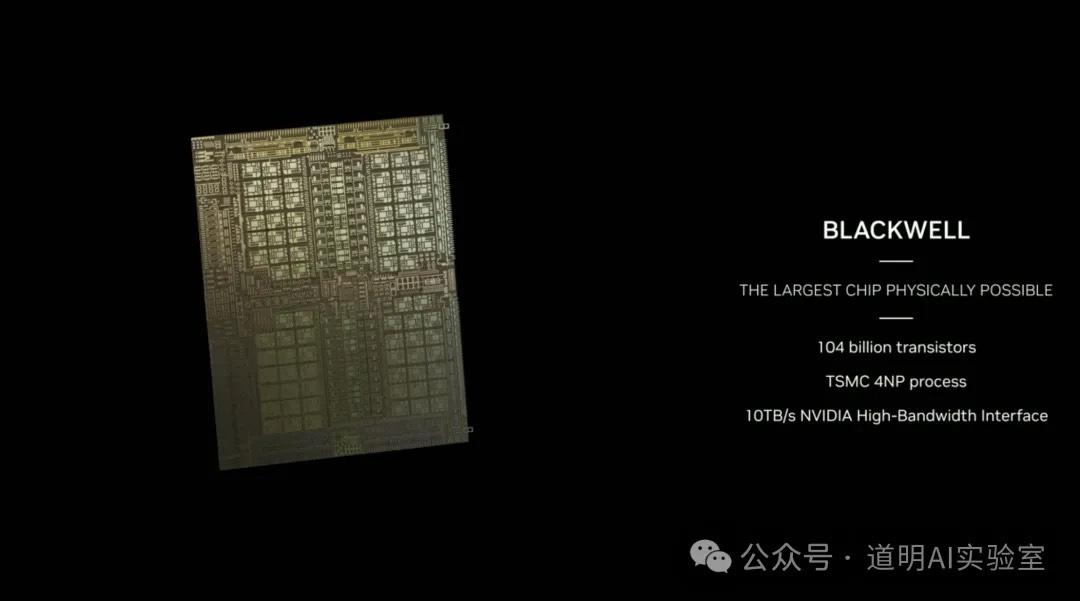

Behind all this computing, of course, is NVIDIA's infrastructure, which brings us to the newly unveiled Blackwell architecture. Its biggest change is that we may need to redefine what a "GPU" is.

In our usual understanding, a GPU is a single chip. Blackwell's chip is still built on a 4nm-class process (TSMC 4NP).

In the Blackwell era, however, that single chip can no longer be called the GPU: it takes two of them, linked together, to form one (much as Apple's M2 Ultra joins two M2 Max chips). I initially read this as "B200" = "B100" + "B100", though NVIDIA's GB200 announcement describes the two-chip package itself as the B200. Either way, the naming doesn't really matter.

On stage, Jensen barely used product names like "B100" or "B200"; he simply said "Blackwell GPU."

What if you connect two "Blackwell GPUs"? What if you connect even more?

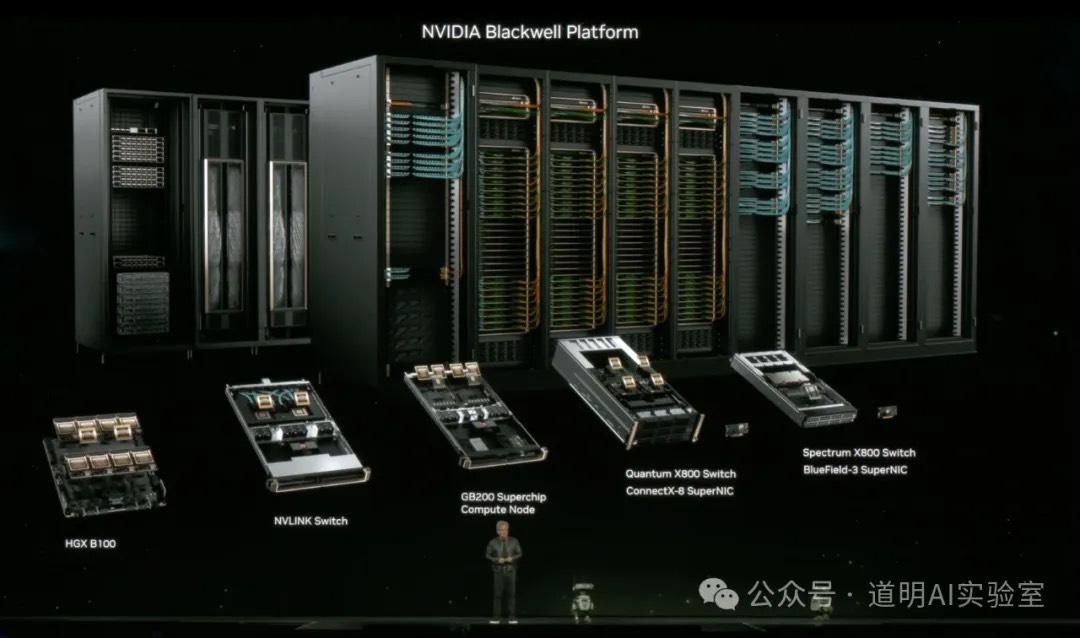

- GB200: Two "Blackwell GPUs" + one Grace CPU (whereas GH200 was one GPU + one Grace CPU).

- Single Machine: Two GB200s (4 Blackwell GPUs) linked in one machine, called a Tray or a Node.

- GB200 NVL72: Connecting 18 trays in a single rack using NVLink on the backplane results in 36 GB200s, or 72 GPUs, hence the name "GB200 NVL72." This is the ultimate "GPU."
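The hierarchy above is just multiplication, and a minimal sketch makes the counts explicit. The class and field names below are hypothetical labels for illustration, not an NVIDIA API; the per-level counts are the ones listed above (two chips per Blackwell GPU, two GPUs plus one Grace CPU per GB200, two GB200s per tray, 18 trays per rack).

```python
from dataclasses import dataclass


# Hypothetical model of the GB200 NVL72 hierarchy; counts come from the list above.
@dataclass(frozen=True)
class RackConfig:
    dies_per_gpu: int = 2          # two Blackwell chips form one "Blackwell GPU"
    gpus_per_superchip: int = 2    # GB200 = 2 Blackwell GPUs + 1 Grace CPU
    cpus_per_superchip: int = 1
    superchips_per_tray: int = 2   # one compute tray / node
    trays_per_rack: int = 18       # NVL72 rack

    def superchips(self) -> int:
        return self.superchips_per_tray * self.trays_per_rack

    def gpus(self) -> int:
        return self.superchips() * self.gpus_per_superchip

    def cpus(self) -> int:
        return self.superchips() * self.cpus_per_superchip

    def dies(self) -> int:
        return self.gpus() * self.dies_per_gpu


rack = RackConfig()
print(f"GB200 superchips: {rack.superchips()}")
print(f"Blackwell GPUs:   {rack.gpus()}")   # the "72" in NVL72
print(f"Grace CPUs:       {rack.cpus()}")
print(f"Blackwell chips:  {rack.dies()}")
```

Deriving the 36 Grace CPUs and 144 individual chips from the same counts is a quick consistency check on the naming.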

NVIDIA has officially evolved from providing GPUs to providing GPU systems.

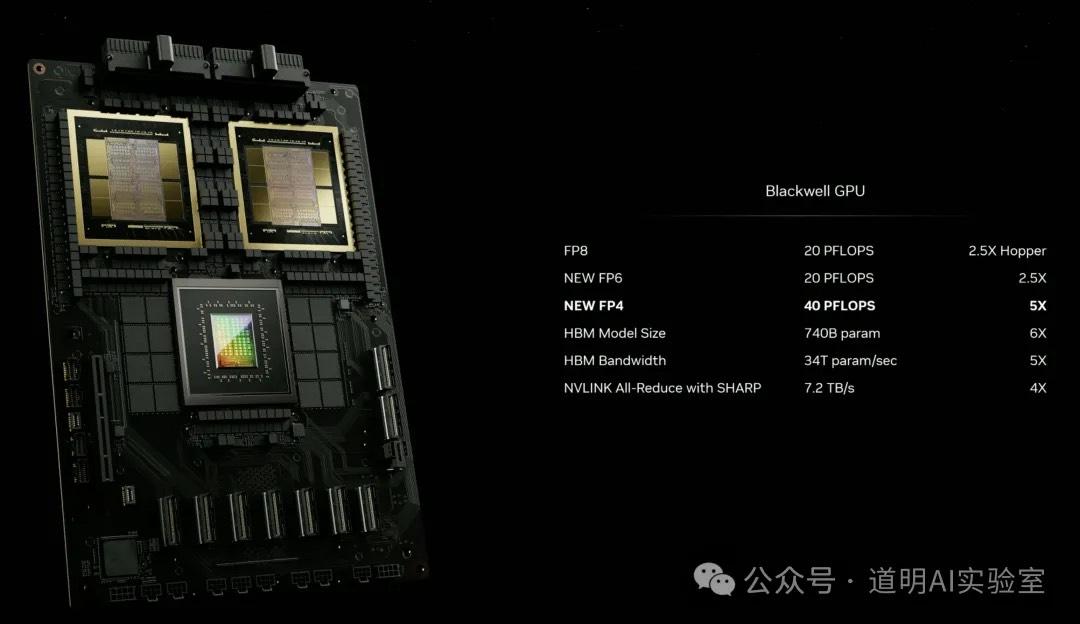

So, how does it perform?

A single "Blackwell GPU" provides 2.5x the FP8 computing power of the Hopper architecture.

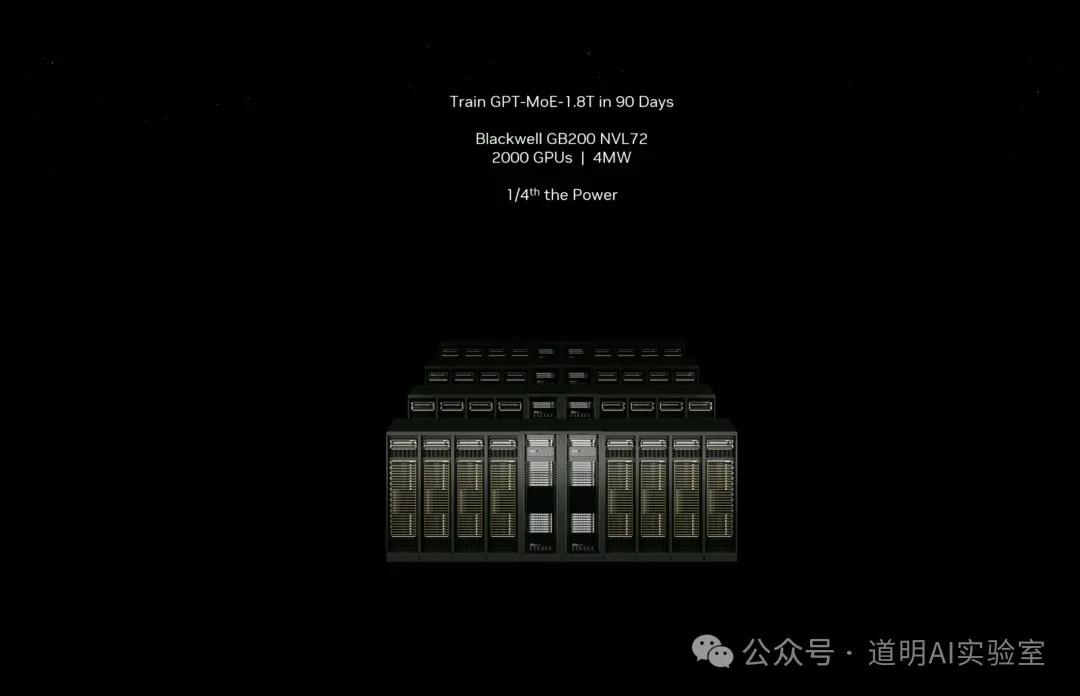

Training a model like GPT-MoE-1.8T (likely GPT-4) would take about 8,000 Hopper GPUs (H100) roughly 90 days.

With GB200 NVL72 systems, the same job would need 2,000 Blackwell GPUs (each made of two chips, so technically 4,000 basic compute units) for the same 90 days, while drawing only about a quarter of the power: roughly 4 MW versus 15 MW for the Hopper cluster.
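The comparison can be checked with back-of-the-envelope arithmetic. This sketch assumes the approximate cluster figures quoted on stage (8,000 GPUs at about 15 MW versus 2,000 GPUs at about 4 MW, both for 90 days) and treats them as round keynote numbers; "watts per GPU" here is hypothetical all-in cluster power divided by GPU count, not a chip TDP.

```python
# Approximate keynote figures for the GPT-MoE-1.8T example (round numbers).
HOPPER = {"gpus": 8_000, "days": 90, "megawatts": 15}
BLACKWELL = {"gpus": 2_000, "days": 90, "megawatts": 4}
PEAK_FP8_SPEEDUP = 2.5  # per-GPU FP8 figure quoted earlier in the keynote


def gpu_days(cfg: dict) -> int:
    """Total GPU-days spent on the training run."""
    return cfg["gpus"] * cfg["days"]


def energy_mwh(cfg: dict) -> int:
    """Energy = average power x wall-clock time, in megawatt-hours."""
    return cfg["megawatts"] * cfg["days"] * 24


def watts_per_gpu(cfg: dict) -> float:
    """All-in cluster power divided by GPU count (includes CPUs, network, etc.)."""
    return cfg["megawatts"] * 1_000_000 / cfg["gpus"]


# Same job in the same time, so each Blackwell GPU does this much more work.
per_gpu_speedup = gpu_days(HOPPER) / gpu_days(BLACKWELL)
energy_ratio = energy_mwh(BLACKWELL) / energy_mwh(HOPPER)
efficiency_gain = energy_mwh(HOPPER) / energy_mwh(BLACKWELL)

print(f"Implied per-GPU speedup: {per_gpu_speedup:.1f}x (vs. {PEAK_FP8_SPEEDUP}x peak FP8)")
print(f"Energy: {energy_mwh(HOPPER):,} MWh vs {energy_mwh(BLACKWELL):,} MWh "
      f"({energy_ratio:.0%} of Hopper)")
print(f"Work per unit energy: {efficiency_gain:.2f}x")
print(f"All-in power per GPU: {watts_per_gpu(HOPPER):,.0f} W vs "
      f"{watts_per_gpu(BLACKWELL):,.0f} W")
```

On these numbers, each Blackwell GPU draws slightly more all-in power than an H100 did but does four times the work, which is where the roughly 3.75x energy saving comes from. The implied 4x per-GPU figure is also higher than the 2.5x peak FP8 number, so on this job the gain is not explained by peak FP8 throughput alone.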

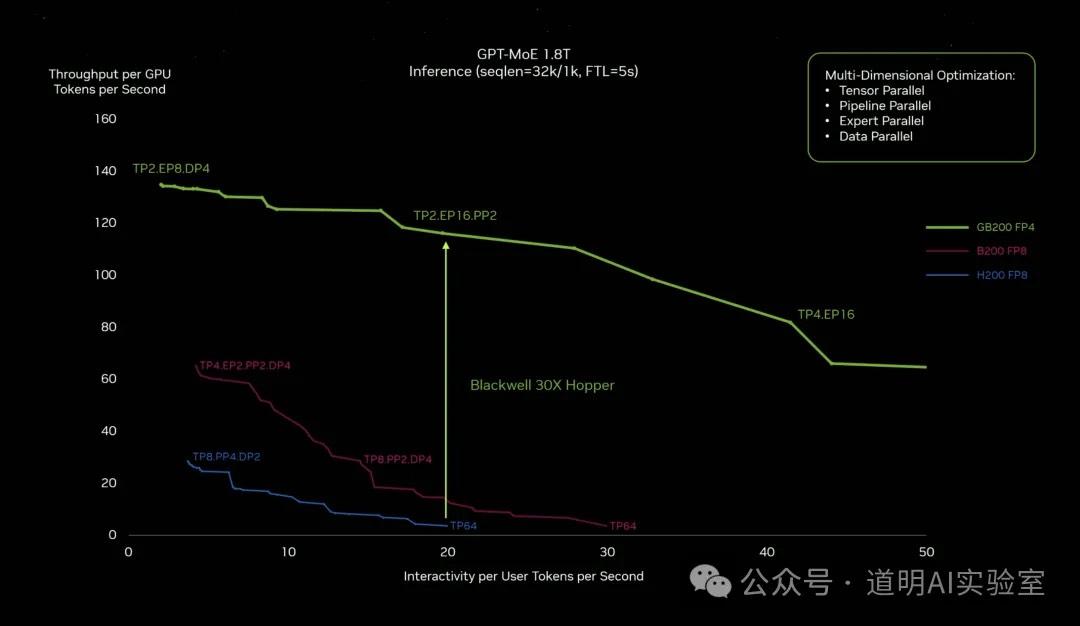

For inference, Blackwell adds FP4 precision alongside FP8 (which Hopper introduced). NVIDIA says FP4 inference can deliver up to 30 times the performance of Hopper.
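To make "FP4" concrete, here is a minimal sketch of quantizing a block of values to 4-bit floats. It assumes the E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit) used by the OCP microscaling FP4 format, whose only magnitudes are 0, 0.5, 1, 1.5, 2, 3, 4 and 6, and a simple per-block scale chosen so the largest value maps to 6. The function names are hypothetical, and NVIDIA's actual scaling scheme on Blackwell is not described in the keynote material covered here.

```python
from typing import List, Tuple

# Non-negative magnitudes representable in FP4 E2M1 (1 sign, 2 exponent, 1 mantissa bit).
E2M1_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
E2M1_MAX = E2M1_MAGNITUDES[-1]


def quantize_block_fp4(block: List[float]) -> Tuple[float, List[float]]:
    """Scale the block so its largest magnitude maps to 6.0, then round each
    value to the nearest E2M1 magnitude. Ties resolve to the smaller magnitude
    for simplicity. Returns (scale, values expressed in FP4 units)."""
    amax = max((abs(x) for x in block), default=0.0)
    scale = amax / E2M1_MAX if amax > 0 else 1.0
    codes = []
    for x in block:
        mag = abs(x) / scale
        nearest = min(E2M1_MAGNITUDES, key=lambda m: abs(m - mag))
        codes.append(nearest if x >= 0 else -nearest)
    return scale, codes


def dequantize(scale: float, codes: List[float]) -> List[float]:
    """Map FP4 values back to the original range."""
    return [c * scale for c in codes]


def weight_bytes(num_params: float, bits: int) -> float:
    """Storage needed for the weights alone at a given bit width."""
    return num_params * bits / 8


scale, codes = quantize_block_fp4([0.1, -0.4, 1.2, 3.0])
print(scale, codes, dequantize(scale, codes))
for bits in (16, 8, 4):
    print(f"1.8T params at {bits}-bit: {weight_bytes(1.8e12, bits) / 1e12:.1f} TB")
```

The coarse grid is the trade-off: each weight takes half the bytes of FP8, so more of a large model fits in memory and less data moves per token, at the cost of rounding error that the per-block scale tries to contain.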

So, who are the potential customers for these new products? The biggest players—those building clusters of thousands or even tens of thousands of GPUs.

AI is gradually returning to the "Era of Giants," isn't it?

Jensen summarized the new Blackwell system in his final slide: new GPUs, new NVLink, the new GB200 Superchip, the new X800 switch, and the new Blackwell platform.

3. Other Highlights

NVIDIA's software development platforms were also featured, including the long list of libraries and frameworks built on CUDA-accelerated computing.

Autonomous driving, robotics, digital twins...

Thor, the next-generation chip for autonomous driving and robotics, will begin shipping late this year or early next year.

The biggest change, though, is that all of this is now driven by large models.

In fact, as I mentioned in my preview, after a year of "intensive learning" by the market, these changes are now quantitative rather than qualitative.

Summary:

- While details of the Blackwell architecture had already leaked (such as one GPU containing two chips), the "GB200 NVL72" interconnect exceeded expectations. However, the target customer base for these products is becoming increasingly concentrated. If it ships quickly, it will be a significant blow to AMD.

- FP4 performance is a standout. NVIDIA has fully realized that the inference market is the next critical battlefield.

- Jensen Huang's two-hour speech/show sent a confident and clear signal: NVIDIA is the infrastructure for AI, period.

- As stated at the opening: computing is rapidly transforming $100 trillion worth of industries.

- The biggest opportunity within this is Digital Twins.

Finally, a small "Easter Egg": Robots take the stage.