TL;DR: Over the weekend, two mysterious models codenamed Sonoma Dusk Alpha and Sonoma Sky Alpha appeared on OpenRouter. Because they boast a two-million-token input context window, many speculated that they were precursors to Gemini-3. After a series of tests and comparisons, I believe they are most likely not Gemini-3, but rather Grok. While initially impressive, the models exhibit issues typical of the Grok series: a lack of stability in the finer details, which boils down to data issues.

Detailed breakdown below:

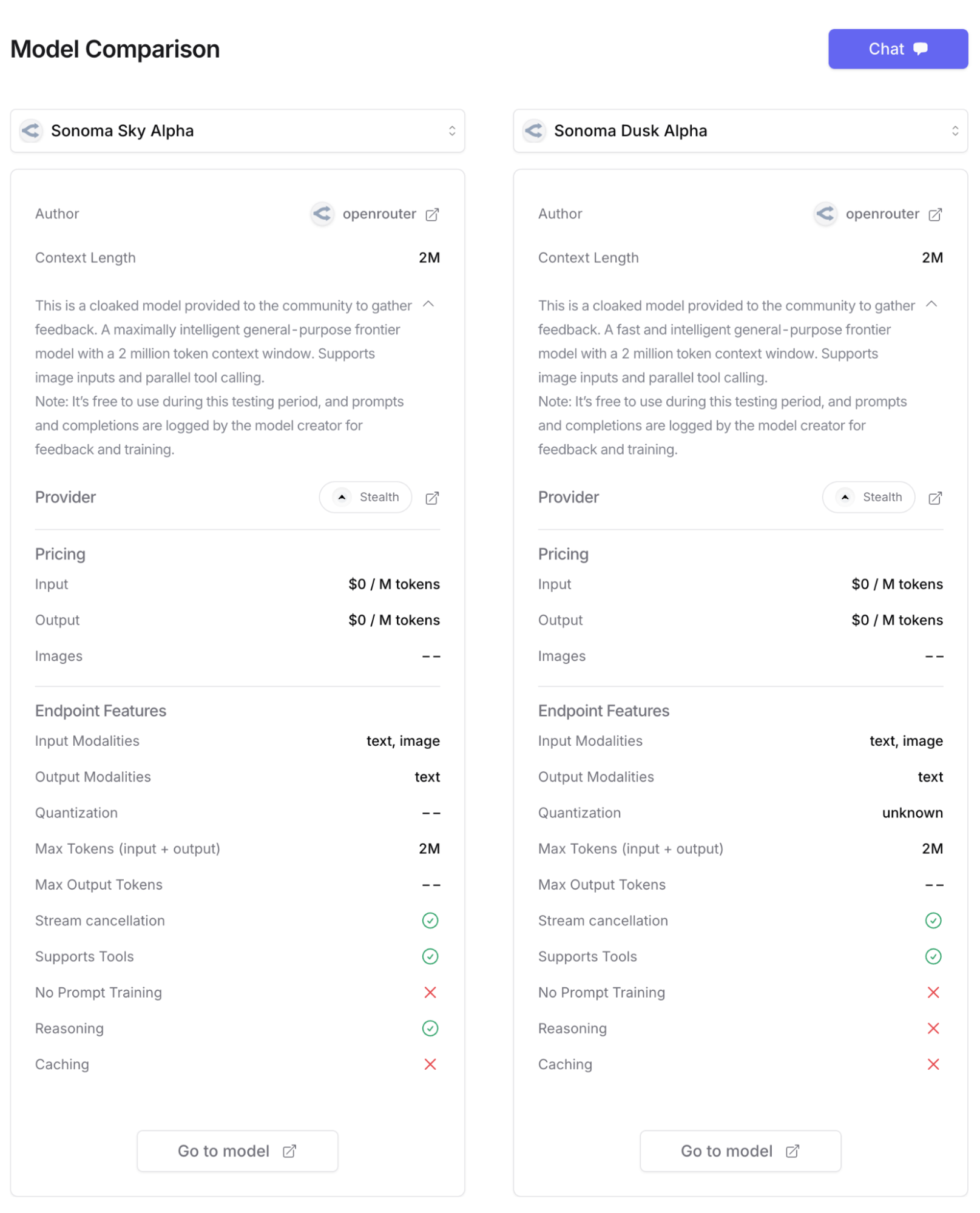

First, a brief intro to the two models: one is a reasoning-capable model and the other is not. Both support a 2M (two million) input context window, multimodal capabilities, and parallel tool calling.

As soon as these models were released, community speculation pointed toward Gemini-3, partly because of the massive 2M context window and partly because many believe Gemini-3 is due for release.

However, my first test led me to rule out this possibility: I asked the models to generate a landing page for a website. To observe the models' inherent generation style, I used a very simple prompt: "AI Factory Landing Page."
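For readers who want to repeat this kind of single-prompt test, here is a minimal sketch of how the request could be built against OpenRouter's OpenAI-compatible chat completions endpoint. The model slugs and the helper names `build_request` and `generate` are assumptions for illustration (check the OpenRouter model pages for the exact IDs); only the prompt text comes from the test above.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
PROMPT = "AI Factory Landing Page"

# Assumed model slugs; verify them on OpenRouter before use.
MODELS = ["openrouter/sonoma-dusk-alpha", "openrouter/sonoma-sky-alpha"]


def build_request(model, api_key, prompt=PROMPT):
    """Build (but do not send) a chat completion request with one user message."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def generate(model, api_key):
    """Send the request and return the generated text (requires network access)."""
    with urllib.request.urlopen(build_request(model, api_key)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Sending the same bare prompt to each entry in `MODELS`, with no system prompt or style hints, keeps the comparison focused on each model's default aesthetic.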

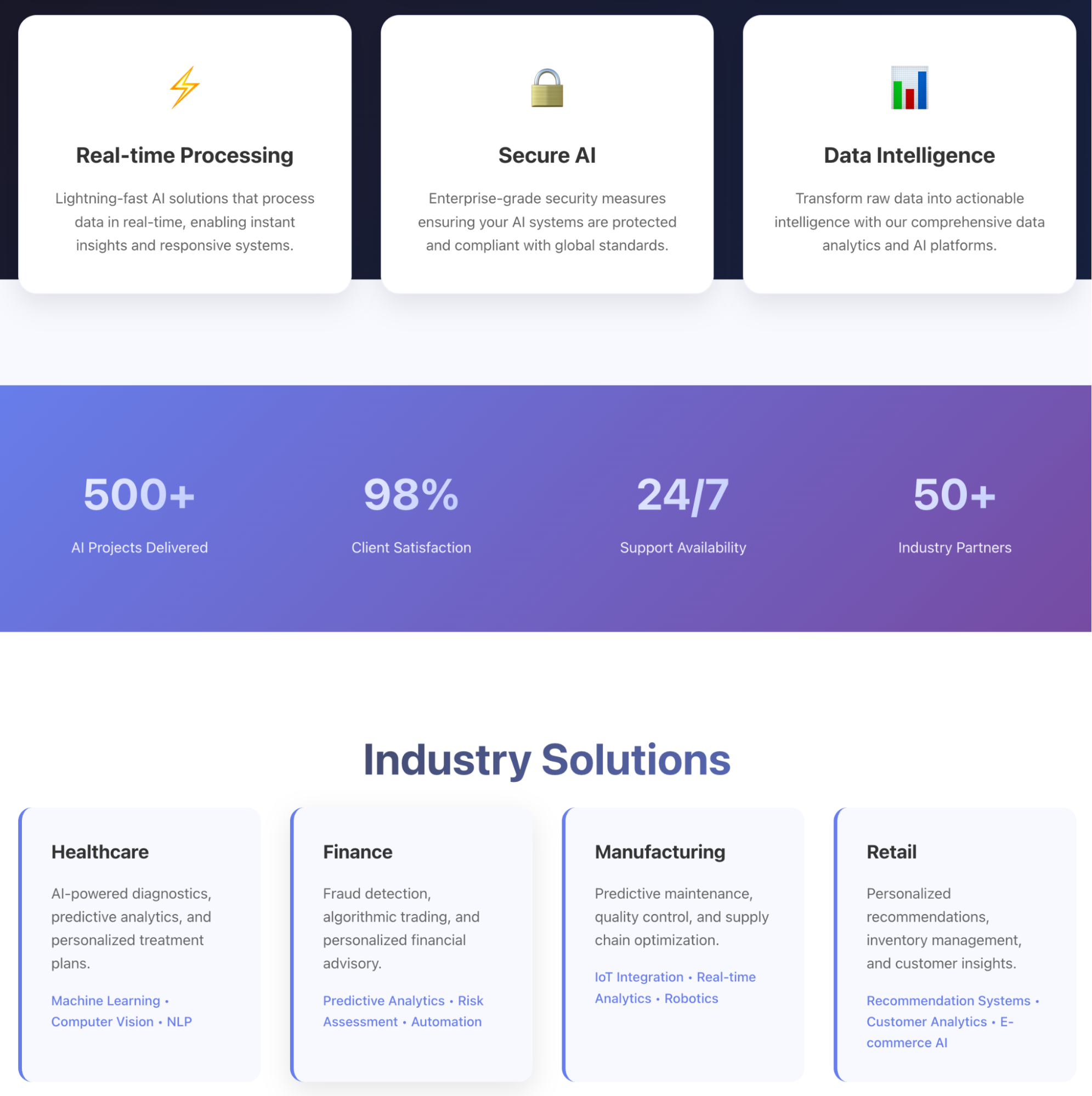

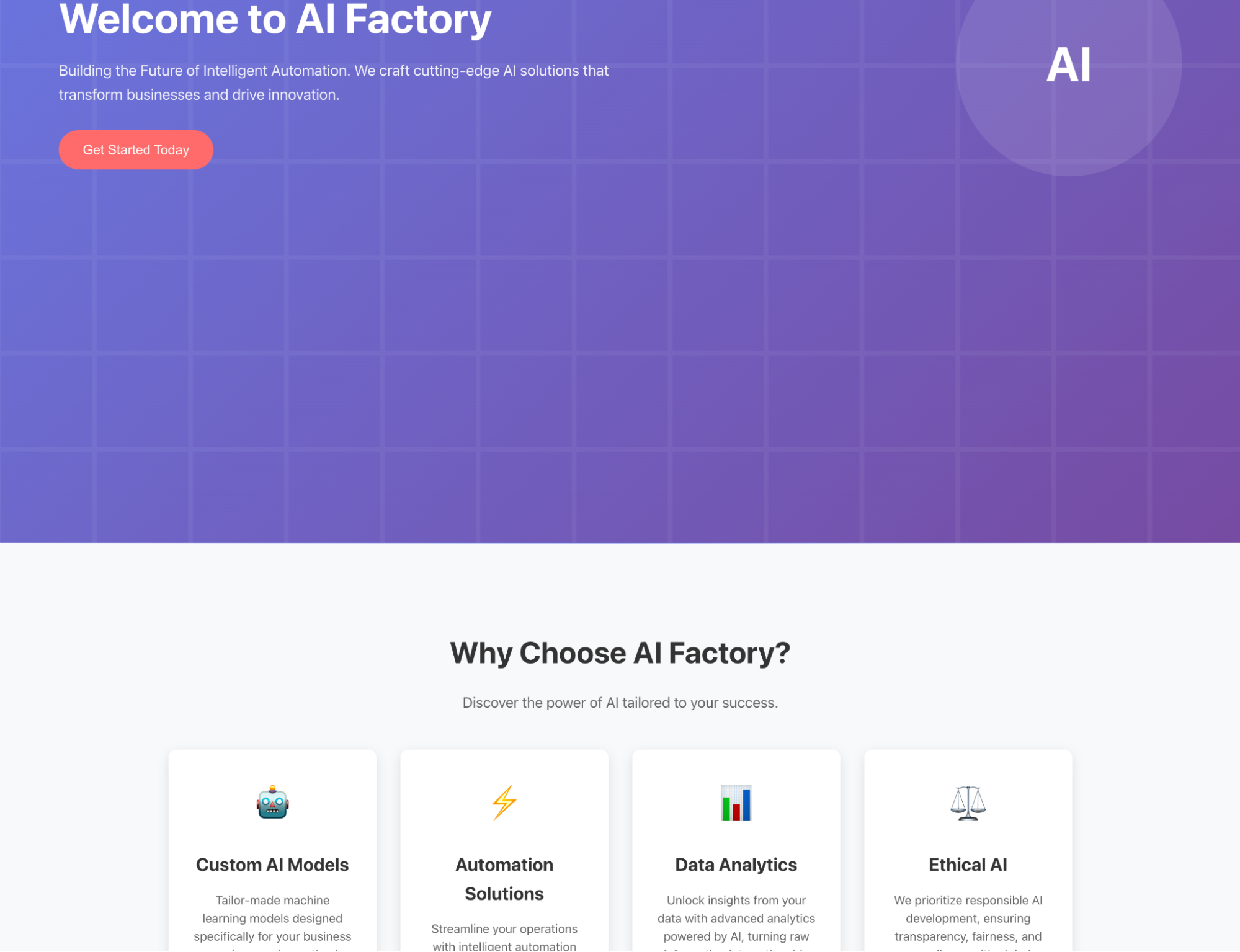

Below are screenshots of the pages generated by both models. At first glance, the style is reminiscent of Claude, utilizing the signature blue-purple Tailwind CSS aesthetic.

Granted, Claude has recently updated its style slightly. However, the use of emojis and the overall color palette remain consistent with that lineage. I have a growing suspicion about why this is the case.

In contrast, the style of Gemini-2.5 is markedly different. This is my first reason for believing Sonoma is not part of the Gemini series: during generational upgrades, a model's "aesthetic" rarely shifts so drastically.

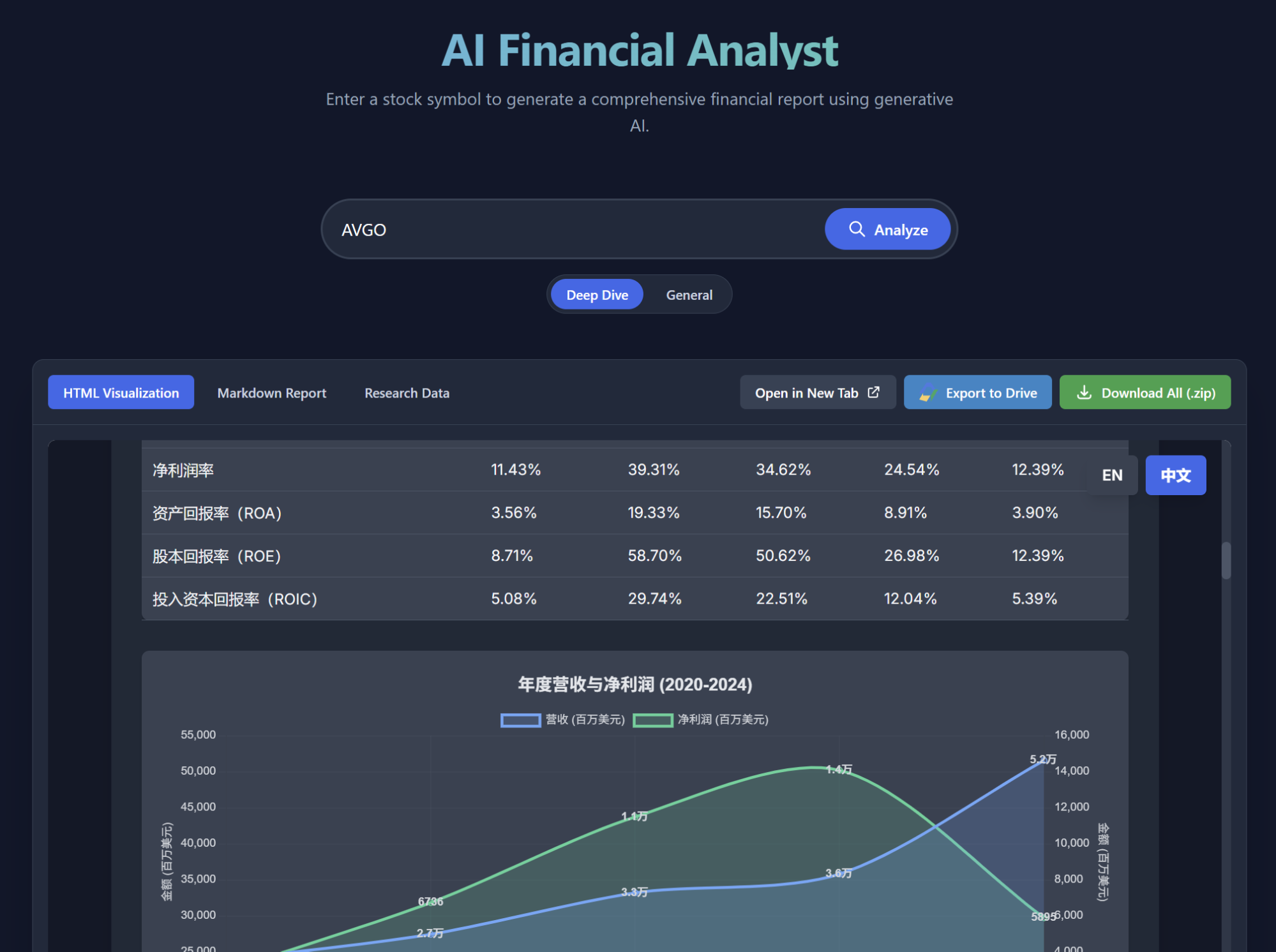

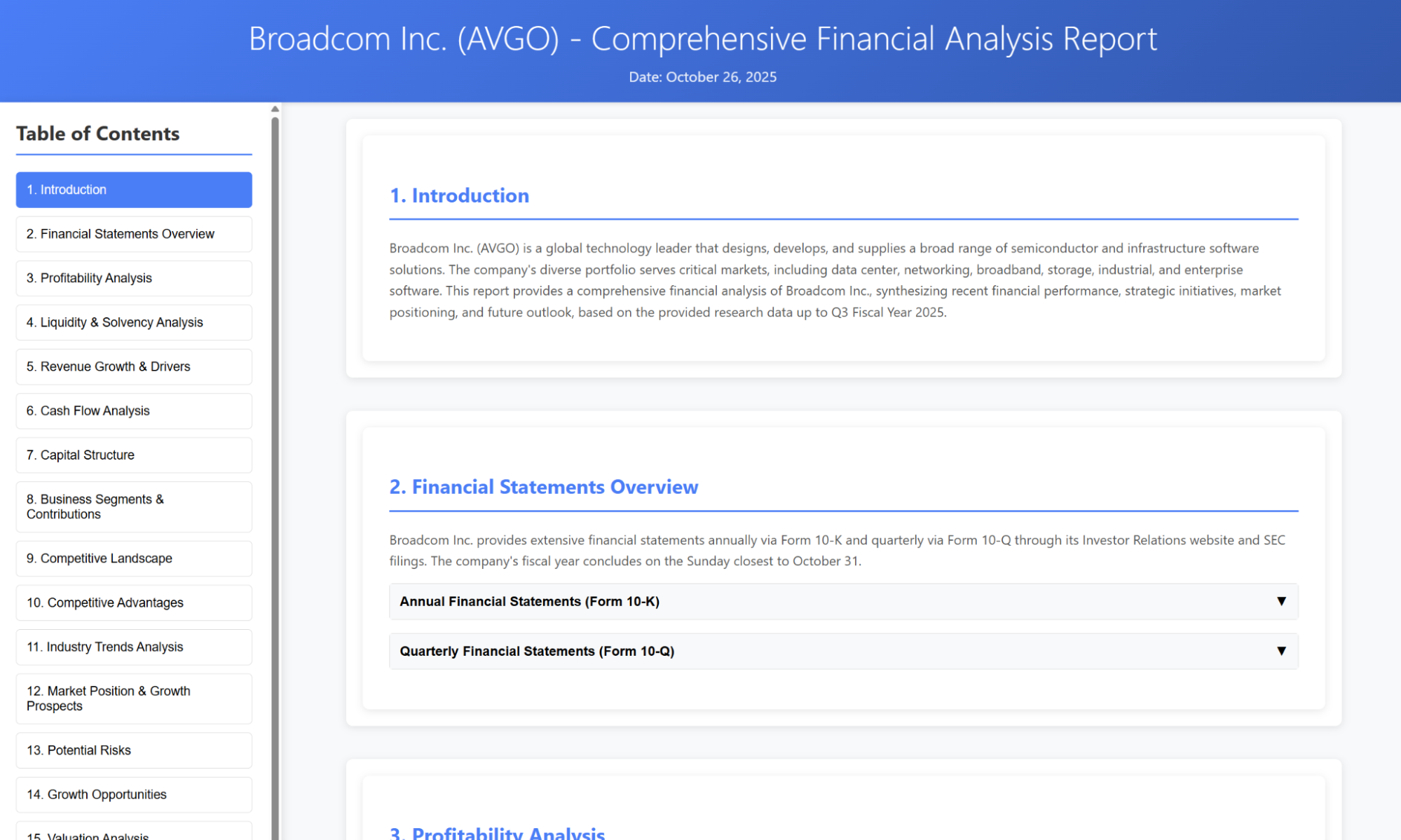

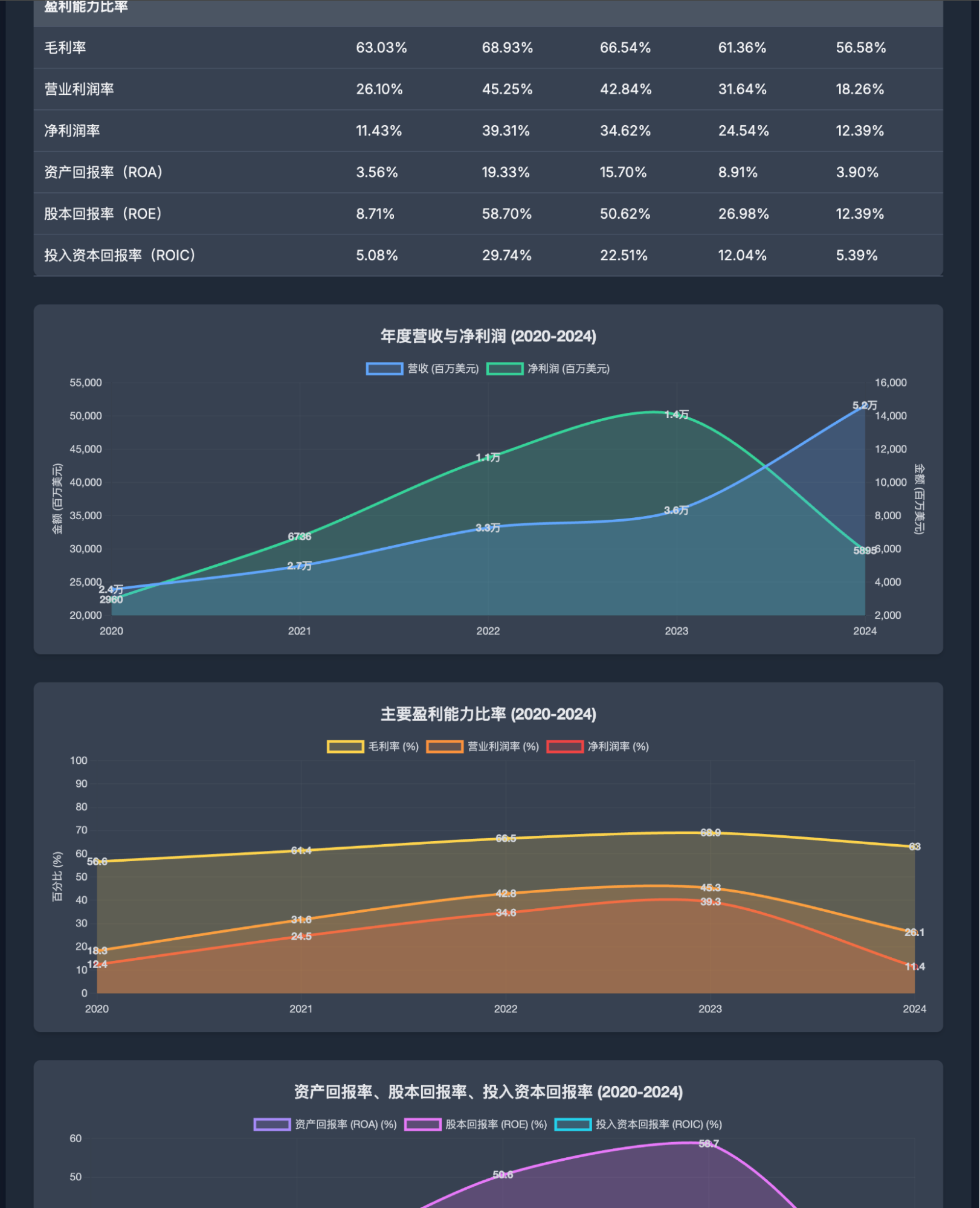

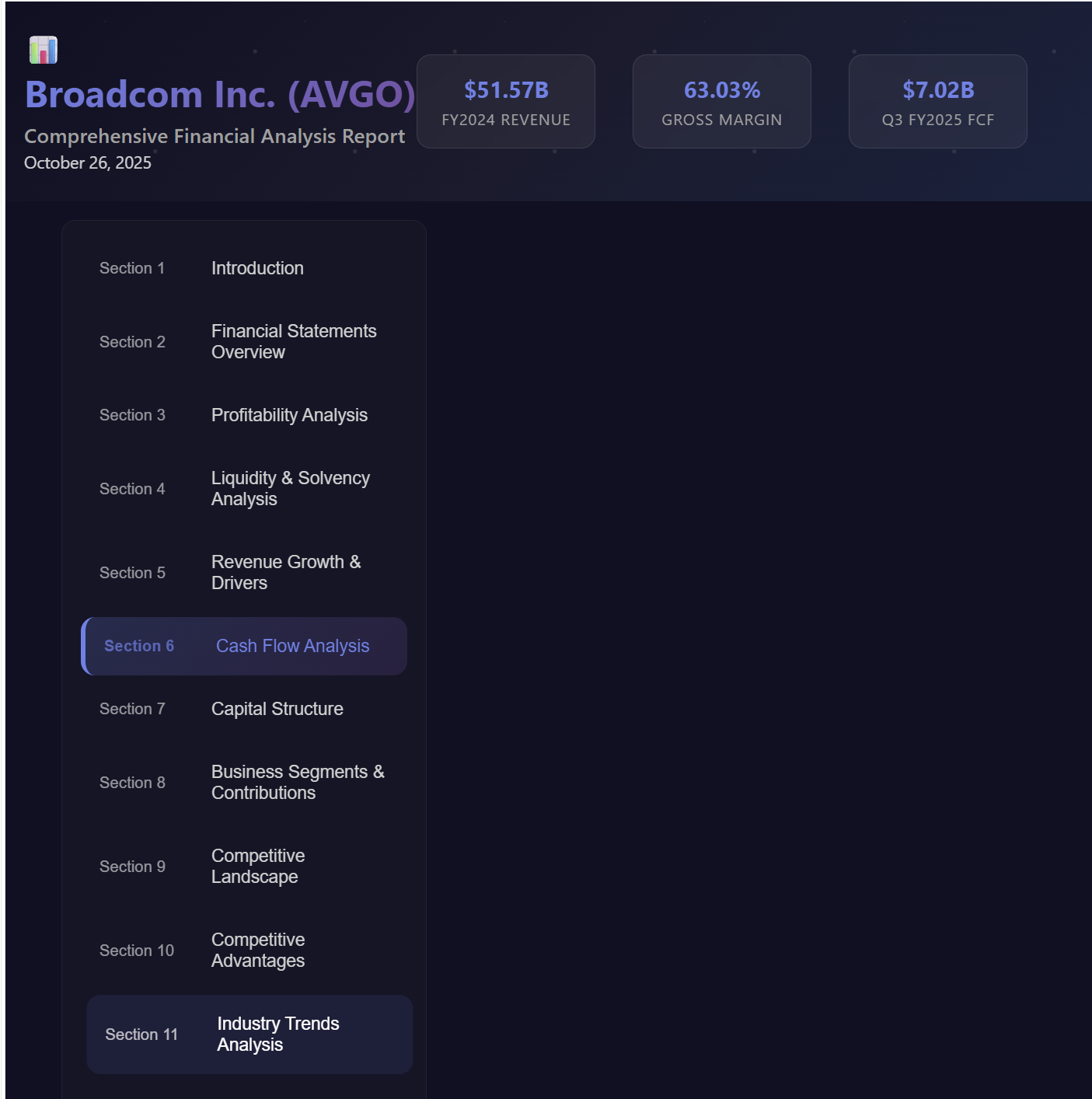

Moving into deeper testing, the differences became even more apparent. Over the weekend, I was working in Google AI Studio's Build environment, optimizing an "AI Financial Analyst" project that covers public companies. The project uses Gemini-2.5-Flash, which notably breaks previous output length limitations. For instance, for Broadcom (AVGO), it can output a complete 16-section report as a single file within a 64K output context window.

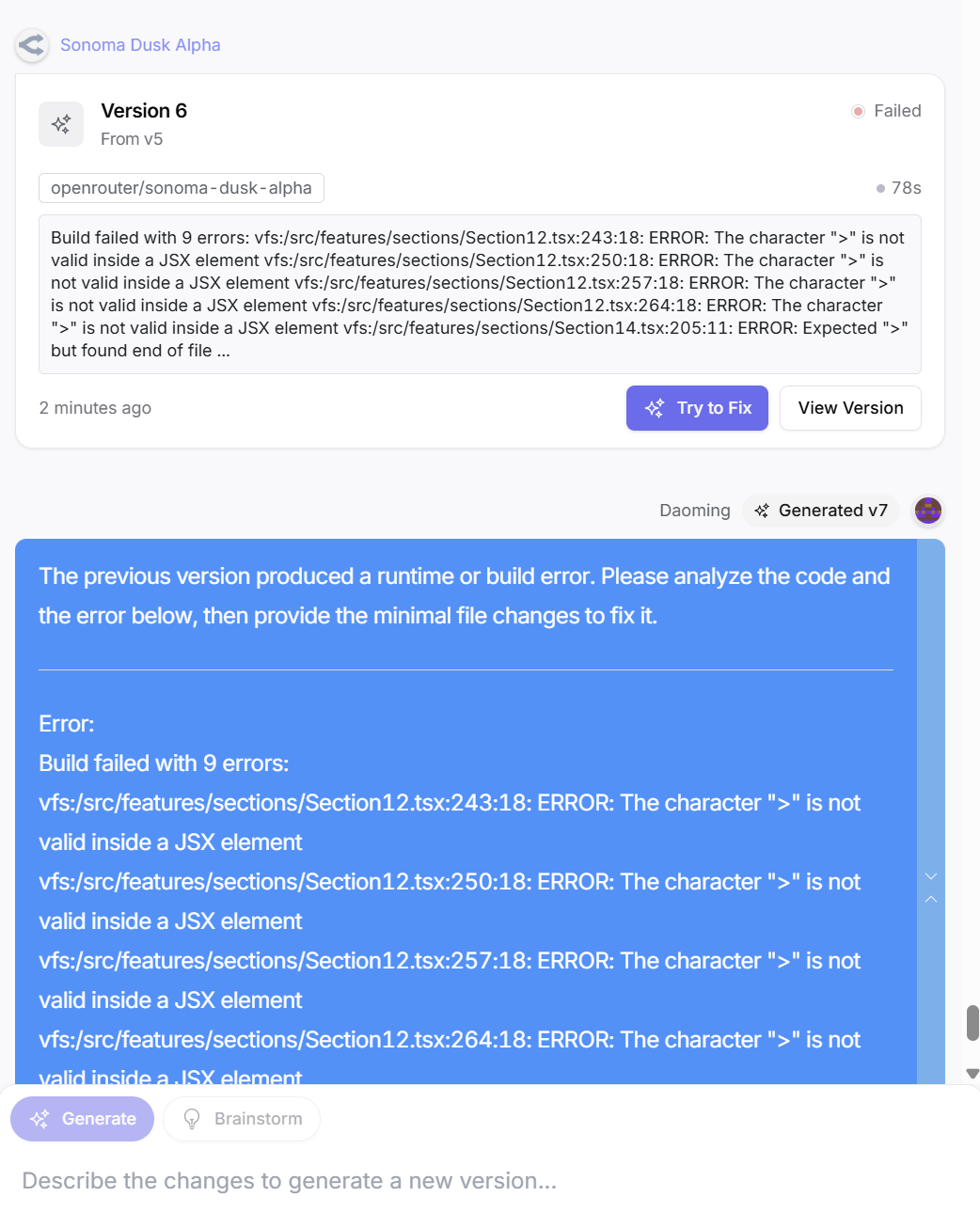

I fed the original report to both Sonoma models to generate a visualized website. While the generation speed was fast, neither version could get it right on the first try, repeatedly encountering various character encoding or syntax errors. It took six or seven "Try to Fix" prompts before the pages displayed correctly. This performance is not up to Gemini's standard, as Gemini has a very high first-pass success rate.

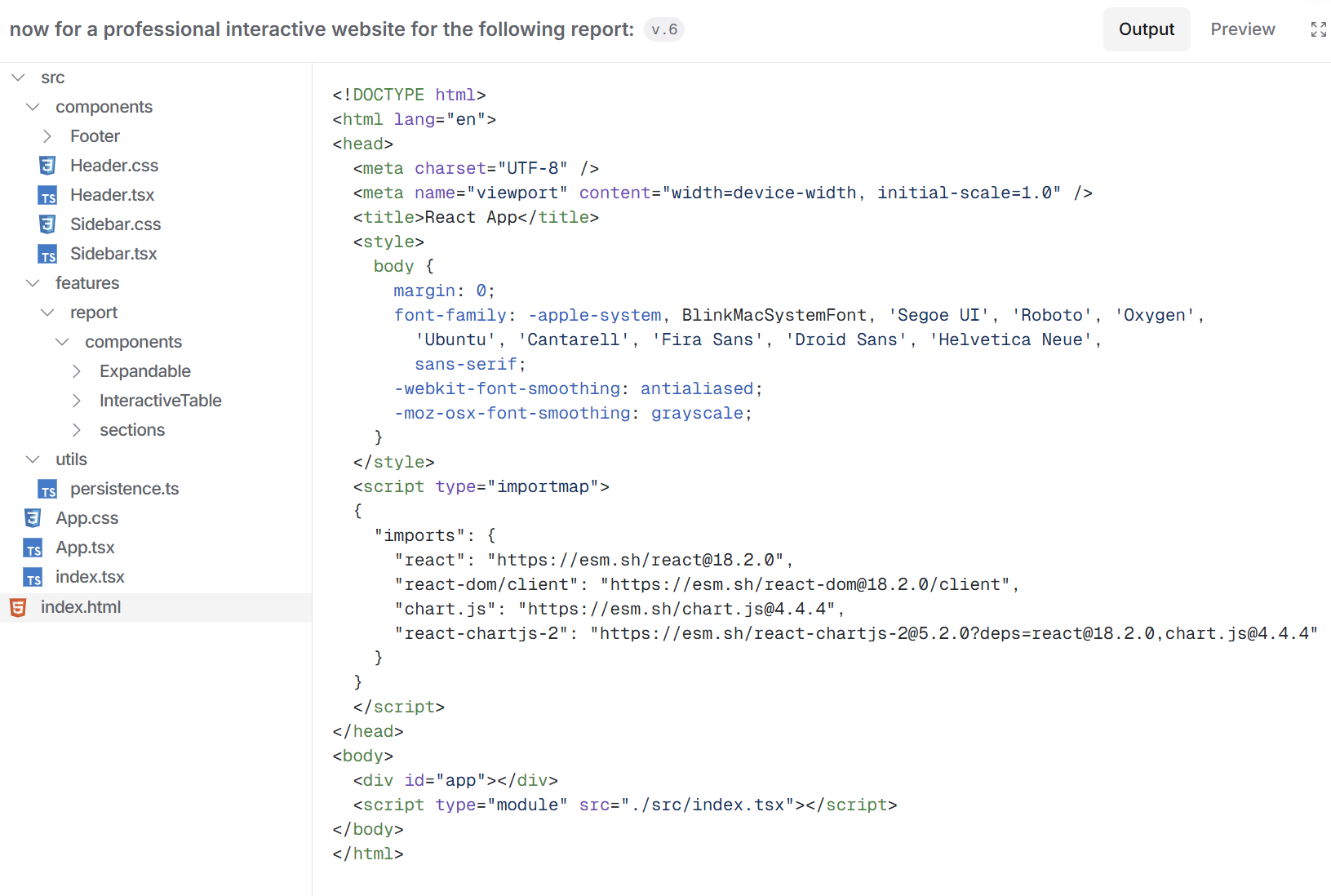

Here we can see the difference between the two models: the reasoning model "Sky" can output more content, which is directly reflected in a higher file count.

Looking at the code structure, Sky's performance is actually quite good. When I saw the code, I almost thought it was a Gemini model again because the architecture and the handling of importmap are very similar to Gemini. However, looking closely at the styling, it lacks the typical Google font usage, which dispelled that thought.
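The two signals mentioned here, an importmap and Google font usage, can be checked roughly with a short script. This is a minimal sketch, assuming the generated files are available as strings; the function name `fingerprint` and the choice of patterns are illustrative, and a match or miss is a stylistic hint, not proof of which model produced the code.

```python
import re

# <script type="importmap"> is the standard way to declare an import map in HTML.
IMPORTMAP_RE = re.compile(r'<script[^>]*type=["\']importmap["\']', re.IGNORECASE)

# Google Fonts are served from fonts.googleapis.com (CSS) and fonts.gstatic.com (files).
GOOGLE_FONTS_RE = re.compile(r'fonts\.(?:googleapis|gstatic)\.com', re.IGNORECASE)


def fingerprint(source: str) -> dict:
    """Report which of the two stylistic signals appear in a generated file."""
    return {
        "uses_importmap": bool(IMPORTMAP_RE.search(source)),
        "uses_google_fonts": bool(GOOGLE_FONTS_RE.search(source)),
    }


# Hypothetical snippet: importmap present, no Google Fonts link.
sample = """
<script type="importmap">{"imports": {"react": "https://esm.sh/react"}}</script>
<link rel="stylesheet" href="styles.css">
"""
print(fingerprint(sample))
```

Running the same check over every file in a generated project gives a quick tally to compare against known Gemini output.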

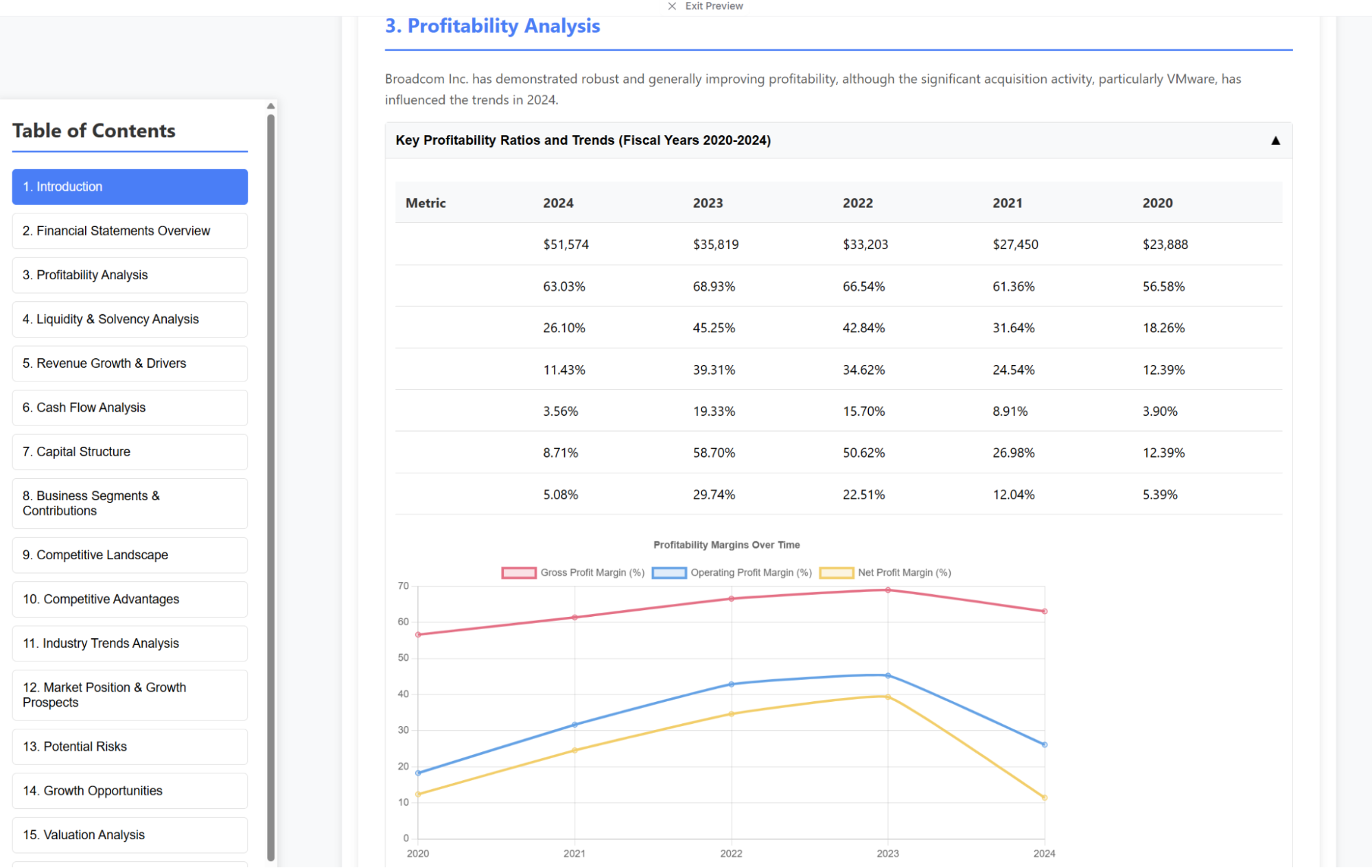

Below is the result generated by Sky. Overall it looks fine, but there are still various small display errors. Furthermore, the information density across 51 files is still inferior to Gemini-2.5-Flash's single HTML file (which was even bilingual).

For comparison, a similar section of Gemini-2.5-Flash's HTML version is shown here:

Compared to the reasoning "Sky" version, the "Dusk" version was quite poor: I requested modifications several times, but the content area simply failed to display.

I also tried giving both models prompts similar to what I gave Gemini-2.5-Flash to generate a single HTML file, but the results were a "disaster" and aren't worth posting.

Based on these observations, I am fairly certain that Sonoma is not Gemini-3. The reasons are simple: first, the aesthetic style is inconsistent with Gemini; second, its capabilities do not show a significant leap over Gemini-2.5. Additionally, the models currently have many problems in the finer details, which are ultimately "data" problems.