In the new year, I plan to loosen some of the "shackles" I've put on myself: I'll update my views seriously, but simply.

Excalidraw recently released an official MCP server, so I deployed it on Vercel and connected it to the Claude client. This let me go back to drawing with Excalidraw, as I did two years ago.

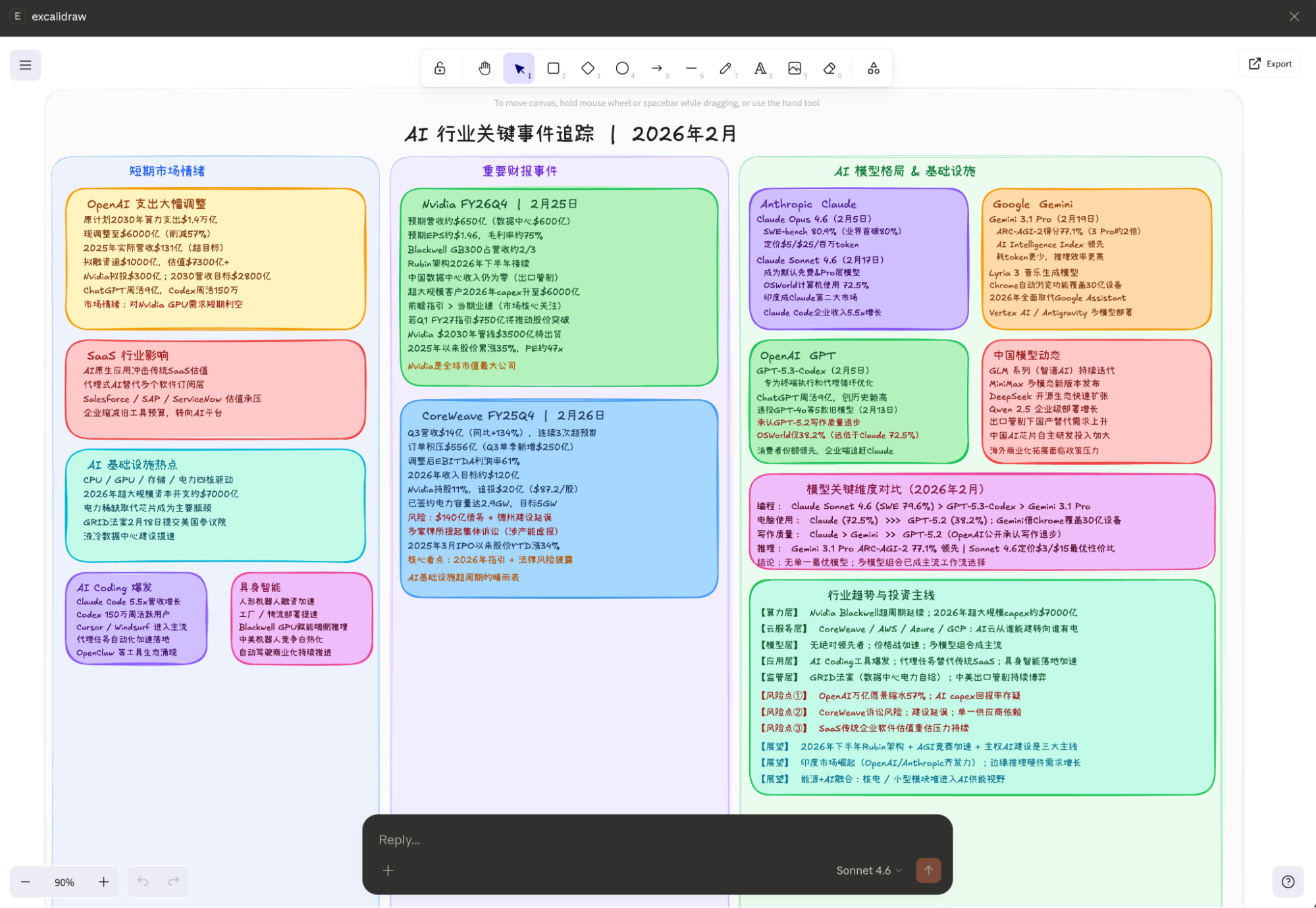

Below is a sketch I drew by hand, outlining the main topics I want to discuss.

The image below was generated by Claude-Sonnet-4.6 from the sketch above, which it updated and reformatted on its own (it represents Claude's views, not mine).

So the first topic is models and their impact on the software industry, taking this exercise of connecting Claude to Excalidraw as the example.

I. SaaS, OpenClaw, AI Applications, etc.

The smooth workflow above still deserves a brief explanation. At the beginning of 2025, I could already achieve similar results with Claude (for coding) plus Gemini (or ChatGPT, for research), but it took me about half a day. Now, configuring the MCP server takes about ten minutes, and Claude "optimizing" my hand-drawn sketch takes less than five minutes.

So, is this a huge step forward? Of course: the barrier to entry is much lower (meaning the number of users who benefit rises sharply), and the degree of automation is much higher.

But:

This absolutely does not mean an indiscriminate blow to SaaS companies. Claude will not reinvent the many wheels like Excalidraw. Even though MCP and Skills are involved, the content is still hosted on platforms like Vercel or Cloudflare. I didn't use my own private data here; if I had, data-stack and security services would still be needed. So, just as computing power pulls along the entire hardware chain, people will eventually find that deploying AI applications also depends on a very important software-stack infrastructure. We may see outsized revenue growth in a particular year or quarter, but over a longer horizon, say three to five years, growth in scale will prove more substantial and more stable, and user moats deeper.

OpenClaw has also taken off. My workflow doesn't currently need an all-in-one framework like this, just as I never used LangChain, Dify, or n8n even though I recommended them; with AI Coding, I can build these "wrapper" layers myself. So from a long-term perspective, applications of this type aren't worth spending much time on; they are ultimately just passers-by.

II. Model Updates

On the Google front, Gemini-3.1-Pro and the music model Lyria-3 were released. On the Anthropic front, Claude-4.6 Opus and Sonnet were launched. OpenAI released GPT-Codex-5.3, and domestic models such as GLM, MiniMax, and Qwen have all been updated.

In fact, benchmarks have basically lost their reference value; subjective and objective evaluations of long-running tasks in the real world now matter more. There are two problems here, however. The first is captured by the saying "in letters there is no undisputed first, but in martial arts there is": where there is a clear measure, there is a clear winner. Claude remains the model with the strongest programming ability, so users focused on AI Coding will basically choose Claude. For knowledge- and data-heavy multimodal processing, Gemini is a stable, reliable option. The second problem is that as deployment scenarios multiply and more tools and workflows are built around a model, migrating away from it becomes increasingly difficult.

Of course, the continued progress of domestic models provides more choices, but model scale (MoE architectures with a trillion or more parameters) remains a significant issue, and it looks set to keep growing in the coming years. This raises fundamental problems such as serving costs and private deployment, especially as HBM capacity in frontier chips doubles and memory prices rise sharply.
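To make the deployment problem concrete, here is a rough back-of-the-envelope sketch of the memory needed just to hold the weights of a trillion-parameter MoE model. The bytes-per-parameter values and the 192 GB of HBM per accelerator are illustrative assumptions, not figures for any specific product. The estimate ignores KV cache, activations, and framework overhead; it also assumes all experts stay resident, as is typical when serving MoE models, since different tokens route to different experts.

```python
import math


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed to store model weights, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def accelerators_needed(num_params: float, bytes_per_param: float, hbm_gb_per_device: float) -> int:
    """Minimum number of devices whose combined HBM can hold the weights alone.

    Ignores KV cache, activations, and framework overhead, so real deployments need more.
    """
    return math.ceil(weight_memory_gb(num_params, bytes_per_param) / hbm_gb_per_device)


# Illustrative assumptions (not vendor specs): 1-trillion-parameter model, 192 GB HBM per device.
PARAMS = 1e12
HBM_GB = 192

for label, bytes_per_param in [("BF16", 2.0), ("FP8", 1.0), ("4-bit", 0.5)]:
    gb = weight_memory_gb(PARAMS, bytes_per_param)
    n = accelerators_needed(PARAMS, bytes_per_param, HBM_GB)
    print(f"{label}: {gb:,.0f} GB of weights -> at least {n} devices")
```

Under these assumptions, the weights alone take about 1 TB at FP8, which means at least 6 such devices before any serving overhead. That is why rising HBM configurations and memory prices feed directly into serving cost and make private deployment hard.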

Among model companies, perhaps only Google has enough revenue and ecosystem reach to sustain this; Anthropic's revenue is growing rapidly, but it still faces high risks.

The more models progress, and the wider their applicability and penetration, the more the benefits actually flow to hardware. This is a huge, unavoidable awkwardness in the development of AIGC models. For model companies, therefore, the only way to survive is to use massive capital expenditure to lock in scarce power and computing resources, keeping competitors out.

III. OpenAI Expenditure Reduction

This news gained traction over the holiday. According to a CNBC report, OpenAI's planned expenditure through 2030 is $600 billion, a significant drop from the $1.4 trillion it had cited in multiple public appearances.

This is, of course, somewhat bearish news (though it is in line with the forward-looking views I have held since the fourth quarter of last year), but I think the current commentary misses the point. Neither rational calculation nor market consensus ever seems to have truly believed the $1.4 trillion figure; the market had already read OpenAI's "moonshots" as a bubble. So $600 billion may be slightly below consensus, but the market reaction naturally won't be on the scale of a cut from $1.4 trillion to $600 billion. From another angle, if the $600 billion is highly likely to actually be spent, it serves as a very good floor.

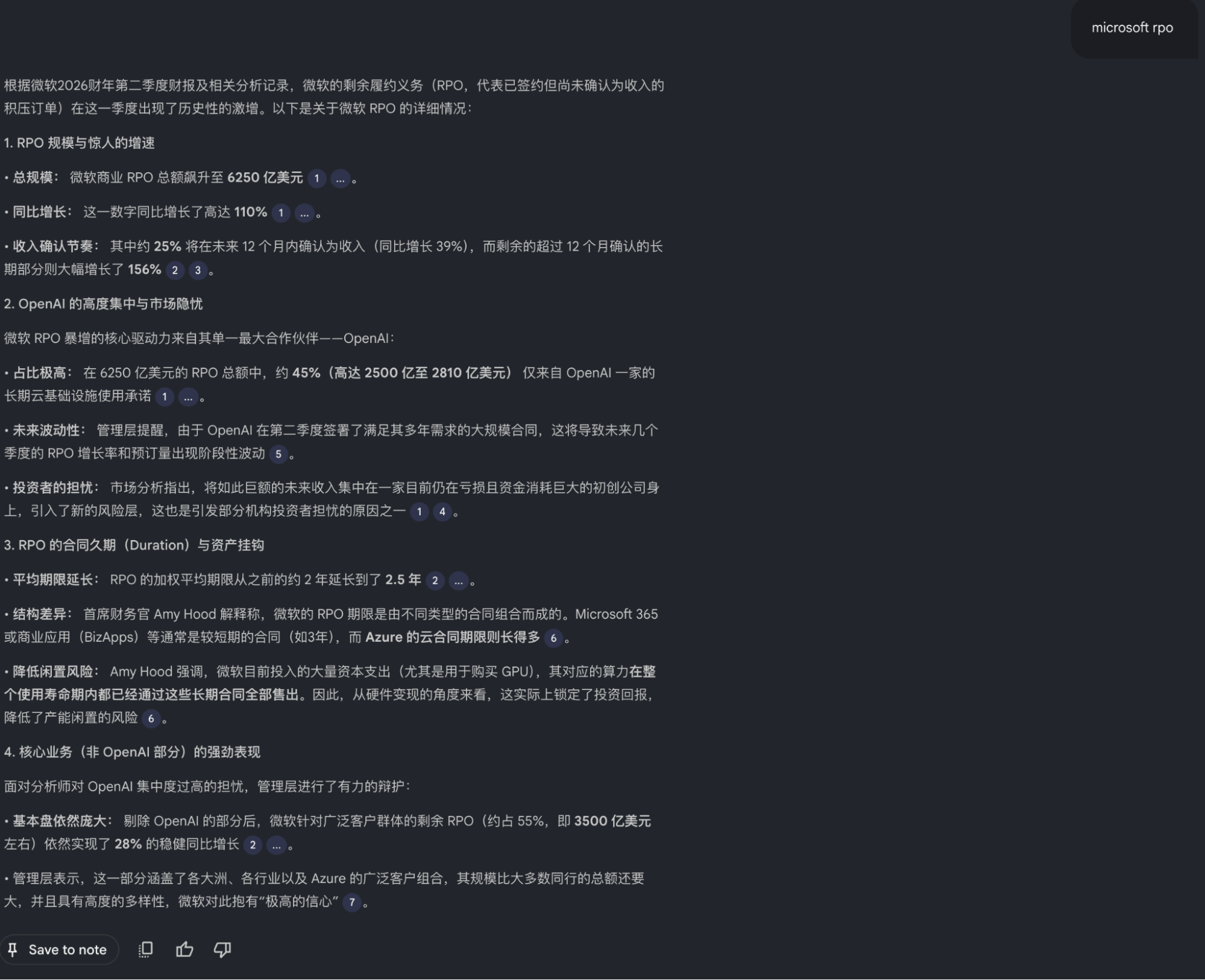

Here I want to use Microsoft's RPO (remaining performance obligations) as a reference, because it is a more credible number (even though I'm not bullish on OpenAI, I have never considered its bankruptcy a likely scenario). This RPO has an average term of 2.5 years, and OpenAI's portion is approximately $250-281 billion. I tend to believe this figure is more consistent with the $600 billion spending figure before 2030. Of course, this also means OpenAI's future plans may depend on Microsoft more than the market originally expected. Meanwhile, given the new funding round and the highly credible news that Nvidia invested about $30 billion (reported by all major outlets), the scale of OpenAI's "cooperation" with other hardware suppliers is quite likely to shrink.
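As a rough cross-check of this comparison, the sketch below uses only the figures quoted above. It expresses the $250-281 billion of OpenAI-related RPO as a share of the $600 billion spending figure. It also shows what the RPO would amount to per year if it were recognized evenly over the 2.5-year average term. Even recognition is a simplifying assumption made here for illustration; actual recognition schedules need not be linear.

```python
def share_of_total(part_bn: float, total_bn: float) -> float:
    """Fraction of a spending total represented by one commitment (both in $ billions)."""
    return part_bn / total_bn


def even_annual_run_rate(obligation_bn: float, avg_term_years: float) -> float:
    """Annual amount if an obligation were recognized evenly over its average term.

    Even recognition is a simplifying assumption, not how RPO is actually scheduled.
    """
    return obligation_bn / avg_term_years


OPENAI_SPEND_TO_2030_BN = 600.0  # reported spending plan through 2030
AVG_RPO_TERM_YEARS = 2.5         # average RPO term cited in the text

for rpo_bn in (250.0, 281.0):  # low and high ends of the OpenAI-related RPO range
    share = share_of_total(rpo_bn, OPENAI_SPEND_TO_2030_BN)
    annual = even_annual_run_rate(rpo_bn, AVG_RPO_TERM_YEARS)
    print(f"RPO ${rpo_bn:.0f}B: {share:.0%} of $600B, ~${annual:.0f}B/year if recognized evenly")
```

On these numbers, commitments to Microsoft alone would account for roughly 42% to 47% of the $600 billion. That is consistent with the point that OpenAI may lean on Microsoft more than the market expected.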

Additionally, if the news is true, it could ease concerns about "excessive capital expenditure by big tech companies." The sharply increased CAPEX plans were originally a forced move in a resource grab: a prisoner's dilemma. In offline discussions in Q4 last year, I said the way out of this prisoner's dilemma is for a player like OpenAI to prove, in effect, unable to deliver on its "exaggerated promises."

The market, then, is very "smart." When Microsoft, Google, and Amazon released CAPEX plans far above expectations, not only did their stock prices fall, but the computing power sector, which should have benefited, barely moved. I said last year, and stressed again in my earnings commentary, that big tech would definitely announce very high CAPEX plans out of competitive and strategic necessity, but how much they actually spend would be a different number. I believe the market likewise didn't "believe" those spending figures, and I see no need to adjust my earlier predictions of actual spending.

As I've said before: in the end, this world is physical.

IV. What About Embodied AI?

This world is physical. Honestly, I don't really want to talk about the robots at the Spring Festival Gala; the topic is too sensitive.

I also can't argue bullish or bearish from my own position; we should listen to the market. The situation is what it is, and how the market performs depends entirely on the market itself. For example, the Hong Kong market responded well to large-model companies (commentary on that is beyond my expertise).

However, I have two thoughts on this issue, and both have been reinforced. First, as long as there is data, anything can be achieved; the Spring Festival Gala performance demonstrated this. Debating whether it was pre-programmed is meaningless; those in the know understand what's going on: the technical content is not low at all, it's quite high.

The second thought is that embodied AI is much harder than I thought a few years ago, perhaps five to ten orders of magnitude harder. This view was significantly strengthened after I carefully read some books on cognitive psychology and neuroscience over the holiday, and by my own experience taking photos recently. I think the real difficulty is data; we might not even know what data we are missing.

V. So, Everything Revolves Around Data

From training to application, models are all about data. We don't even need to discuss specific tasks like Coding, multimodality, or video generation: entering the AI era in itself means continuous, exponential growth in data, on an entirely different scale from "Big Data."

This is where the long-term logic for power, GPUs, storage, and CPUs lies. Of these, power seems the most stable, since one investment pays off for decades. Storage has the greatest elasticity, because within the same footprint its value can multiply through higher capacity and bandwidth. But all of these ultimately deliver value through GPUs (or ASICs and other compute chips) and CPUs. In the long run, the CPU is likely the component most constrained by physics (process nodes), and its architecture is too mature for any major breakthrough in the short to medium term. Storage is elastic, but after a period of frenzy, its position in the industry chain is arguably mostly priced in. I have always believed memory is a vital core component, but at this stage, from a risk-reward perspective, it is probably on par with GPUs and packaging.

Of course, one variable involves reversed causality: how high a valuation the market is willing to assign to a "super cycle" or to "significantly weakened cyclicity." The valuation level is a result, not a cause, which is why I call it reversed causality.

We can only let the market tell us what its mood is.

VI. Important Earnings Reports

Nvidia's earnings report next week will likely beat market expectations again. However, as the previous section concluded, we cannot predict how sentiment will react. We still have clear points of focus, though. I expect analysts on the conference call to ask about the investment in OpenAI (the rumored $30 billion), OpenAI's spending cut, Rubin's progress, and so on.

As for the report itself, I think gross margin and the gross margin outlook will be key things to watch. With memory prices up so much, NV's real pricing power over its suppliers and customers, and how that may change, will largely determine how long the "madness" lasts. After all, in consumer markets such as PCs and mobile phones, we are seeing significant negative feedback for the first time.

As for CoreWeave, I can't predict its results. It matters, but less than it did in previous quarters. Its performance will at most affect a few neocloud companies and won't have a major impact on the industry chain. The focus has returned to the core companies, especially now that model competition and CAPEX competition have reached this magnitude.

VII. Final Point: Perhaps certainty will be the most important thing starting in 2026.

PS: As you can see, what I write is still different from the AI's output, even though my sketch in the first step constrained what it could produce. I'm only saying it's different, though; objectively, I believe its accuracy is probably higher than mine.

But I also noticed a few things. The Spring Festival Gala certainly had plenty of tech flair; that information-packed style used to be my favorite PPT style, but I suddenly started to feel something wasn't quite right. Bilibili's so-called Spring Festival Gala was only average in absolute terms, but you could see individual "people" and plenty of surprises. Xiaohongshu has started running ads with the slogan "Real Person Answers"...

A "sense of a living person."