Coming back to work after a stretch of "detoxing from AI" always brings surprises. Stepping out of a continuous working state, we humans lose much of our memory of the work in progress, and that forces our "attention" to shift. The goal stays roughly in focus, but the means and the process change significantly.

The greatest benefit of the AI era is that the cost of starting over keeps falling.

Restarting a project means rethinking functionality, redoing architectural design, redoing...

Another big difference in the AI era, it seems, is that this path no longer fits well: requirements no longer need to be analyzed and designed up front. They only need to be implemented, and then you choose whether to keep, delete, or improve the result.

A human's starting point should be an image, a page, or a video or animation. It is perception, not analysis.

Then, while writing this, I got distracted and went to ChatGPT and Gemini to generate two infographics.

Even though OpenAI released the so-called GPT-Image-1.5, the gap with Nano Banana Pro is still obvious. Some people will "seriously" argue that Gemini's "aesthetic" is better than GPT's, but what "aesthetic" can an AI have? Everything is data. The Gemini series models simply have much more "knowledge," or rather data, than GPT.

It is the "knowledge" behind this data that lets us search, program, and design with the help of models...

It is also the "knowledge" behind this data that lets us watch Google roll out AI applications in rapid succession (like "dropping dumplings into a pot") and then iterate quickly when the underlying model upgrades from 2.5 to 3.

If every application adds new functions while also upgrading its underlying model, the combined effect of these applications is not additive but multiplicative, an expansion across dimensions. When the problem comes back to the human, it is no longer about analyzing requirements but about a very intuitive mental image: what do I want?

The starting point is no longer design documents, but the applications themselves. In Google's case, the two applications I consider most revolutionary in the second half of 2025 are Stitch and Build. The former handles UI, and the latter handles implementation. It's not Vibe Coding, but Vibe Building.

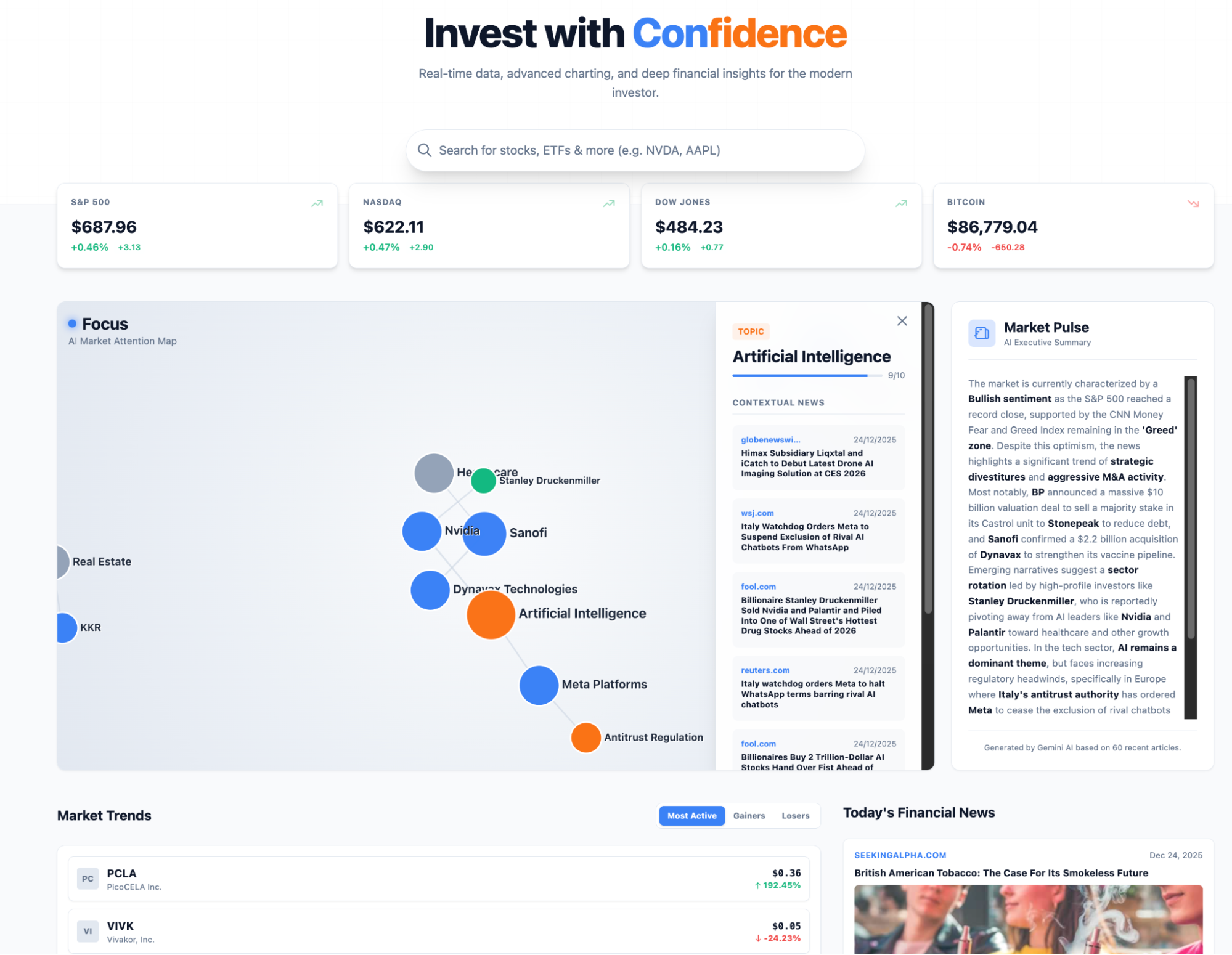

Starting from scratch, I still want to upgrade my market analysis Dashboard. I want to reorganize the structure and add some new features and workflows. I simply describe to Stitch the existing screenshots and the new features I want, and the UI design is done. The new version of Stitch even supports generating demos directly; as shown in the bottom right of the image below, the interactive effects are already there.

Then, hand the prototype over to Build. Vibe Building is just that simple.

Even though AntiGravity, released alongside Gemini-3, is great, and Claude Code and Codex are strong, the software (app or tool) I use most often is still Build in AI Studio. If we are moving rapidly from Cloud Native to AI Native, then perhaps no coding tool is more suitable than Build: ultimately, it is AI consuming massive resources, rather than software hand-cranked from code, that will drive the future. Code on top of AI is essentially a "patching" tool; it cannot be the system itself.

Simple, direct, one tool solving a class of "last mile" problems: this might not yet be called AI Native, but it's at least closer.

So, the question arises: Is AGI near? Or, is it possible that AGI is ourselves?

I side with the latter.

At the same time, I strongly recommend Stitch, and even more so the AI Design behind it. If you find it a bit too rigid, you must try Mixboard or Pomelli from Google Labs.

Because I love simple processes and UIs, and canvases that are filled up.