In the AI era, there is a general consensus: tokens have replaced traffic as the most fundamental metric.

Yes, this shift is massive, signifying a transition from attention to results, and representing a fundamental change in the underlying economics.

I do not intend this piece to be a long treatise: while the model has yet to take definitive shape, long-winded arguments carry little weight.

It consists of roughly three simple parts: 1. Revenue models; 2. Current tracking systems; 3. Observable trend changes.

First, the revenue models.

In the traffic era, users trade their time and data for a wealth of free services. For some content, such as long-form video streaming, platforms introduce paid subscriptions, and users may even pay separately for specific content.

Put simply, because "user time" is scarce, it is widely traded for "free services." Users who want higher-quality content must "pay."

However, this has been a process of continuous commercial "squeezing." Under free competition, long-form video platforms that charge for content hit their "imagination ceiling" long ago and quickly became a "slow market" in which production and operational capabilities are the core competencies. Beneath the internet facade they are essentially media companies, because the user's "willingness to pay" is fixed. By contrast, the "free services in exchange for user time" model seems to have more headroom: attention can rise from 1 hour per day to N hours, until it almost feels like "sleep is no longer necessary."

This can be summarized by two "absurd statements": content with production costs has a clear revenue ceiling, while so-called "content" with "near-zero cost" has infinite potential.

But the core reason remains that "user time" is scarce, while user consumption power has a clear ceiling: reputation and word-of-mouth do not necessarily translate into monetization.

Now, entering the age of AI and generative technology, all content has a cost. Furthermore, within any given period, marginal cost does not fall, because hardware and software costs are fixed over that period.

While there is still a massive amount of free user acquisition, free services, user subscriptions, and payment for content via APIs, it ultimately boils down to one fact: token production is not free.

No matter how complex the billing method, it generally falls within an analytical framework of competition (model leadership and monopoly), cost, and supply-demand relationships. We won't discuss scenarios after the emergence of AGI or ASI; that beautiful vision of "AI completely benefiting humanity with extreme social abundance" is currently beyond our imagination.

So, simply put, it is a SWOT-like framework for tokens. However, a true analysis involves a very complex structure at both technical and economic levels. Throughout this year, I have been trying to build this structure, which has now evolved into a tracking system.

Clearly, the factors mentioned above lack stable, continuous data. However, they are sufficient for us to perform a simple quantitative analysis based on qualitative foundations.

When everything eventually returns to one question—"How many tokens were processed? What was the cost? How much revenue can be generated?"—many answers regarding the industrial chain become clear.

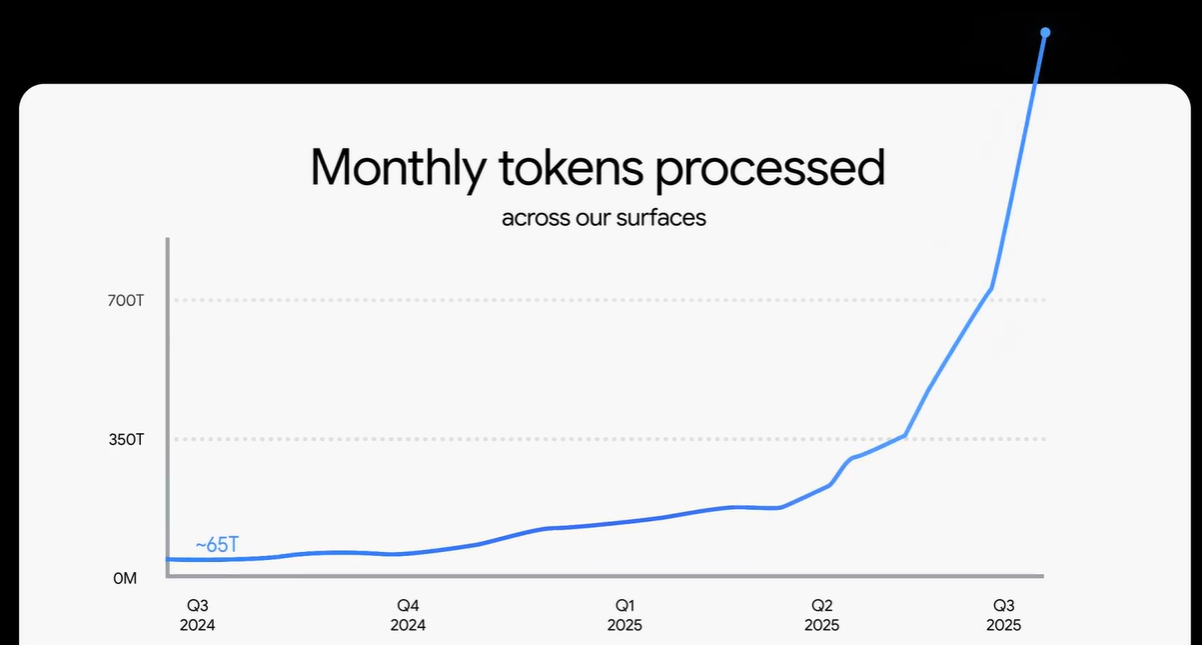

Where do we start? We start with the token processing volumes disclosed periodically by Google.

At the Google Cloud conference earlier this month, another disclosure was made: 1.3Q (quadrillion) tokens are processed per month, which is 1,300 trillion, or 1.3 billion units of 1M tokens (this conversion is used because tokens are priced per million).

Google drew a growth curve that looks very steep. However, if we recall correctly, they previously disclosed a figure of 980T for June 2025, so the increase since then is only about 33%.
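The unit conversion and the comparison between the two disclosures can be checked with a few lines of arithmetic. This is a minimal sketch, assuming that the 980T figure is also a monthly volume and that both disclosures count tokens the same way; the constant and function names are illustrative only.

```python
# Figures quoted in the text; treating both as monthly volumes is an assumption.
MONTHLY_TOKENS_LATEST = 1.3e15    # 1.3Q tokens per month (latest disclosure)
MONTHLY_TOKENS_JUNE_2025 = 980e12  # 980T tokens (June 2025 disclosure)
TOKENS_PER_PRICING_UNIT = 1_000_000  # list prices are quoted per 1M tokens


def pricing_units(tokens: float) -> float:
    """Convert a raw token count into billable units of 1M tokens."""
    return tokens / TOKENS_PER_PRICING_UNIT


def growth_ratio(old: float, new: float) -> float:
    """Ratio of the newer figure to the older one (1.0 means no growth)."""
    return new / old


units_latest = pricing_units(MONTHLY_TOKENS_LATEST)
ratio = growth_ratio(MONTHLY_TOKENS_JUNE_2025, MONTHLY_TOKENS_LATEST)

print(f"{units_latest / 1e9:.2f}B units of 1M tokens per month")
print(f"growth since June 2025: {(ratio - 1) * 100:.1f}%")
```

Under these assumptions the latest figure is about 1.33 times the June 2025 one.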

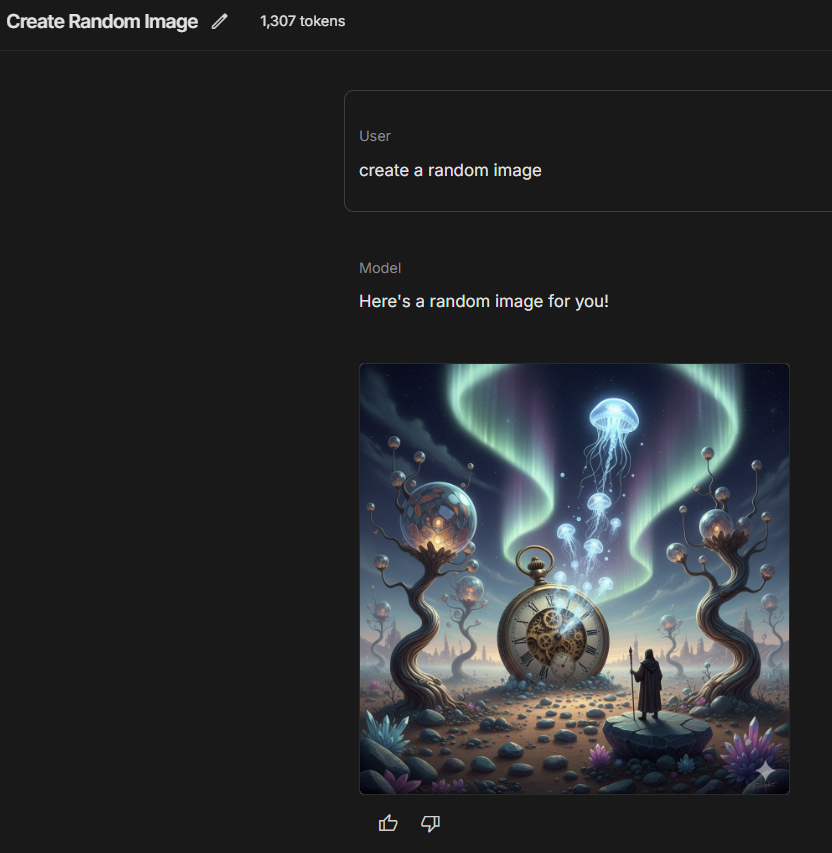

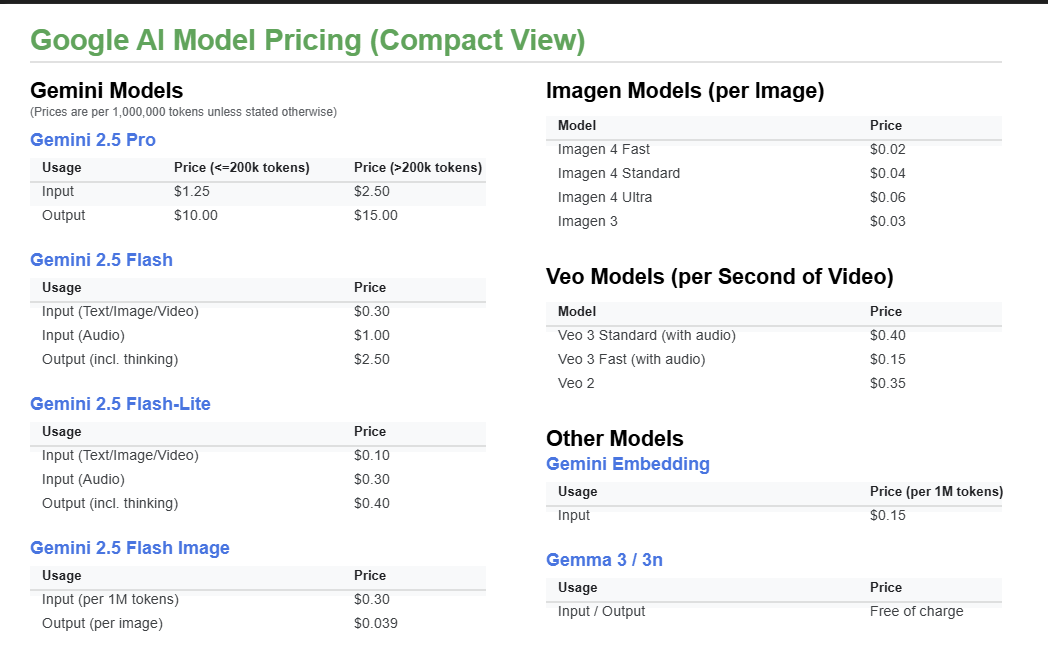

Google also disclosed figures of 13 billion images and 230 million videos. According to AI Studio, one image counts as roughly 1,300 tokens, which is basically consistent with Google's official pricing.

The Flash model's text output price is $2.5 per million tokens. At 1,300 tokens per image, one million tokens can generate roughly 770 images, and each image is priced at roughly $0.039. Note that $0.039 per image implies an image-output rate of about $30 per million tokens rather than the $2.5 text rate. Video is $0.15 per second, and a typical video lasts 8 seconds.
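As a quick sanity check on the unit prices quoted above, the sketch below derives the number of images per million tokens, the per-million rate implied by a $0.039 image, and the price of a typical video. All inputs are the figures from the text; the variable names are illustrative, and the "implied rate" is a derived quantity, not a number quoted by Google in this article.

```python
# Inputs taken from the text above.
TOKENS_PER_IMAGE = 1_300
PRICE_PER_IMAGE_USD = 0.039
FLASH_TEXT_OUTPUT_USD_PER_M = 2.5
VIDEO_USD_PER_SECOND = 0.15
TYPICAL_VIDEO_SECONDS = 8

# How many images fit into one million output tokens.
images_per_million_tokens = 1_000_000 / TOKENS_PER_IMAGE

# Per-million-token rate that would make one image cost $0.039.
implied_image_rate_usd_per_m = PRICE_PER_IMAGE_USD / TOKENS_PER_IMAGE * 1_000_000

# What one image would cost if billed at the Flash text output rate instead.
image_cost_at_text_rate = TOKENS_PER_IMAGE * FLASH_TEXT_OUTPUT_USD_PER_M / 1_000_000

# Price of one typical video clip.
price_per_video_usd = VIDEO_USD_PER_SECOND * TYPICAL_VIDEO_SECONDS

print(f"images per 1M tokens: {images_per_million_tokens:.0f}")
print(f"implied image rate:   ${implied_image_rate_usd_per_m:.2f} per 1M tokens")
print(f"image at text rate:   ${image_cost_at_text_rate:.5f}")
print(f"typical video:        ${price_per_video_usd:.2f}")
```

The gap between the implied image rate and the text rate suggests that image output is billed on its own schedule, which is why the per-image price, not the text rate, is the safer input for any revenue estimate.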

Therefore, we can perform a static calculation of the revenue status:

The monthly token volume is 1.3Q. Since Google says "processed," it is reasonable to assume this covers both input and output, as well as images and videos. I use a high-estimate approach: count images and videos separately, assume input and output volumes are equal (though in the long-context era, input is often much higher than output), and assume Flash accounts for 80% of the volume and Pro for 20%. The result is 1.3B * [(0.3 + 2.5) / 2 * 0.8 + (2.5 + 15) / 2 * 0.2] = 1.3B * 2.87 = $3.73B per month.
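The static calculation above can be written as a small parameterized function, which makes it easy to see how sensitive the result is to the input/output split. This is a sketch under the stated assumptions: the `ModelTier` class and `blended_price` function are hypothetical names, and reading 0.3 and 2.5 as the input prices (with 2.5 and 15 as the output prices) is my interpretation of the formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    input_usd_per_m: float   # price per 1M input tokens (assumed reading)
    output_usd_per_m: float  # price per 1M output tokens (assumed reading)
    volume_share: float      # fraction of total token volume


def blended_price(tiers: list[ModelTier], input_fraction: float = 0.5) -> float:
    """Volume-weighted average price per 1M tokens across tiers."""
    return sum(
        t.volume_share
        * (input_fraction * t.input_usd_per_m
           + (1 - input_fraction) * t.output_usd_per_m)
        for t in tiers
    )


TIERS = [
    ModelTier("Flash", input_usd_per_m=0.3, output_usd_per_m=2.5, volume_share=0.8),
    ModelTier("Pro", input_usd_per_m=2.5, output_usd_per_m=15.0, volume_share=0.2),
]
MONTHLY_UNITS_OF_1M = 1.3e9  # 1.3Q tokens / 1M

price_equal_split = blended_price(TIERS)            # input == output
revenue_equal_split = MONTHLY_UNITS_OF_1M * price_equal_split

price_input_heavy = blended_price(TIERS, input_fraction=0.9)
revenue_input_heavy = MONTHLY_UNITS_OF_1M * price_input_heavy

print(f"equal split:  ${price_equal_split:.2f}/1M, ${revenue_equal_split / 1e9:.2f}B per month")
print(f"90% input:    ${price_input_heavy:.2f}/1M, ${revenue_input_heavy / 1e9:.2f}B per month")
```

With an equal split this reproduces the $3.73B figure. If input were 90% of the volume instead (a purely illustrative value), the same prices would give about $1.17 per million and roughly $1.5B per month, which is why the equal split counts as a high estimate.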

To date, the potential revenue from images and videos is $0.507B (13B images at $0.039 each) and $0.276B (230M videos at 8 seconds and $0.15 per second) respectively.
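The image and video figures follow directly from the disclosed counts and the unit prices quoted earlier. A minimal sketch, assuming every video runs the typical 8 seconds and every image is billed at $0.039; note that these counts are cumulative ("to date"), so they are not added to the monthly token estimate here.

```python
# Disclosed cumulative counts from the text.
IMAGES_GENERATED = 13e9
VIDEOS_GENERATED = 230e6

# Unit prices from the text; uniform pricing is an assumption.
PRICE_PER_IMAGE_USD = 0.039
VIDEO_USD_PER_SECOND = 0.15
TYPICAL_VIDEO_SECONDS = 8

image_revenue_usd = IMAGES_GENERATED * PRICE_PER_IMAGE_USD
video_revenue_usd = VIDEOS_GENERATED * TYPICAL_VIDEO_SECONDS * VIDEO_USD_PER_SECOND

print(f"images: ${image_revenue_usd / 1e9:.3f}B")
print(f"videos: ${video_revenue_usd / 1e9:.3f}B")
```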

However, Google obviously does not realize anywhere near this revenue, because a large portion of the volume is entirely "free." This includes usage by free users (including AI Studio's free quota) and, more importantly, AI Overviews in search. In fact, the massive increase in Google's token volume is likely driven by the introduction of AI Overviews. Daily search volume is currently just under 2B, and AI Overviews basically cover all of it. If we assume half of those searches trigger an overview with an average of 250 output tokens, that is roughly 250B tokens per day (500B if every search triggered one), and that is output only. Since AI Overviews are generated from vast search results as input, multiplying that number by 10 would not be an exaggeration.
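The back-of-envelope AI Overviews estimate above depends on three guesses: how many searches trigger an overview, how long the answer is, and how much input is consumed per answer. The sketch below keeps all three as parameters. The function name and the 30-day month are illustrative assumptions, and the factor of 10 is the article's rough multiplier, not a measured value.

```python
def overview_tokens_per_day(
    searches_per_day: float,
    enabled_share: float,
    output_tokens_per_answer: float,
    total_to_output_multiplier: float = 1.0,
) -> float:
    """Estimated daily tokens spent on AI Overviews.

    total_to_output_multiplier scales output tokens up to total tokens
    (1.0 means output only).
    """
    output_tokens = searches_per_day * enabled_share * output_tokens_per_answer
    return output_tokens * total_to_output_multiplier


SEARCHES_PER_DAY = 2e9   # "just under 2B" from the text, rounded up
TOKENS_PER_ANSWER = 250  # assumed average output length

half_enabled_output = overview_tokens_per_day(SEARCHES_PER_DAY, 0.5, TOKENS_PER_ANSWER)
all_enabled_output = overview_tokens_per_day(SEARCHES_PER_DAY, 1.0, TOKENS_PER_ANSWER)
half_enabled_total = overview_tokens_per_day(SEARCHES_PER_DAY, 0.5, TOKENS_PER_ANSWER, 10)

DAYS_PER_MONTH = 30  # simplifying assumption
print(f"output/day, half enabled: {half_enabled_output / 1e9:.0f}B")
print(f"output/day, all enabled:  {all_enabled_output / 1e9:.0f}B")
print(f"total/day, half, x10:     {half_enabled_total / 1e12:.1f}T")
print(f"total/month, half, x10:   {half_enabled_total * DAYS_PER_MONTH / 1e12:.0f}T")
```

Because every input here is a guess, the output is best read as an order of magnitude rather than a point estimate.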

Given Gemini's rapidly growing market share over the past few months, and considering that Gemini's token consumption per scenario may be larger than GPT's, OpenAI's current potential revenue capacity is likely significantly lower than this figure. The 2025 ARR of $12.3B reported by several media outlets therefore seems plausible.

As for the Claude model, its usage is primarily driven by coding; the volume is large and growing fast, but the number of power users is limited.