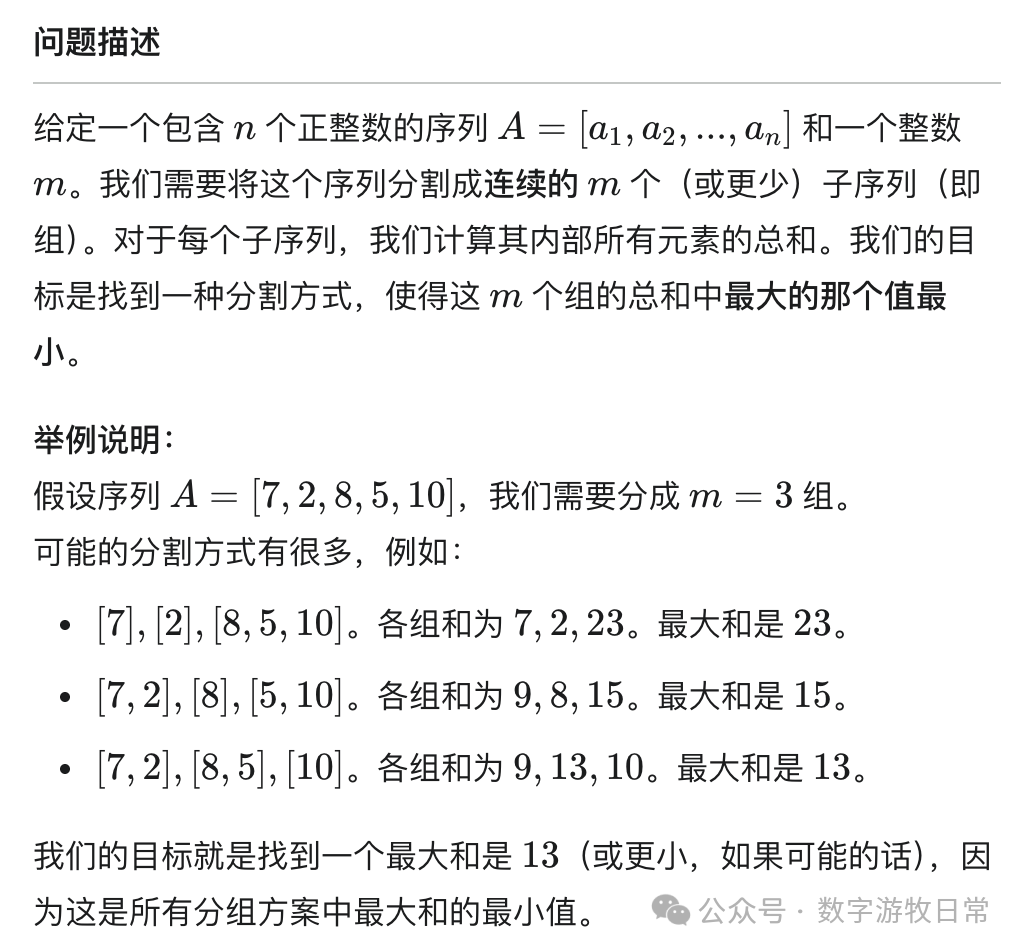

Let's start with a classic mathematical (or algorithmic) problem:

If we are confident in our mathematical abilities, our instinctive reaction is to "abstract" the problem into some mathematical formula. If we can write simple programs but haven't had long-term training in algorithms, we might quickly think of this solution:

- Use combinatorics to list all possibilities for dividing n numbers into m groups: essentially the stars and bars problem (placing m−1 dividers in the n−1 gaps between n balls);

- Loop over all C(n−1, m−1) divisions and, for each one, find the maximum among its m group sums;

- Take the minimum of all those maximum values.

The cost is O(C(n−1, m−1)·n), which grows exponentially in the worst case (for example, when m is close to n/2). This is the so-called "combinatorial explosion." I used Gemini to generate an animation to demonstrate this.
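The three steps above can be sketched in Python. This is a minimal sketch, assuming the problem in the figure is the classic one of cutting a sequence into m contiguous, non-empty groups so that the largest group sum is as small as possible. The function name `min_largest_sum_brute` and the sample input are illustrative and do not come from the original.

```python
from itertools import combinations


def min_largest_sum_brute(nums, m):
    """Try every way to cut nums into m contiguous, non-empty groups."""
    n = len(nums)
    best = None
    # Choose m-1 cut positions among the n-1 gaps between adjacent numbers.
    for cuts in combinations(range(1, n), m - 1):
        bounds = (0, *cuts, n)
        # Largest group sum for this particular division.
        largest = max(sum(nums[a:b]) for a, b in zip(bounds, bounds[1:]))
        # Keep the smallest "largest group sum" seen so far.
        if best is None or largest < best:
            best = largest
    return best  # None if m > n, because no valid division exists


print(min_largest_sum_brute([7, 2, 5, 10, 8], 2))  # 18
```

The loop body runs exactly C(n−1, m−1) times, which is where the combinatorial explosion comes from.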

Even without considering algorithmic complexity, solving the above problem still requires decent mathematical knowledge and a solid programming foundation.

However, this solving process aligns with human instinctive reactions and ways of understanding and thinking about problems.

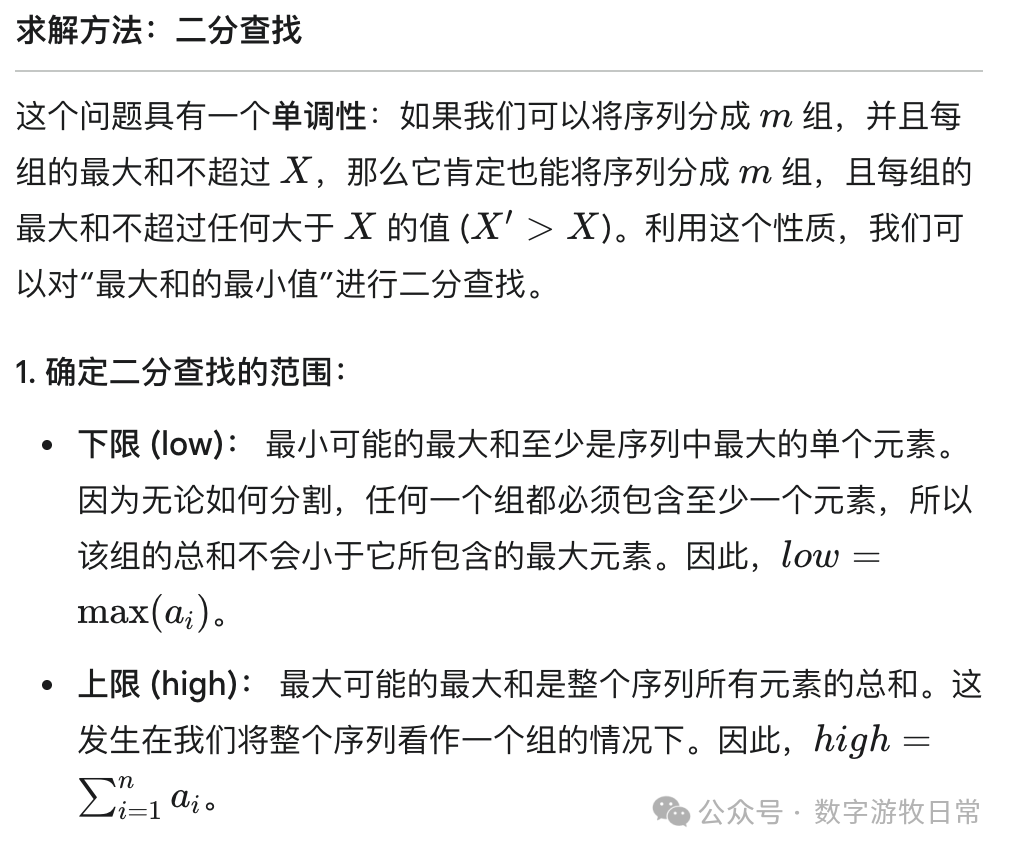

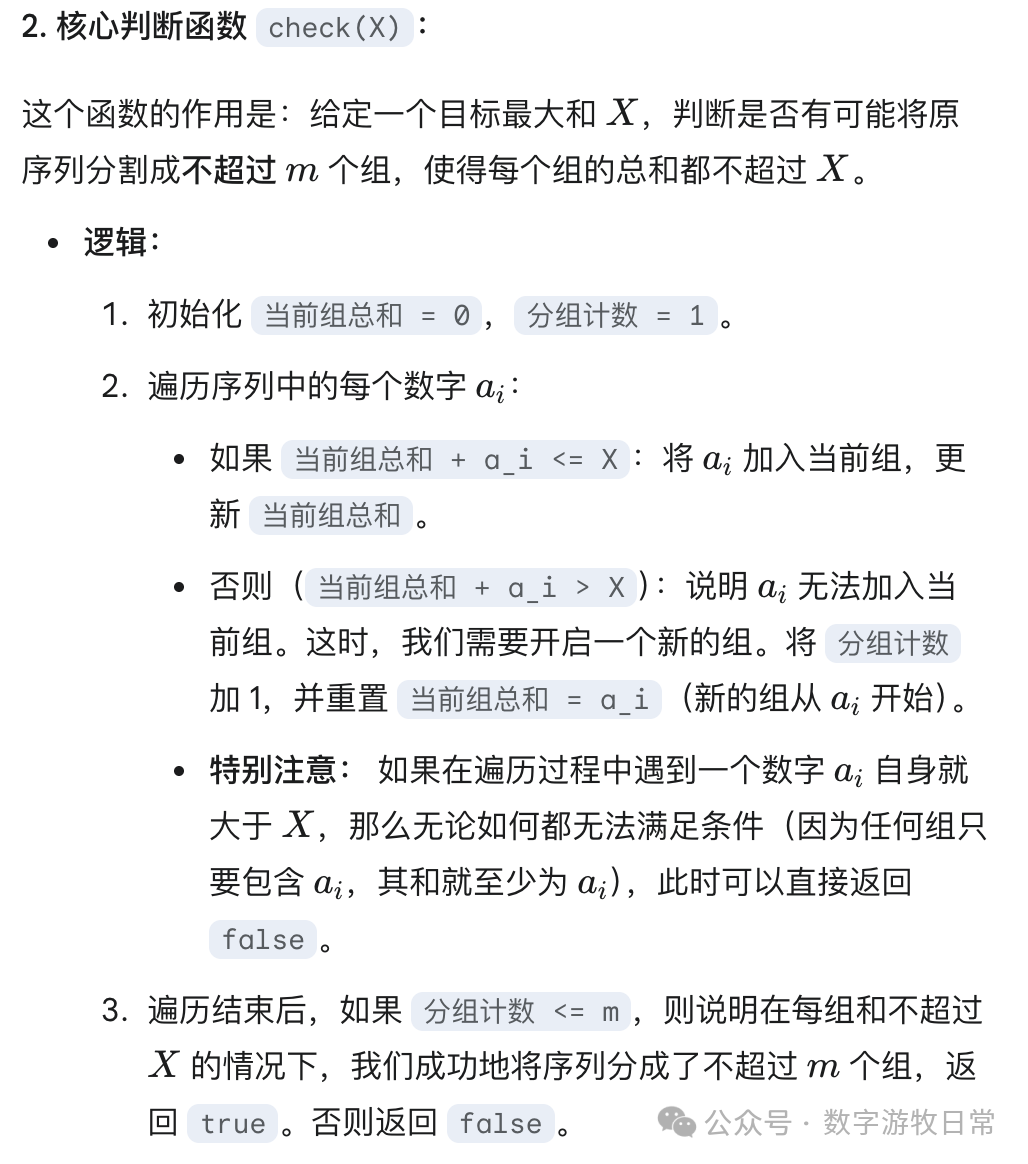

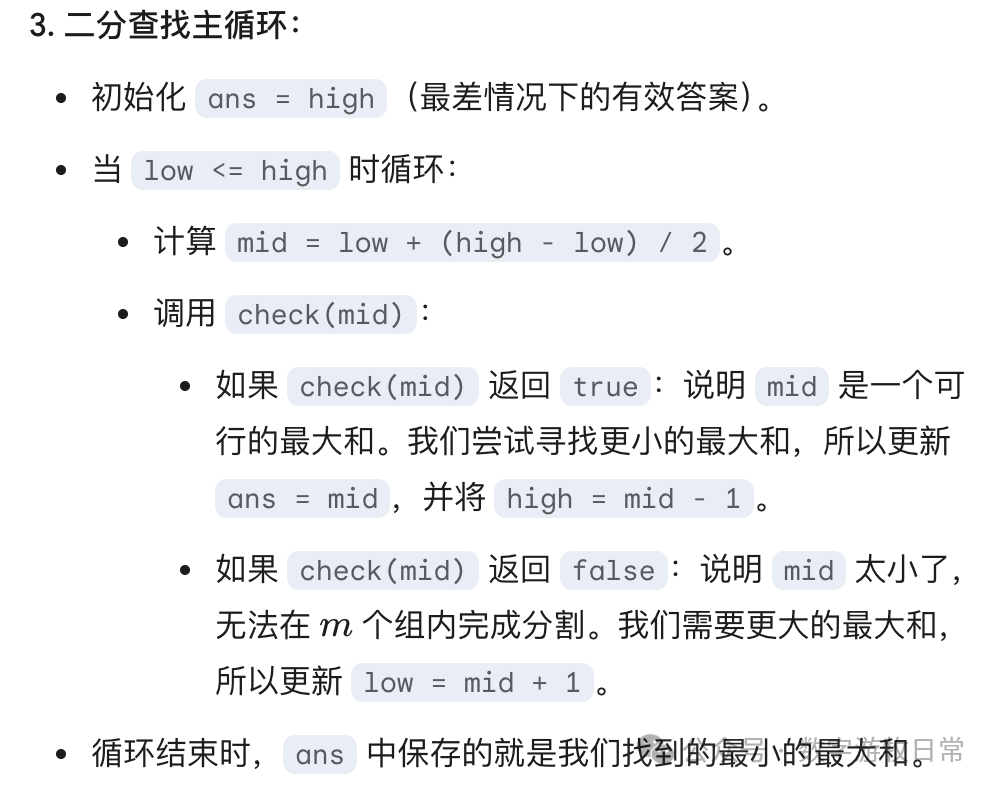

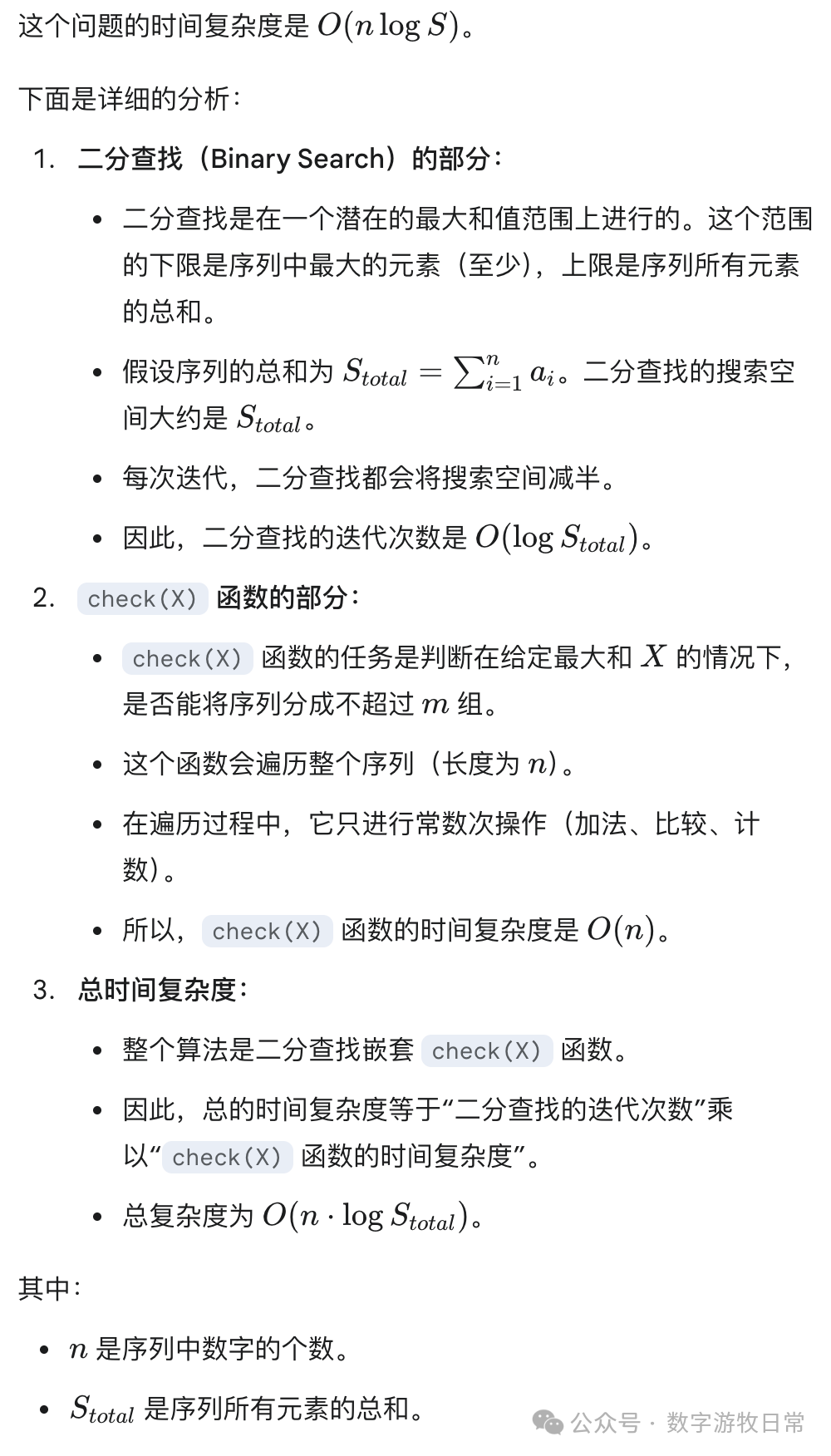

Is there a better solution? Naturally, the answer is binary search.

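First, we know the answer must exist and lies between the maximum of the n numbers and the sum of the n numbers. Then we repeatedly test the midpoint of that range: if the numbers can be divided into m groups without any group sum exceeding the midpoint, the answer is the midpoint or something smaller; otherwise it must be larger. Each test halves the range, until only one value remains.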

Represented as an animation, the process looks like this:

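It's quite abstract, but its algorithmic complexity is very low: the number of tests grows only with the logarithm of the gap between the sum and the maximum, and each test is cheap.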
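Here is a minimal sketch of the binary search in Python. It assumes the problem is to cut a sequence into m contiguous, non-empty groups while minimizing the largest group sum, and that the inputs are non-negative integers with 1 ≤ m ≤ n. The names `can_split` and `min_largest_sum`, and the greedy feasibility check itself, are illustrative choices rather than something the original spells out.

```python
def can_split(nums, m, limit):
    """Greedy check: do at most m contiguous groups suffice if no group may exceed limit?"""
    groups, running = 1, 0
    for x in nums:
        if running + x > limit:
            # Adding x would overflow the current group, so start a new one.
            groups += 1
            running = x
        else:
            running += x
    return groups <= m


def min_largest_sum(nums, m):
    """Binary search on the answer between max(nums) and sum(nums)."""
    lo, hi = max(nums), sum(nums)
    while lo < hi:
        mid = (lo + hi) // 2
        if can_split(nums, m, mid):
            hi = mid       # mid is feasible: the answer is mid or smaller
        else:
            lo = mid + 1   # mid is too small: the answer must be larger
    return lo


print(min_largest_sum([7, 2, 5, 10, 8], 2))  # 18
```

Each call to `can_split` is a single pass over the numbers, and the loop runs roughly log2(sum − max) times, so the total work is about O(n·log(sum − max)).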

- Often, the human brain's "intuitive" way of solving problems differs from a computer's algorithm;

- In the two solutions above, the first requires us to establish a basic mathematical model (combinatorics), followed by an intuitive but computationally heavy process. The second requires no mathematical model-building at all (only the belief that a solution exists), followed by an "abstract" programmatic implementation;

- While the second solution is clearly lower in computational cost, I believe if done by the human brain, within a certain range, the second solution is much more "brain-burning." Why a certain range? Because when n and m are large enough, enumerating all possibilities will also consume massive mental energy.

Though the first approach is more intuitive, in real-world scenarios a typical programmer would rather spend an hour coding plus an hour debugging than spend a few extra minutes on preliminary mathematical modeling (combinatorics?). This is despite the fact that those few minutes could cut the actual problem-solving time to mere minutes, and that such problems are unlikely to recur often.

The first solution is traversal (brute force)—intuitive but "stupid" (actually, combinatorics isn't stupid at all, but you know what I mean, right?). The second solution is iteration (recursion)—abstract but "beautiful" (because you're watching the program do complex work rather than simple loops—you catch my drift, right?).

Alright, that’s probably the longest and most "brain-burning" opening I've written recently. The reason is simple: after spending a long time (mostly spent accompanying the model; I wasn't brain-burned at all and could do other things simultaneously), I finally completed a project that is "huge" for any model—under the premise of "not writing a single line of code, and providing no content intervention or hints."

Looking at the pile of "garbage" I (or rather, the model) produced, three thoughts struck me, corresponding to the three parts of today's title: 1) Our world is an iterator; 2) We are always searching for answers through constant "binary search iteration"; 3) Abstract "iteration" is how models are constantly replacing the human brain. (A note on "garbage": I recently used this term in a report on a candidate during an internal interview. I meant it neutrally, but such a critique was clearly inappropriate, so if you happen to see this, my apologies.)

Let me briefly explain the process of "guiding" the model through arduous effort to produce this pile of "garbage":

- For every company needing research, search the entire web and extract personalized prompts for Deep Research;

- Iterate through Deep Research with those prompts to generate a preliminary report;

- Iterate to screen for the "optimal" report;

- Iterate to optimize every report based on the "optimal" one;

- Iterate again to perform "search + optimization" for each optimized report;

- Iterate to extract a knowledge graph;

- Convert the knowledge graph into prompts for daily updates;

- Haven't thought that far yet...

Actually, I could keep iterating, but the model's capabilities don't support it:

- Context length is far from enough;

- Memory capability is poor, again due to insufficient context length;

- Output stability and consistency are lacking;

These factors mean I must carefully control the iteration steps. As soon as I notice the output "deteriorating," I set a checkpoint and restart.
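The checkpoint-and-restart habit described above can be sketched as a small loop. This is only an illustration under assumptions: `step` (one round of model refinement) and `score` (some way of judging output quality) are hypothetical stand-ins, as are `max_steps` and `max_restarts`. The actual process was driven by hand, not by this code.

```python
def iterate_with_checkpoints(draft, step, score, max_steps=20, max_restarts=3):
    """Refine draft step by step, falling back to the last good checkpoint on deterioration."""
    checkpoint, best = draft, score(draft)
    restarts = 0
    for _ in range(max_steps):
        candidate = step(draft)
        quality = score(candidate)
        if quality >= best:
            # Output held up or improved: advance the checkpoint.
            checkpoint, best, draft = candidate, quality, candidate
        else:
            # Output deteriorated: discard it and restart from the checkpoint.
            restarts += 1
            if restarts > max_restarts:
                break
            draft = checkpoint
    return checkpoint


# Toy stand-ins: "quality" rises until 5, then every further step makes it worse.
result = iterate_with_checkpoints(
    0,
    step=lambda x: x + 1 if x < 5 else x - 1,
    score=lambda x: x,
)
print(result)  # 5
```

With a real model, a restart would typically mean opening a fresh session seeded with the checkpointed output, which is what keeps the limited context from filling up.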

Through this, I finally obtained a set of "daily search terms" for several companies. This is the first instance of "garbage": these terms or prompts aren't wrong and do capture the key points, but they aren't as good as what human researchers would produce by hand. I estimate that five average human researchers, "hired" to work for three days at a relaxed pace, could exceed this level.

Of course, even with "garbage in, garbage out," one must see if the subsequent workflow is functional (models will improve, but the iteration process remains relatively fixed).

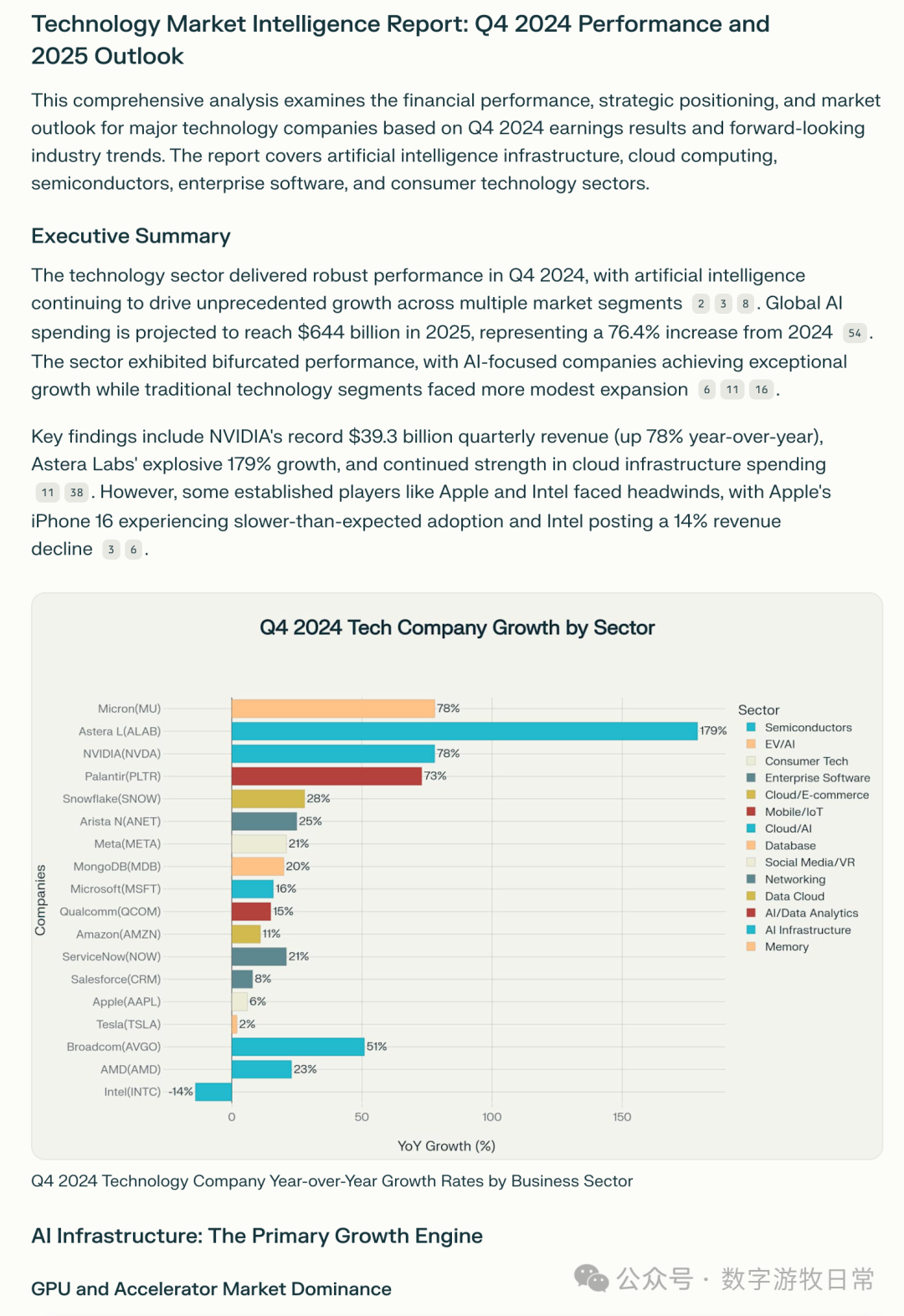

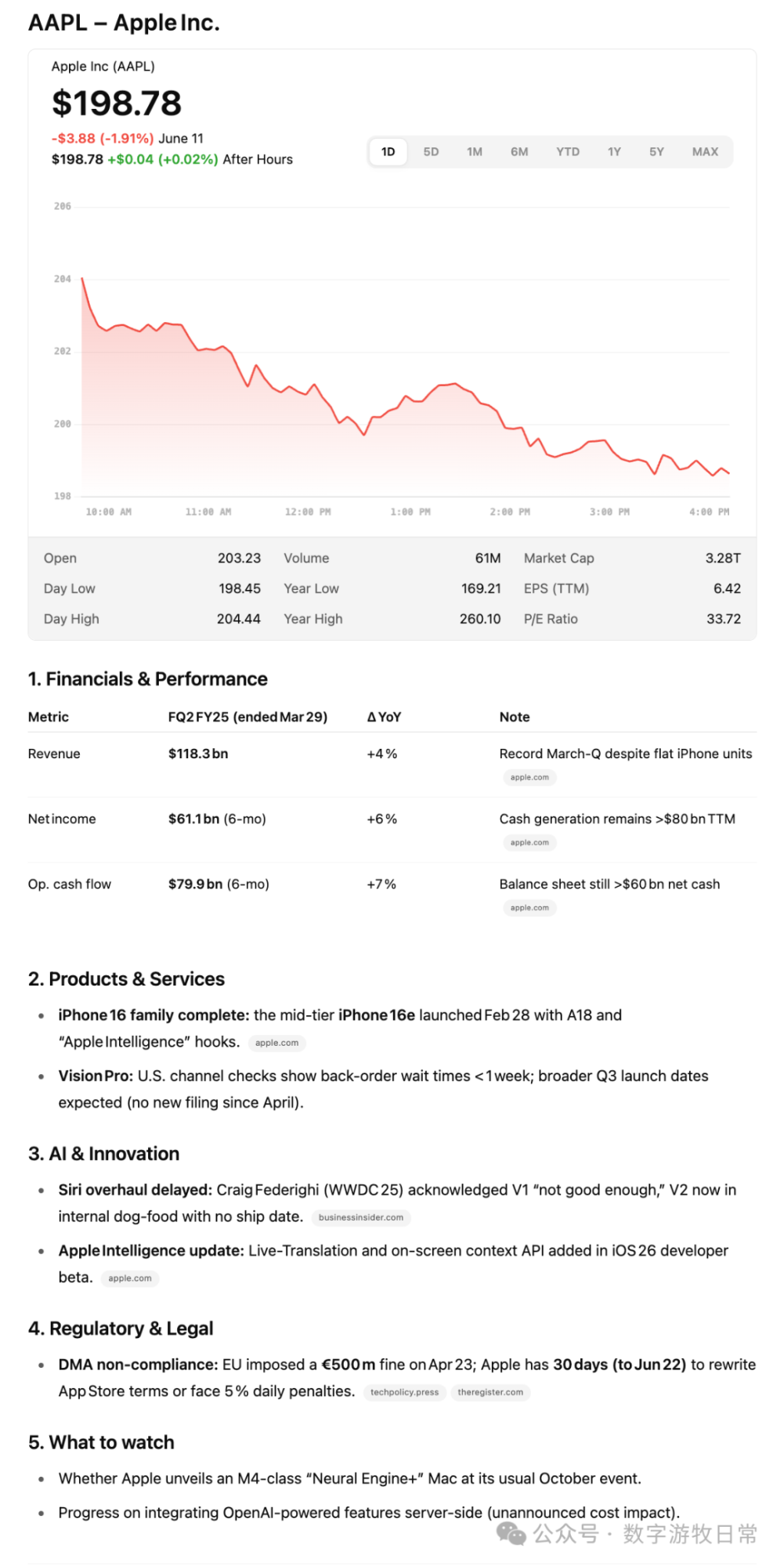

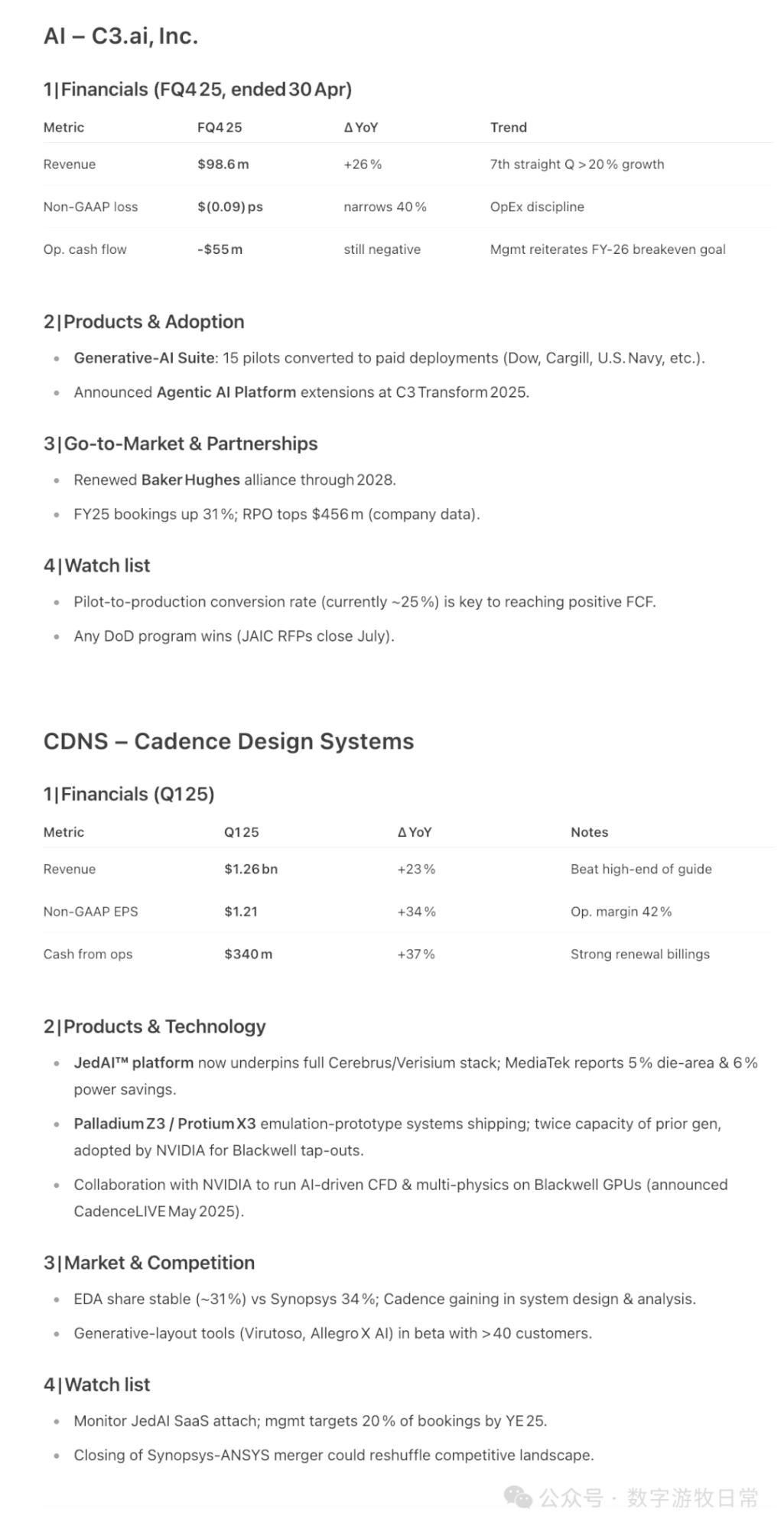

Passing the generated template to Perplexity Labs yielded these results:

Of course, Perplexity did more work in the background:

The input contained templates for dozens of companies, and it returned 18. In absolute terms that is not enough, but judged by the pace of development over time, such results are improving fast enough.

That said, this is an Agent, and the work above is traversal (traversing prompt templates), not iteration. (Does nitpicking over terms matter? Yes, and it's very important.)

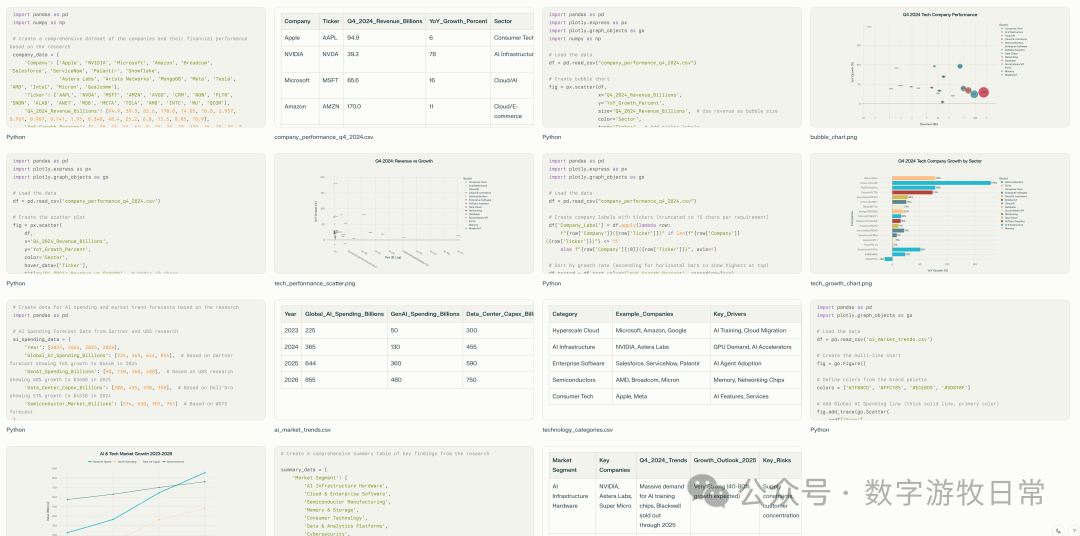

Since OpenAI has hyped o3-pro to the heavens, claiming the model can directly integrate thinking, searching, tool calling, and Python code, I decided to try it and see the results.

A batch can return results for three or four companies. The one about Apple looks like this:

There are other companies I won't list one by one.

Limited by context length, o3-pro proposed a plan under which the user can ask it to continue.

I quite like this summary; it did indeed do these things. Then, as I did with Gemini, I entered: "Go on."

A corner-cutting "blob" came back, in typical ChatGPT style. Of course, in the Advanced Voice Mode updated in late May, if a user asked it to say "A" two hundred times, it would actually do it. In the latest updates, it politely refuses.

A polite refusal. It leaves you wondering if the model really can't do it, or if it just really can't do it.

Jokes aside, o3-pro's output is generally satisfactory, and certainly more useful than that of Perplexity Labs. The key is "iteration": a type of iteration that theoretically allows the user to mindlessly "go on" indefinitely.

As I am about to finish this article, o3-pro is still "iterating."

I enjoy this feeling of the human brain being "replaced" by AI because, from the start, we have been solving problems in different ways:

One is concrete traversal after abstraction;

The other is abstract iteration after concretization.

Having finished writing this, I realize this piece is also a bit... abstract.