I originally planned to write a review of Perplexity's Labs feature, which is similar to Manus, after using it for a while. While organizing my thoughts and materials, however, I realized there was much more to cover, so this article has two parts: a functional evaluation and some observations on AI applications.

Let's start with Part One: Perplexity's Labs feature.

This isn't exactly a brand-new feature; the update reached my account back in May, so this review is relatively late.

The interface is simple: a "Labs" tab now sits next to the existing "Search" and "Deep Research" tabs. In terms of positioning, it is almost identical to Manus, which went viral earlier this year.

Since its release, the feature has clearly been iterating and evolving constantly. This is what I love most about Perplexity: every few days, it feels like it has "improved again."

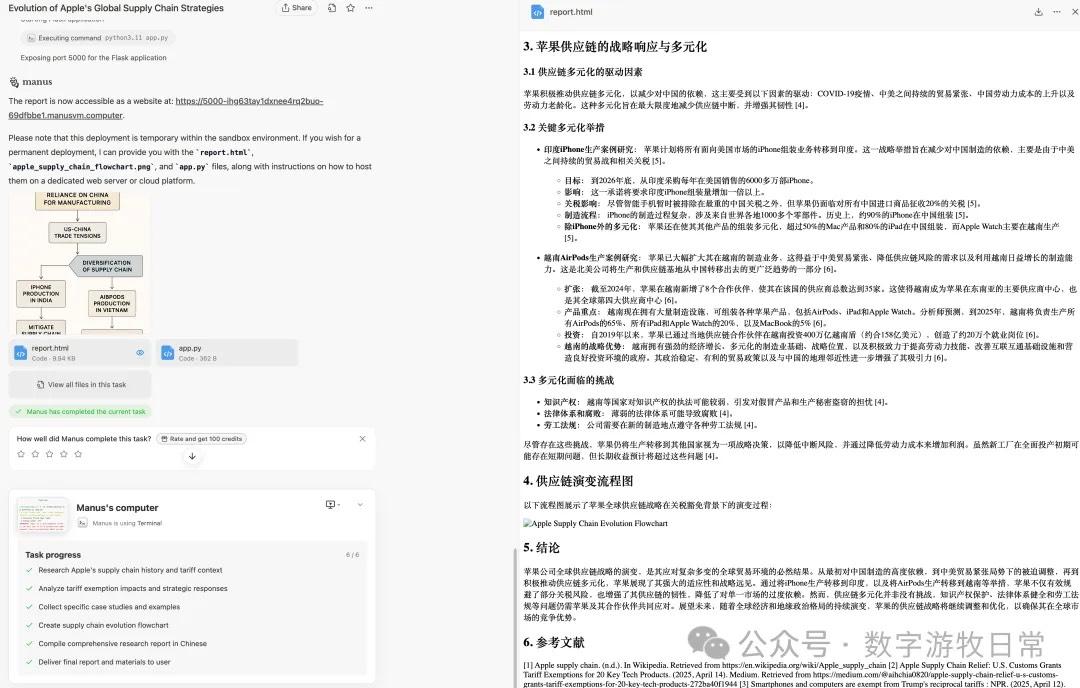

The fundamental difference from "Deep Research" is this. Deep Research is purely a diligent information retrieval and summarization bot (some vendors categorize it as an agent, but since it is often a relatively independent model, "bot" may be more appropriate), and its outputs are mostly raw material. "Labs," by contrast, aims directly at the final deliverable: a report (Word, Markdown, web page, PDF, etc.), a slide deck, or something else. It is a standard multi-agent setup that leans heavily on combining search with code generation, using code to produce the final presentation.

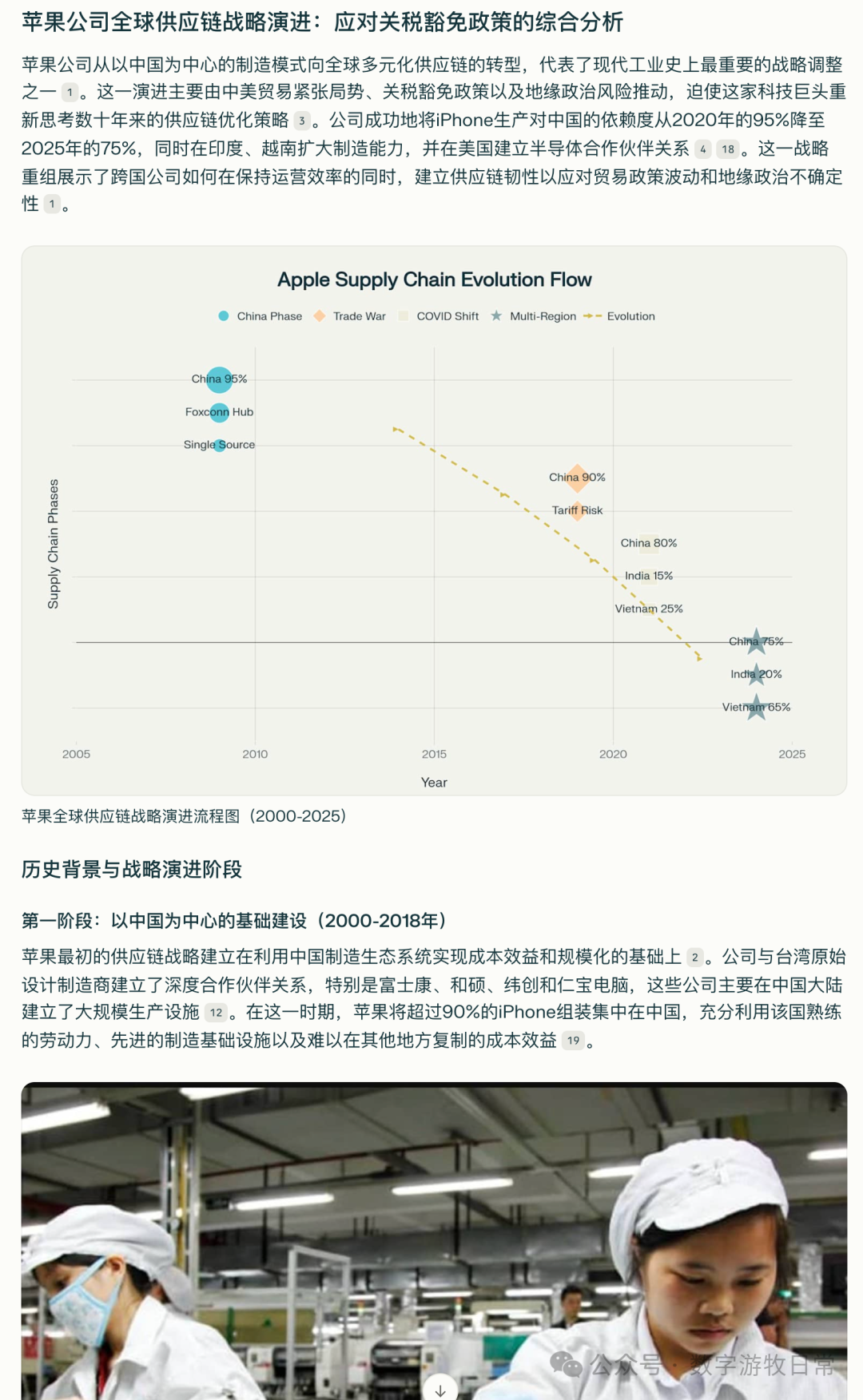

Example: The migration of Apple's supply chain.

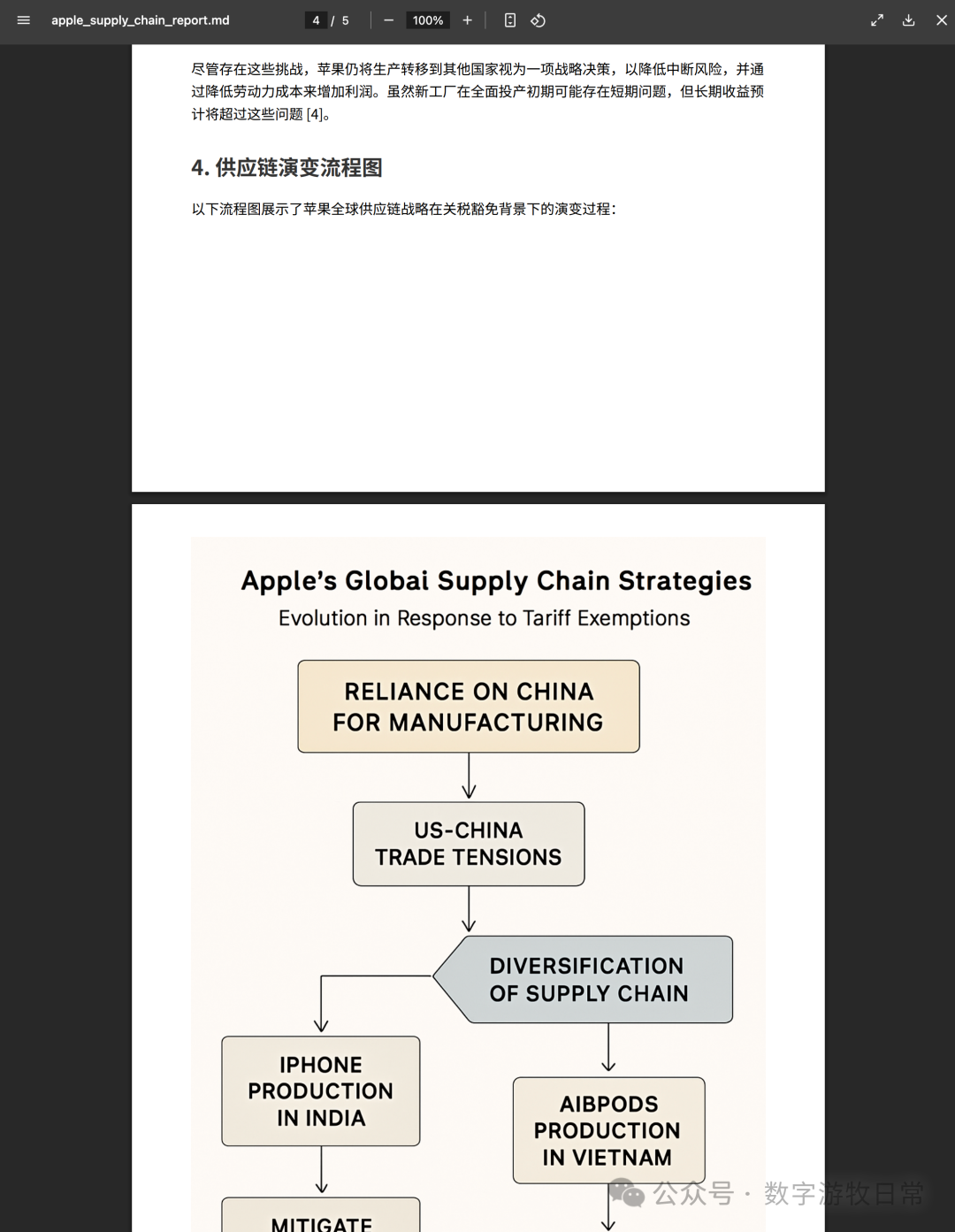

The final result is a typical analytical report rich with text and images, featuring both searched images and charts generated via code.

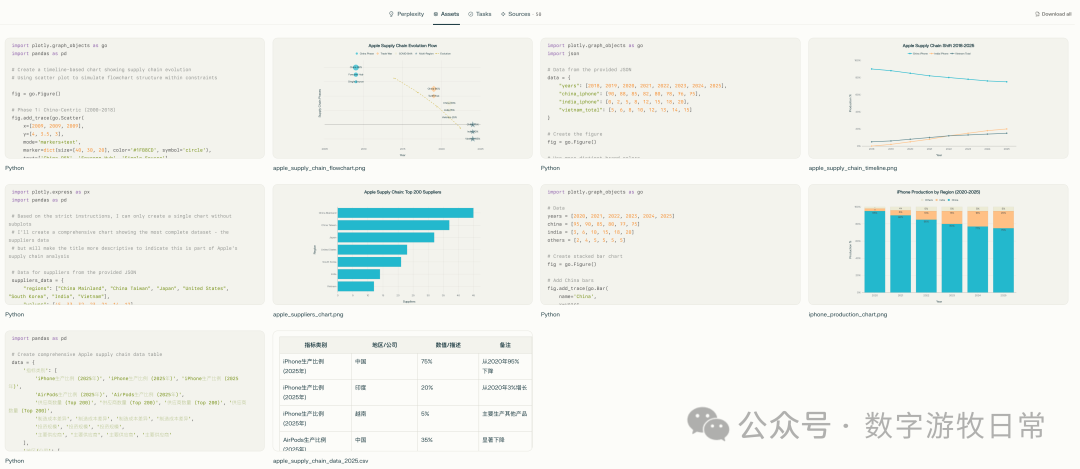

The supporting materials are also complete. Beyond source links, it provides all the code and generated charts under "Assets."

The code is all Python, which suits traditional report formats that rely on static images.

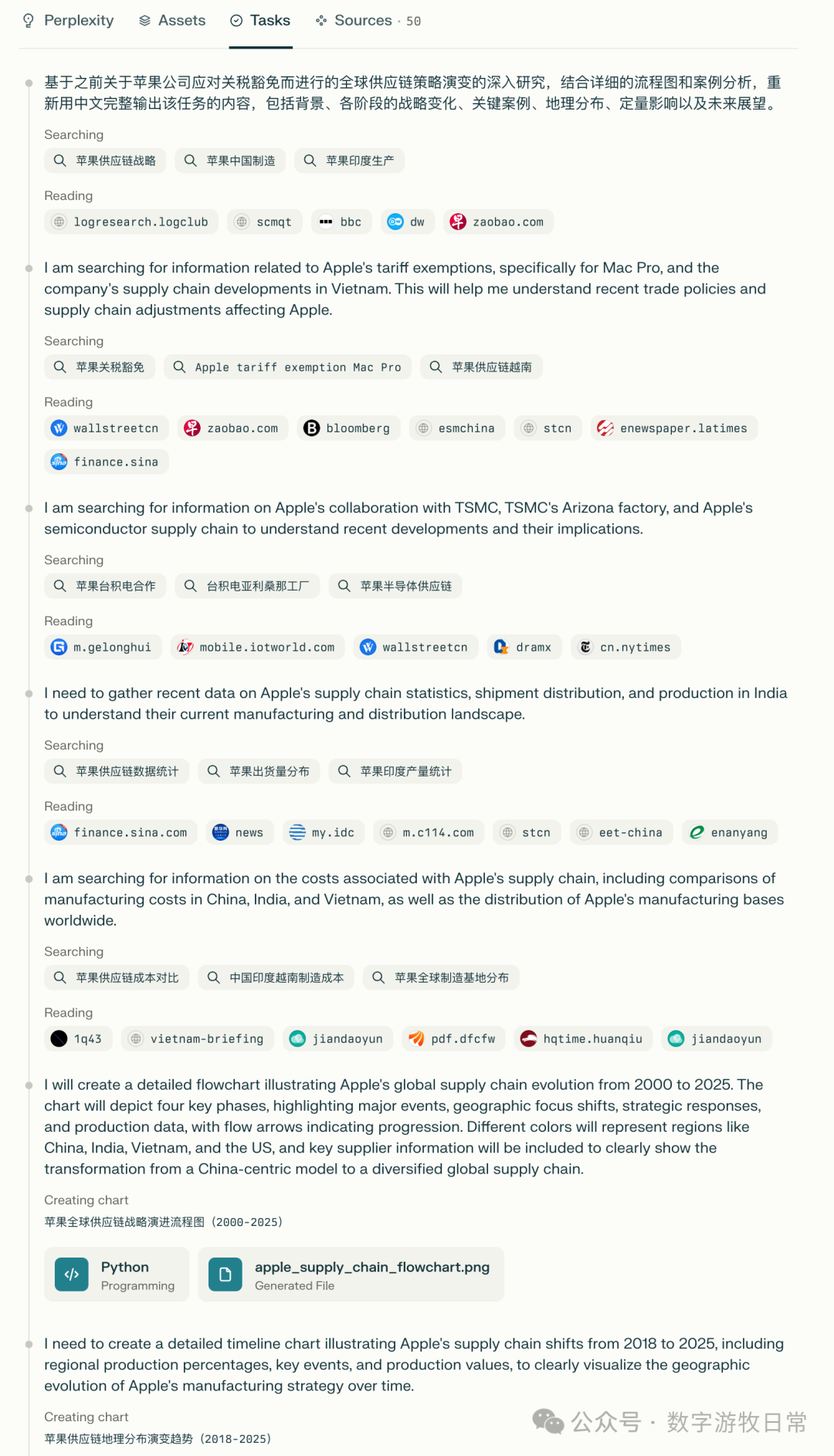

The execution process is also transparent:

Results can be exported into the following formats:

Here is the shareable Page link:

Apple Supply Chain Migration

https://www.perplexity.ai/page/ping-guo-gong-si-quan-qiu-gong-C2coWm5EQjWpUAeZdJBtcA

However, once the result is shared as a Page, the code execution output and the code-generated images disappear. Perplexity likely still needs to integrate its own features better here.

Setting aside factual and numerical accuracy (details must be checked one by one, and Perplexity's track record here isn't perfect), the maturity of the "Labs" feature and the quality of its final deliverables are excellent. Compared with the launch version, the content is much richer. Judged on the result alone, I would give it a subjective score of 70-75; considering it was generated in minutes, I'd bump that to an 85- (A).

To be fair, I also checked the current capabilities of Manus by giving it the same task when I started writing this article. Twenty minutes later it was still working, and along the way it needed my manual help to access Reuters (which failed anyway).

It is still working, completing the text portion before adding images and other data.

It feels a bit like watching a story unfold.

Undoubtedly, Perplexity Labs currently crushes Manus. You cannot cut corners with professional tasks. "Computer Use" and "Browser Use" look cool, but if you invest in the wrong "skill tree" or rely too heavily on "borrowed" skills, the results are lackluster. I believe the Manus team can create better products, but that’s a conversation for another time.

The final version of the Manus result was a five-page PDF report. The charts were generated using text-to-image features, resembling GPT-4o output. Wouldn't it have been better to just generate Python code?

Of course, it deserves another chance, as there is a "Create Webpage" button.

That turned out to be a mistake on my part. Let's move on.

Perhaps this was just an example Manus isn't suited for; it doesn't mean it fails in other scenarios.

Back to Perplexity Labs.

As mentioned, the rating is quite high.

In fact, Perplexity has focused on content ever since its launch: Discover, Page, Finance, Travel, Academic, and now Labs. I first liked the product because it let me split my search habits (using Perplexity for gossip and daily trivia keeps my Google searches focused), and later because it keeps improving.

Now it has prompted me to reconsider the landscape of AI applications. For simplicity, here is my reasoning, reorganized as a chain:

- Scenario: This year, my desk time has increased significantly. The reason is simple: the emergence of Deep Research and the massive leap in AI coding capabilities (marked by Claude 3.5).

- Inference Demand: The surge in inference demand, along with Anthropic's rapid revenue growth through Claude, also roughly lines up with the Claude 3.5 milestone.

- Screen Time: Spending more time in front of a monitor than a phone may not be just my experience but a broader trend.

- Social Needs vs. Information Retrieval: Social needs have dropped noticeably. This issue was discussed 30 years ago when home computers became popular. I believe social interaction motivated by "getting information" or "completing tasks" has been heavily compressed by the rise of AI search and AI coding.

- Data: Global PC sales (including laptops) are around 250 million units a year, while smartphone sales exceed 1.2 billion. Although PC sales growth has turned positive thanks to AI, the PC scenario remains much smaller than the mobile one, and measured by screen time it may be smaller still.

- Mobile Time: What to do with the extra phone screen time? Is the guilt of "mindless scrolling" fading? But we still want to see "real people," right?

- The Narrowing Window for AI Apps Outside Base Models: Practice reinforces my long-held view: the opportunity for the AI application layer is huge, but the opportunity for independent apps is shrinking. Why? 1) As the data above shows, the desk-based user base is smaller than the mobile one. 2) Because the scenario is "desk-based," users have more time to customize processes and results, so even features that perform well, like Perplexity Labs, struggle to drive significant paid conversions. 3) AI models are becoming the apps themselves. ChatGPT, Gemini, and Claude are turning into complete applications (integrating tools, search, and agents). They may move more slowly than third-party apps like Perplexity, but their core strength lies in "model capability."

- Coexistence: AI-based production scenarios and mobile internet app scenarios will likely run in parallel for a long time, shaped by both model characteristics and human physiological and psychological limits. This is actually a good thing.
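
To put the unit-sales figures from the "Data" point in proportion, here is a small arithmetic sketch. It uses only the two approximate numbers quoted there. The constant and function names are illustrative, and unit sales are at best a rough proxy for user base or screen time.

```python
# Approximate annual unit sales as quoted in the "Data" point of this article.
PC_UNITS_PER_YEAR = 250_000_000            # "around 250 million"
SMARTPHONE_UNITS_PER_YEAR = 1_200_000_000  # "exceed 1.2 billion", treated as a lower bound


def unit_ratio(smartphones: int, pcs: int) -> float:
    """Return how many smartphones are sold for each PC sold."""
    return smartphones / pcs


def pc_share(smartphones: int, pcs: int) -> float:
    """Return PCs as a fraction of combined PC and smartphone unit sales."""
    return pcs / (smartphones + pcs)


ratio = unit_ratio(SMARTPHONE_UNITS_PER_YEAR, PC_UNITS_PER_YEAR)
share = pc_share(SMARTPHONE_UNITS_PER_YEAR, PC_UNITS_PER_YEAR)

# Smartphone sales are a lower bound, so the ratio is a floor and the share a ceiling.
print(f"Smartphones per PC: at least {ratio:.1f}")
print(f"PC share of combined units: at most {share:.1%}")
```

With these inputs, that is roughly 4.8 smartphones sold for every PC, with PCs making up about 17% of combined units.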

For me, the scenarios, models, and apps I use frequently are becoming more focused. If this is representative, it means the window of opportunity for third-party apps outside of the core models is getting smaller and smaller.