Within a single week, different people asked me several questions about timing:

"When will investment research be completely overturned?" I thought for a moment and cautiously said two years. In fact, massive changes have already begun.

"When will robots be able to care for the elderly at home?" I thought for a moment and confidently said five to ten years. Perhaps the ultimate solution isn't something we can linearly extrapolate right now.

"When will AGI (Artificial General Intelligence) appear?" I thought for a moment, and the answer might be: isn't it already here? It's just not "smart" enough yet, but "generalization" has already begun.

Many believe that the most important thing in the AI field in 2025 is Agentic AI. In the Chinese-speaking world, "Agent" is commonly translated as "Zhìnéngtǐ" (Intelligent Entity). I have always found this strange, though I couldn't quite put my finger on why.

However, the book mentioned above is clearly discussing something that has no direct relationship with what we understand as "intelligence" in Chinese.

"Agency" is more accurately translated as "Néngdòngxìng" (Agency/Activity). Therefore, an "Agent" should not be called an "Intelligent Entity," but an "Active Entity" (Néngdòngtǐ): a subject capable of working autonomously.

So, it doesn't actually need "intelligence," does it? It just needs to be able to "work actively."

Today's AI models primarily achieve two functions:

Generation: Completing tasks automatically by continuously "predicting the next token." Currently, the most valuable application is code generation: producing hundreds of lines of executable code in minutes, something no human can match.

Search: Searching through knowledge "compressed" during model training is a form of search; searching information on the internet is a form of search; and searching data and materials uploaded by users is also a form of search. But search is not just a means of obtaining information; it is a way of "borrowing a brain." For someone like me who is unfamiliar with front-end development, implementing an interactive page isn't impossible, but it would take a lot of study time. Given limited time and energy, this skill isn't a priority for me. Now, through the "compressed" code and knowledge of "front-end masters" within a model, I can "borrow a brain" to implement the required front-end functions in just a few minutes.

Yes, generation and search feed into each other, iterating to form what is, in theory, a "perpetual motion" active entity.

I've spent seven hundred words on this "prologue" because, for a long time, when thinking about the distant future—no matter how many variables are involved—I always seem to return to this starting point. Thus, as a foundation, it is perhaps appropriate.

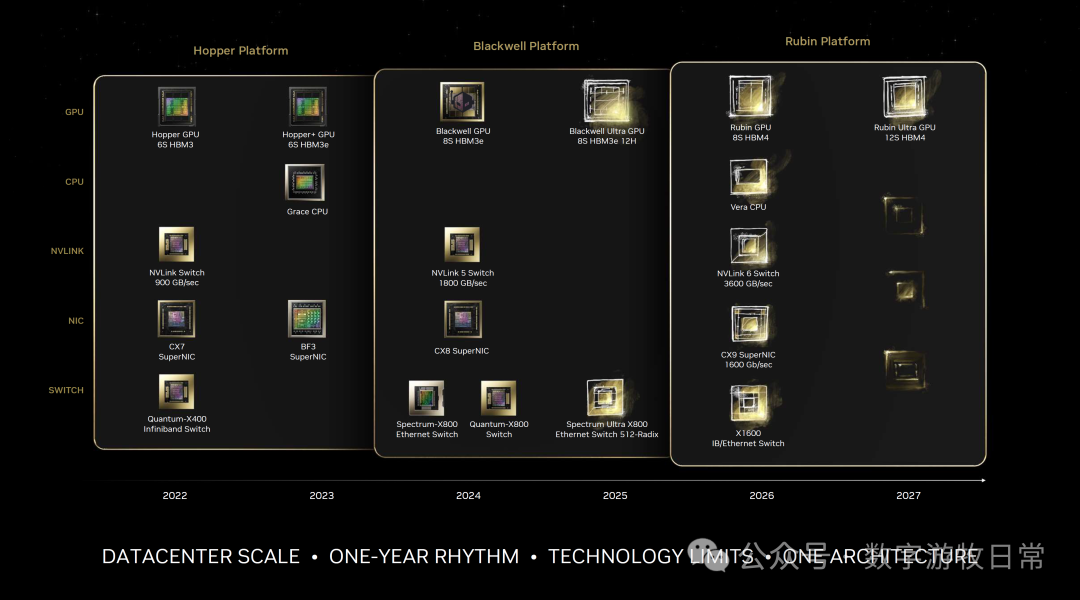

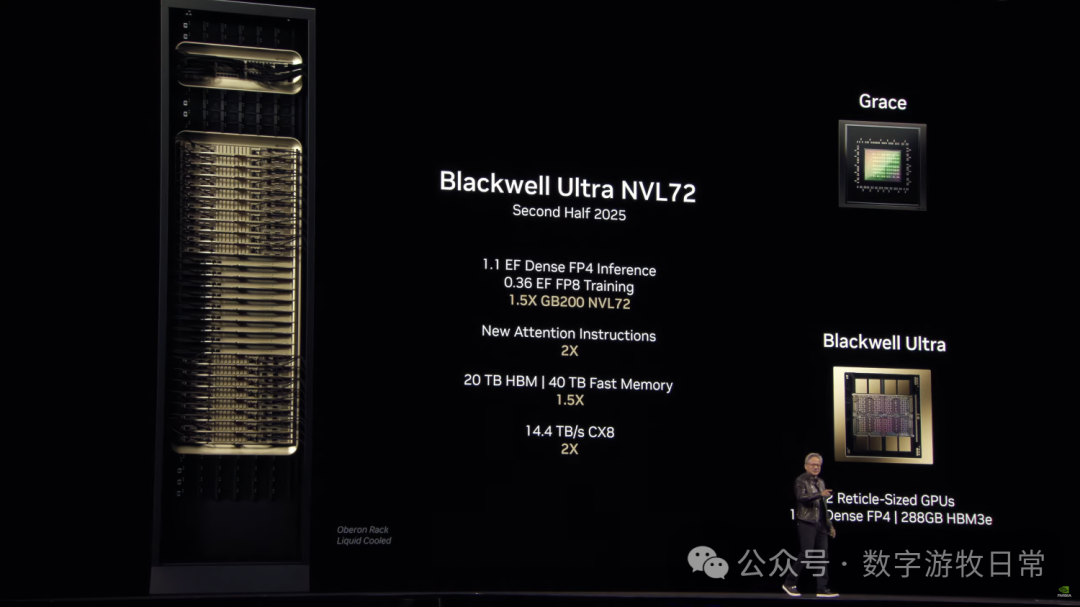

Another important piece is the NVIDIA GTC 2025 Keynote delivered by Jensen Huang early this morning. This is the third year since the release of ChatGPT, and coverage of this annual event is now nearly exhaustive. Therefore, a complete review is not the goal of this article; I will only highlight the "roadmap" mentioned in the speech.

Yes, the shipping time for the next-generation architecture, Rubin, has been delayed to Q2 2026. If we look at the experience of Blackwell from announcement to delay, the Q2 2026 expectation might also fall through. What I've been saying since the second half of last year—that "technical challenges are disrupting product rhythms"—has shifted from an expectation to a solid reality.

However, every chip company will encounter all the technical challenges NVIDIA faces today—whether they are developing in-house chips or other independent chips.

But, from the perspective of a complete system (large-scale compute clusters), there are still only two "truly usable" solutions: NVIDIA's general-purpose GPUs and Google's TPUs.

So, at this third GTC after ChatGPT, no matter how awkward Jensen Huang's two-hour speech might be, the product roadmap actually provides the hardware or "AI Infra" constraints and "predictability" for Scaling in the coming period. Therefore, for the next three to five years, NVIDIA may be the company with the strongest visibility; for everything else, it's just a matter of market perception.

Yes, under this stable expectation, we can see that if all goes well, by the second half of 2027 single-node computing power will have grown by 1.5 × 3.3 × 14 = 69.3 times compared with the GB200 NVL72 currently being deployed, compounding the year-over-year gains on the roadmap.
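As a quick check on the multiplication above, here is a minimal sketch that compounds the three multipliers quoted in the text. The numbers are this article's own figures; the names `step_gains` and `cumulative_gain` are hypothetical, and the sketch says nothing about which product generation each factor belongs to.

```python
from math import prod

# Per-step multipliers as quoted in the article (the author's figures,
# taken at face value; the variable name is purely illustrative).
step_gains = [1.5, 3.3, 14.0]


def cumulative_gain(gains):
    """Compound a sequence of multiplicative gains into one overall factor."""
    return prod(gains)


total = cumulative_gain(step_gains)
print(f"{total:.1f}x")  # prints 69.3x
```

Because the gains multiply rather than add, the result is very sensitive to each factor: if any one multiplier is stated against a different baseline than assumed, the overall figure shifts proportionally.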

The significance of the single node is that it allows large clusters (within data centers) to accommodate more computing power in the same area. So, looking at it conservatively, by 2028 or 2029, we can expect a hundredfold growth in the computing power for frontier models.

Simply put, without any optimization, we can compress data a hundred times (efficiency aside), or make other trade-offs for the sake of performance and efficiency. However, within roughly five years, we can see that hardware will still provide us with a hundredfold increase in capability.
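To get a feel for what "a hundredfold in roughly five years" implies, the sketch below converts it into an equivalent yearly factor and a doubling time. It assumes smooth, constant compounding, which is an assumption of this example only: real hardware arrives in discrete product generations. The function names are hypothetical.

```python
import math


def implied_annual_factor(total_gain: float, years: float) -> float:
    """Constant yearly multiplier that compounds to total_gain over `years`."""
    return total_gain ** (1.0 / years)


def doubling_time_years(annual_factor: float) -> float:
    """Years needed to double at a constant yearly multiplier."""
    return math.log(2) / math.log(annual_factor)


# Figures from the text: a hundredfold increase over roughly five years.
annual = implied_annual_factor(100.0, 5.0)
print(f"{annual:.2f}x per year")                                 # prints 2.51x per year
print(f"{doubling_time_years(annual) * 12:.1f} months to double")  # prints 9.0 months to double
```

Under these assumptions, the hardware side alone would roughly double capability every nine months, before counting any algorithmic or engineering gains.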

What about algorithmic optimizations? What about engineering optimizations? What about the innovative directions DeepSeek has been exploring?

In the current "human-in-the-loop" environment, whether it's code generation or deep research tasks, I've already started to fall behind the speed of AI. So, what if its capability increases at least a hundredfold in five years?

Yes, the above is the foundation for the second part I've been considering: that the "singularity" has arrived, expressed concretely through the NVIDIA product rhythm that everyone watches at GTC.

These two parts together form the basis of my answers to the three opening questions. "Predictability" has already told me the answers: two years, five to ten years, and "now."

We can already clearly see that AI's generation and search capabilities have seriously shaken the foundations of software engineering and business research. The experiments I've conducted, the articles I've published, and the videos I've generated over the past period all demonstrate this "status quo."

Since this search and generation realize a form of "brain borrowing" and all its knowledge comes from humans, will individuals move toward being more "closed" to prevent being "borrowed"? This is a matter of opinion, and I actually have my own answer.

If, in the foreseeable five to ten years, we gradually move from "human in the loop" to "AI iterating by itself," what will happen to society? We probably all need to be fully prepared. I believe it won't just be about "intense competition" (Involution).

We are in a world where the digitalization rate is increasing every moment. Everything in the human field of vision that is "physical" will gradually enter the digital realm: transportation—our displacement from point A to point B—will rapidly turn into computation. Work? I'm starting to lose clarity on what this definition means for the future. Knowledge? Same logic...

Ultimately, this is a world of data and computation. Behind the massive computing power and energy consumption, only by changing production can the investment become worthwhile. Thus, this is a B2B story.

But what if that so-called B2B environment is actually composed of us as individuals? How much difference will there be between B and C?

We may be one person, but we might simultaneously use several, dozens, or even hundreds or thousands of models or "Active Entity" Agents. This won't necessarily make us better, but it will certainly change our social relationships.

Ultimately, generation and search have changed software and knowledge.