Apple has finally released the new Mac Studio. Instead of the expected M4 Ultra, it features the M3 Ultra. I suspect the M4 Ultra is being reserved for the Mac Pro. After the "growing pains" of moving from Intel CPUs to its own silicon (though it didn't feel painful at all), Apple is once again prioritizing the workstation needs of professional users. If it doesn't keep up, the software ecosystem it is so proud of could suffer.

However, the biggest surprise is the 512GB of unified memory, rather than the anticipated 256GB. I discussed this with friends for a long time, even drawing comparisons to the 96GB VRAM 4090 cards from Huaqiangbei. Since there isn't more information yet, it's hard to comment further, but it definitely exceeded expectations.

Following the news, many comments suggested this could deploy the full version of DeepSeek R1, making it the best "AI PC" ever. Honestly, where were you all before?
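For a rough sense of what that claim implies, here is a back-of-the-envelope sketch in Python. It assumes the commonly cited figure of 671 billion total parameters for DeepSeek R1, counts weights only (no KV cache or runtime overhead), and uses a hypothetical `usable_fraction` of 0.75 for how much unified memory the model could actually occupy. None of these numbers come from Apple's announcement.

```python
# Back-of-the-envelope check: do a model's weights fit in unified memory?
# Assumptions (not from the announcement): 671e9 total parameters for
# DeepSeek R1, weights only, and a hypothetical usable-memory fraction.

GB = 1e9  # decimal gigabytes


def weight_size_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate size of the weights alone, in GB."""
    return num_params * bits_per_param / 8 / GB


def fits(num_params: float, bits_per_param: float,
         memory_gb: float, usable_fraction: float = 0.75) -> bool:
    """True if the weights fit in the usable share of memory."""
    return weight_size_gb(num_params, bits_per_param) <= memory_gb * usable_fraction


R1_PARAMS = 671e9  # assumed total parameter count

for bits in (16, 8, 4):
    size = weight_size_gb(R1_PARAMS, bits)
    print(f"{bits}-bit: ~{size:.0f} GB, fits in 512 GB: {fits(R1_PARAMS, bits, 512)}")
```

Under these assumptions, only the 4-bit case fits, so "full version" here would realistically mean a quantized model rather than the original precision.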

As someone who was among the first to seriously practice with and recommend Apple for these tasks, this is a good time for me to stay quiet.

The conclusion is simple: this unexpected memory configuration is likely just one piece of Apple's grand plan for the AI era. Their plan is definitely more ambitious than just selling workstations.

Even if their models are late to the party, Apple holds at least two of the three most important elements of the AI era: their own compute power and their ecosystem.

The third element is the model. That brings me to pouring some cold water on Manus, which went viral today.

It is trending because it can produce a finished deliverable with one click, whether a PPT, a report, or something else.

Yes, I am still in the queue for testing. But with so many new products appearing, the vendor's own demo is usually the ceiling of what a product can do.

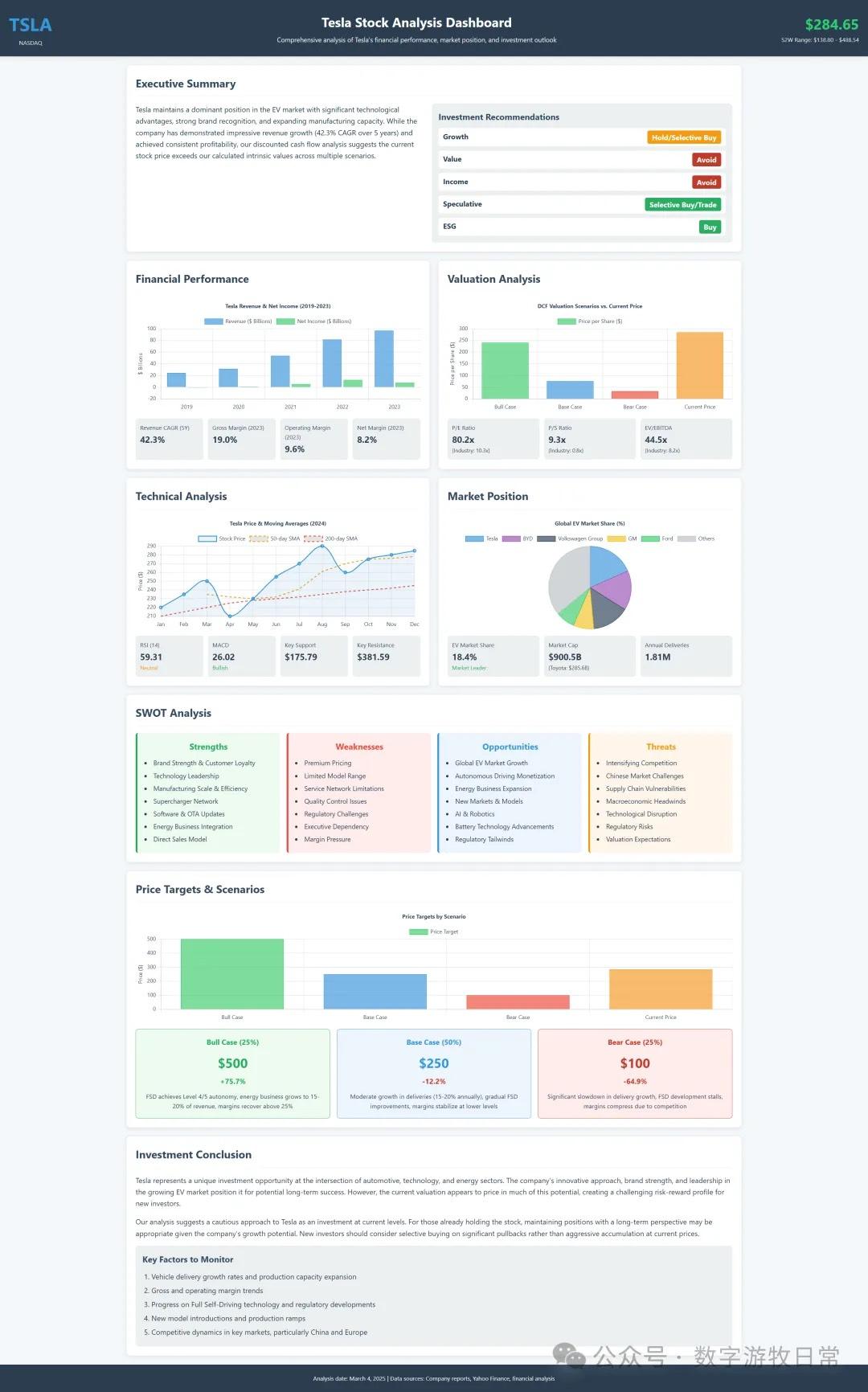

Let’s look at an example from the field I get asked about most: a Tesla report.

Below is a finished report produced by Manus; it looks very nice.

However, this very familiar style immediately reminded me of my own testing workflow: OpenAI Deep Research + Claude.

Indeed, watching Manus's reasoning process shows it’s not directly calling Deep Research, but it is certainly calling a reasoning model (likely one of the major providers). The resulting report's code style and color palette feel identical to the Claude style I know so well.

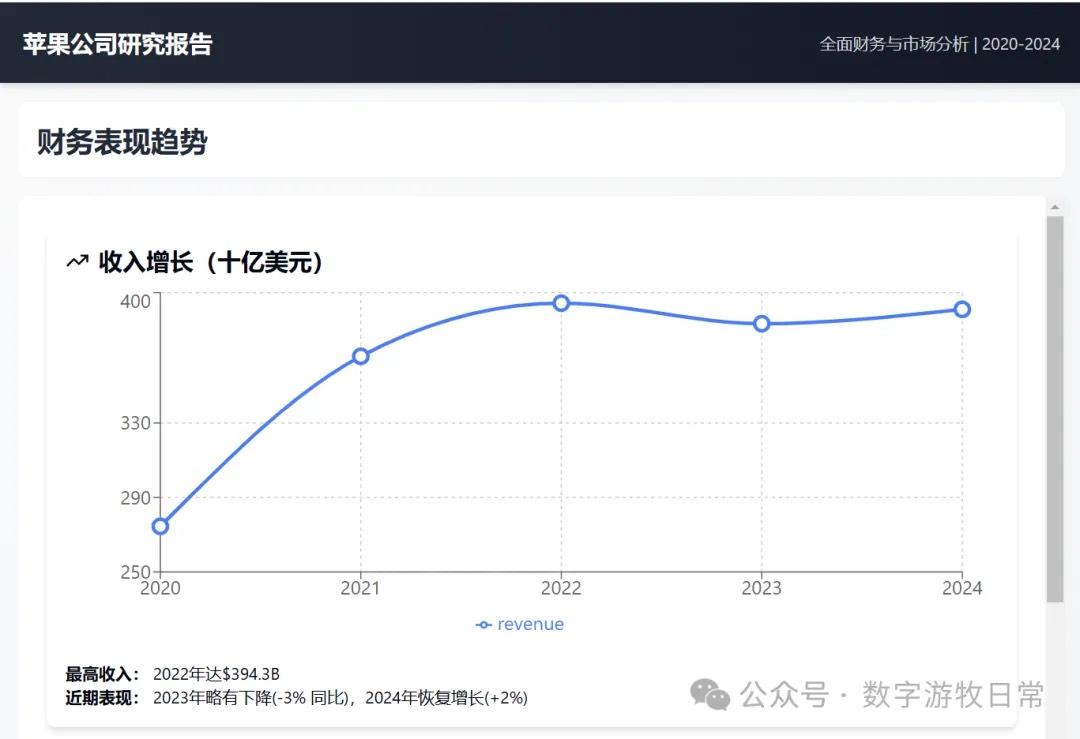

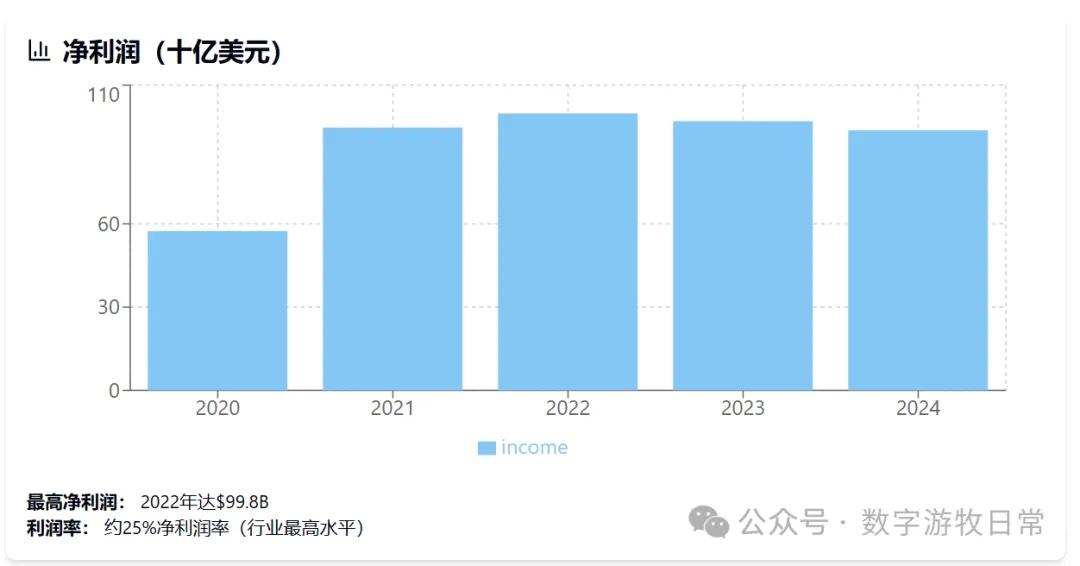

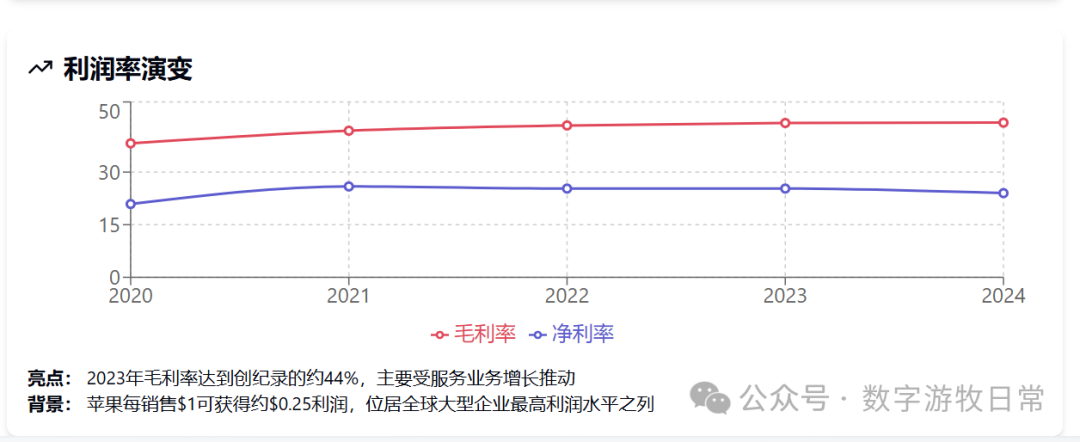

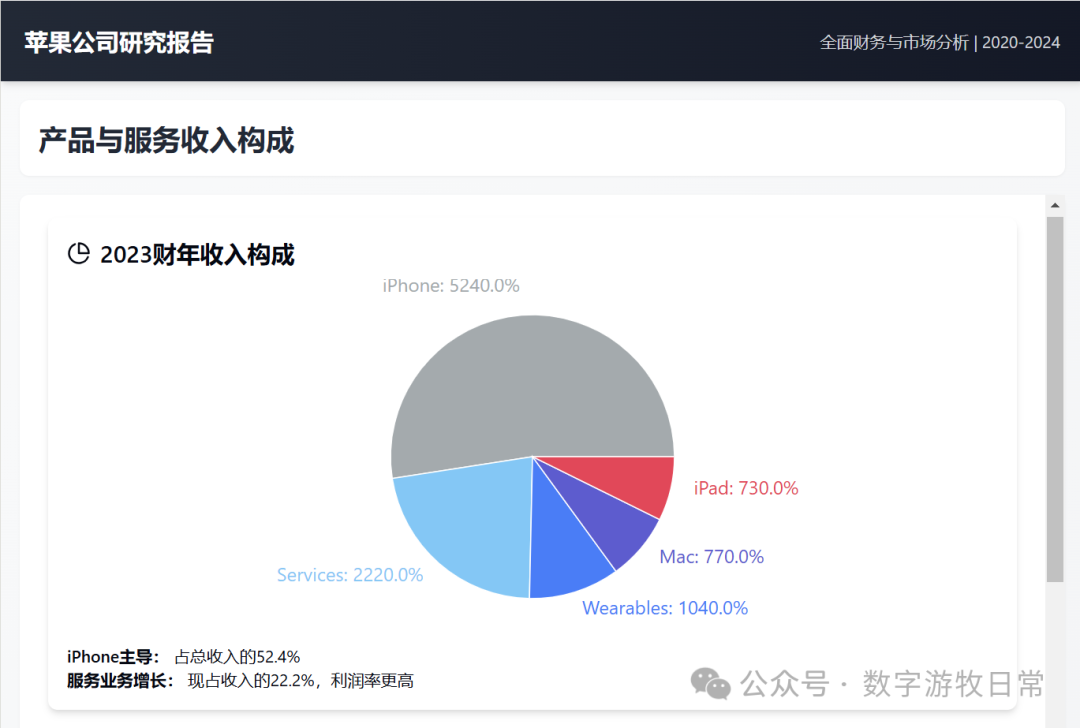

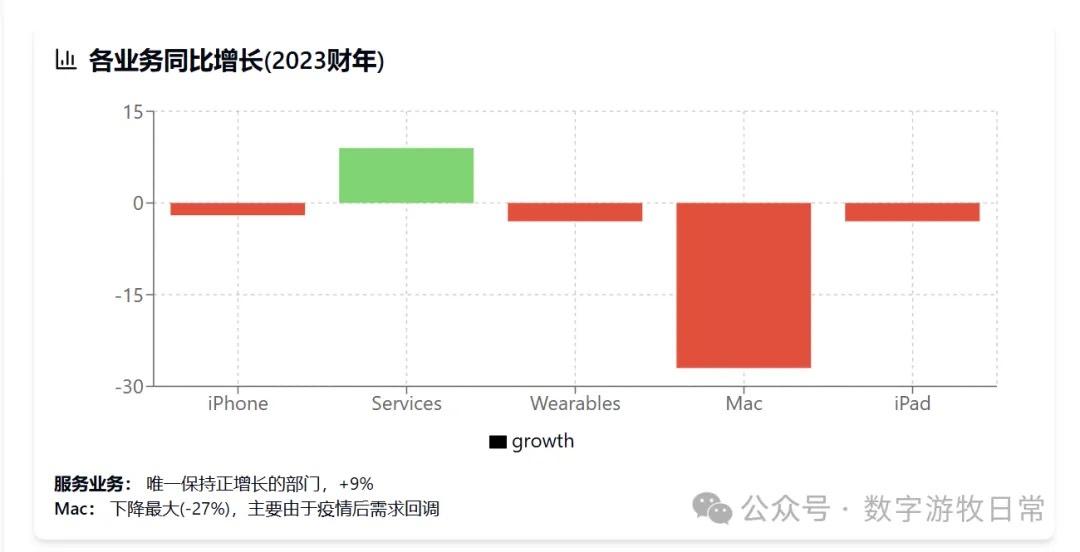

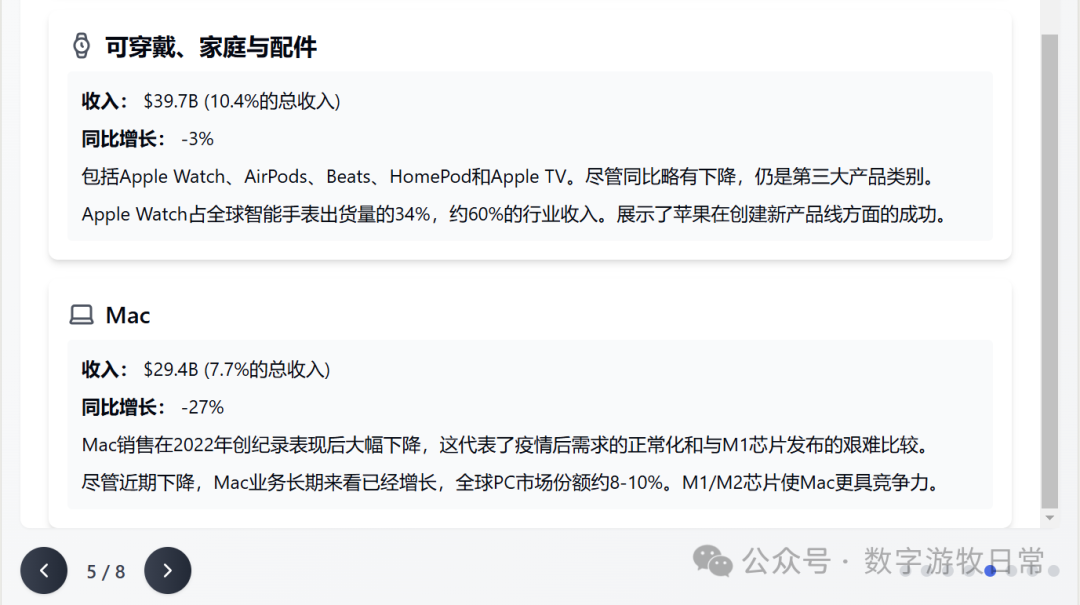

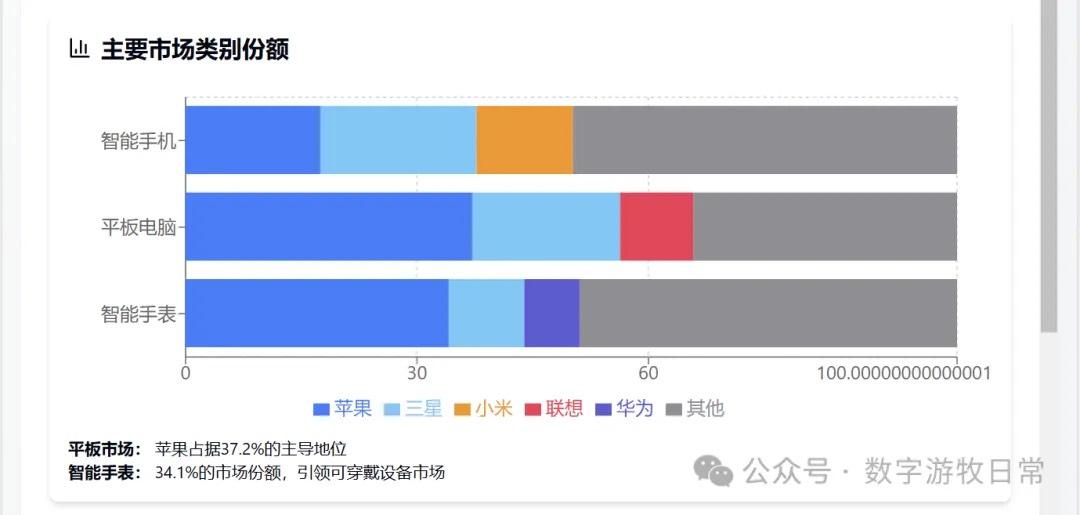

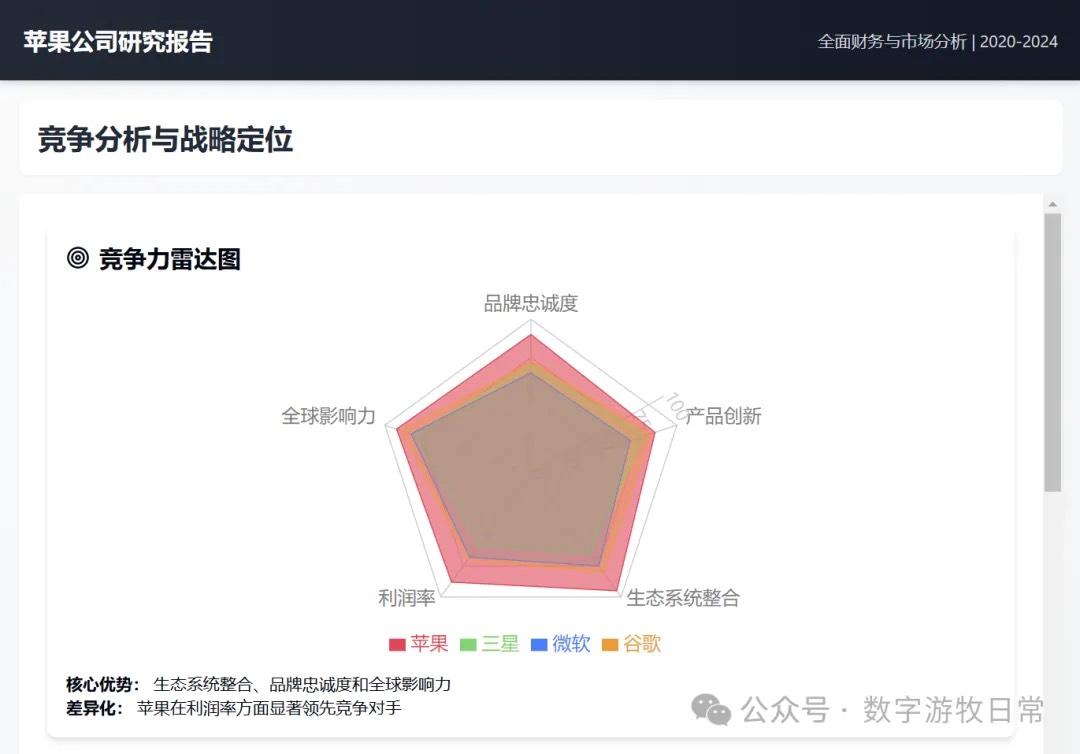

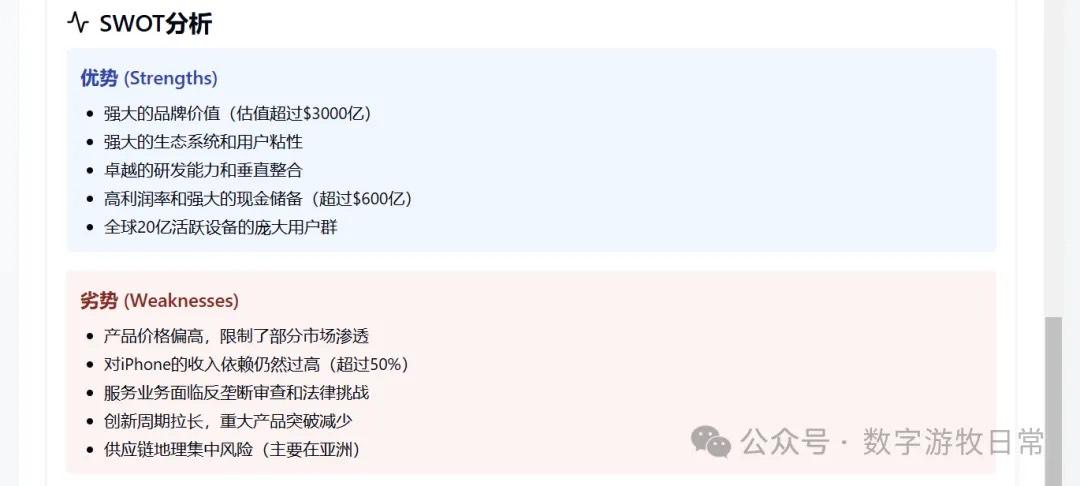

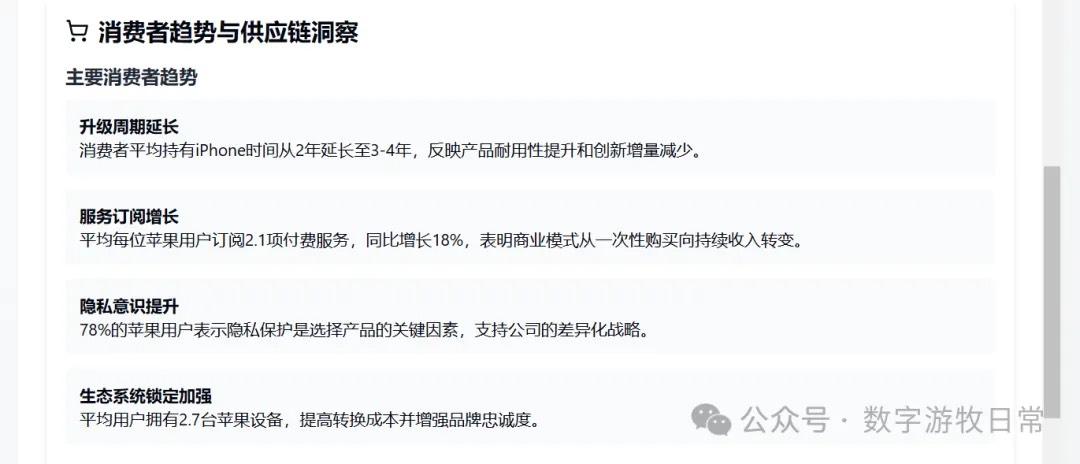

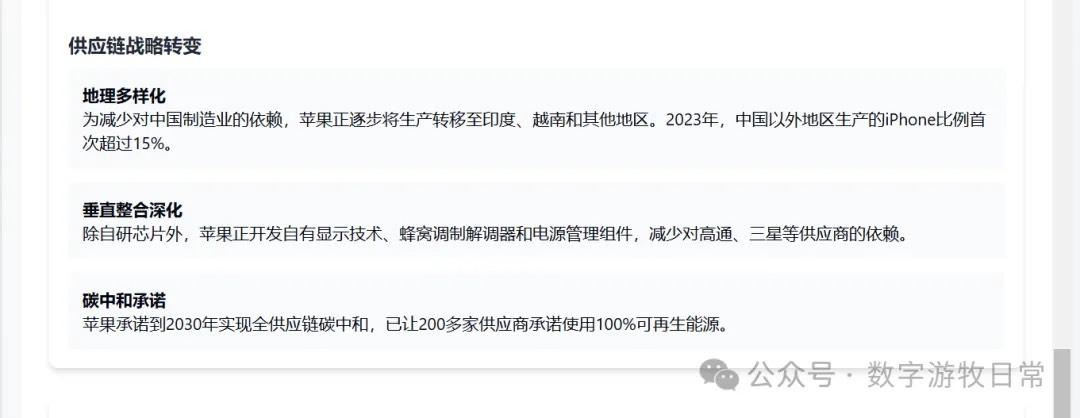

A few days ago, I published a study based on Apple's financial report: Deep Research handled the reporting, while Claude handled the visualization. For now, AI applications may simply be a case of "brute force works miracles."

Actually, I have a more "report-style" version. Because it's quite long, here is an animation showing the effect. Aside from some potential numerical errors (which no model can avoid: not OpenAI, and certainly not Manus built on top of it), the content is richer.

Of course, if you want to see the full version, I have included screenshots below. I wrote a tool to export and edit from Claude, but it still needs optimization. I will release it when the time is right.

Frankly, an average user might find the reports above excellent and Manus's one-click generation very cool (it was once my ideal). But as I've mentioned in previous articles, output like this cannot meet professional requirements.

I actually like Manus as a product, and I especially admire their launch style, integration, and aesthetics. Commercially, however, I don't think they have much of a chance, even though they look more promising than many other AI application startups.

The reason is simple:

You don't own the model. Since the reasoning model belongs to a company like OpenAI and code generation is a capability of top-tier models, others can create a closed-loop product to replace you in minutes. Sam Altman's comment at the end of 2023 was correct: all the tinkering on top of models will be rendered obsolete by upgrades to the models' core capabilities. Moreover, the more startups do, the more they provide free ideas to companies like OpenAI. Replacement is easier now than ever before.

I believe the team has done a lot of work on model fine-tuning and toolchain optimization. However, fine-tuning based on underlying models is only suitable for closed-loop scenarios in professional fields. An open-ended tool cannot compete with internal professional expertise.

This is a mistake I used to make: I always assumed "my needs" represented the needs of many. In reality, my needs might only represent my own. I believe the team behind Manus is full of idealistic, hard-working, and capable young people, but the market they think is huge might still be very niche.

If I, a user in one specific professional field, can already surpass Manus's output today by relying entirely on OpenAI's models and Claude, I believe many professionals and enterprises can do the same.

It seems everyone needs Manus, yet it also seems no one really needs Manus.

Core competitiveness belongs only to the few companies that have their own models, their own chips, or their own ecosystems, and each of those factors matters more than the one before it.

Perhaps in the future, as models mature, one or two massive application companies will emerge. But for now and in the foreseeable future, the opportunities belong only to the giants and those working for them.

This conclusion is harsh, and one I am reluctant to admit (having enjoyed looking down on big corporations for over twenty years), but it is probably the most solid conclusion these facts support.