I have long believed that the early stage of AGI rests on three core capabilities: (1) coding, (2) search, and (3) execution.

Large language models can already write code. The capability is still improving, but Condition 1 is essentially met.

As for search, Gemini Deep Research and OpenAI's Deep Research differ considerably in how complete their results are, owing to their different compute budgets, and both still "hallucinate" some details. Fundamentally, though, they represent a significant leap in search capability, so Condition 2 is essentially met as well.

The third condition, execution, covers a broad range of activities. Anthropic's launch of Computer Use, rudimentary as it is, signaled that models had begun moving into the execution phase. Google, meanwhile, has been steadily rolling out experimental features under the Gemini umbrella.

Naturally, OpenAI was not going to fall behind, and it recently introduced Operator (which, like Sora, has its own subdomain: operator.chatgpt.com). Today I finally had the chance to try it properly, and I was pleasantly surprised.

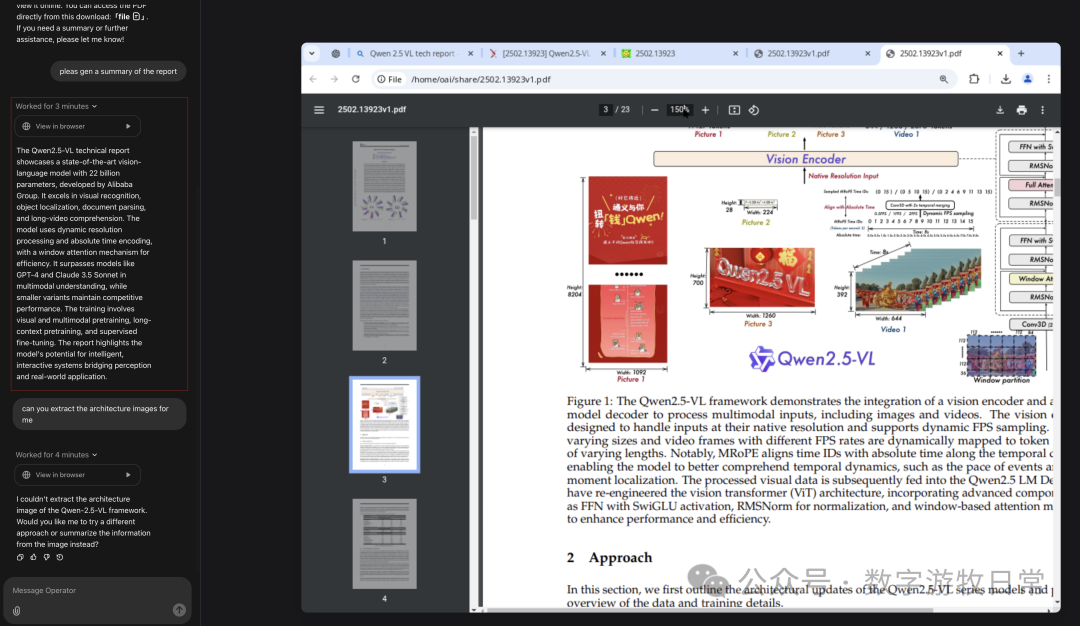

I asked Operator to read the recently released paper on the Qwen 2.5 multimodal model and summarize it. Search alone could certainly handle this task, but if a model can imitate the way a human operates a computer, the significance goes far beyond search.

As the image above shows, Operator made several attempts. Because of browser permissions and the configuration of its virtual machine, it initially failed to render the PDF. After twice asking me whether to continue, it finally loaded the document, browsed through it, and generated a summary. The summary was admittedly a bit brief, but that is most likely because the underlying large language (multimodal) model is relatively small and limited in capability (to save on inference compute).

Next, I asked a follow-up question: I wanted it to "capture" the model's architecture diagram. Having already reached the last page of the paper during the previous task, it paged back one page at a time and finally found the architecture diagram on page 2. Lacking the necessary tools, however, it could not save the image or take a screenshot. This was not a limitation of the model itself; it simply could not find a tool for the job. Even after I "took control" and entered the virtual machine myself, I could not find a suitable screenshot tool either.

Based on what I observed, I believe the model carried out both the "task" and its "execution," which means Condition 3 from the opening of this article has also been met.

For various reasons, Operator runs very slowly; the accompanying video replays only the clicks made at each step.

Look closely at its reasoning at each step, though, and it clearly comes across as much stronger than Anthropic's Computer Use and Rabbit's LAM (Large Action Model).

If a model can choose the appropriate execution path and tools for a task, and keep adjusting to its environment (the virtual machine, the desktop contents) until the task is done, can we then say that it has begun to approach AGI?

I think we can. Obstacles such as persistent memory remain, but there is good reason to believe OpenAI has versions far more capable than this release of Operator (larger multimodal models backed by more compute, with plenty of tools preinstalled in the virtual machine).
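To make the loop described here a little more concrete (pick a tool for each step, observe whether it worked, adjust, and stop once the task is done or no suitable tool exists), below is a minimal Python sketch of such an observe-and-act loop. It is a toy under stated assumptions, not how Operator actually works: `ToyVM`, `open_pdf`, `summarize`, `run_agent`, and every tool name are hypothetical, and "adjusting to the environment" is reduced to retrying a failed action up to a fixed limit.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


# Hypothetical toy "virtual machine": the tools installed in it plus a little
# state the agent can observe. Not a real Operator API.
@dataclass
class ToyVM:
    installed_tools: Dict[str, Callable[["ToyVM"], bool]]
    pdf_loaded: bool = False
    load_attempts: int = 0
    log: List[str] = field(default_factory=list)


def open_pdf(vm: ToyVM) -> bool:
    # Hypothetical flaky PDF viewer: fails on the first two attempts.
    vm.load_attempts += 1
    vm.pdf_loaded = vm.load_attempts >= 3
    return vm.pdf_loaded


def summarize(vm: ToyVM) -> bool:
    # Hypothetical summarizer: only succeeds once the PDF is loaded.
    return vm.pdf_loaded


def run_agent(vm: ToyVM, plan: List[Tuple[str, List[str]]], max_retries: int = 3) -> bool:
    """Run each (goal, candidate_tools) step, retrying on failure."""
    for goal, candidates in plan:
        # Choose the first candidate tool that is actually installed in the VM.
        name = next((c for c in candidates if c in vm.installed_tools), None)
        if name is None:
            # The step is clear, but the environment offers no tool for it.
            vm.log.append(f"{goal}: no suitable tool installed")
            return False
        tool = vm.installed_tools[name]
        for attempt in range(1, max_retries + 1):
            if tool(vm):  # act, then observe the outcome
                vm.log.append(f"{goal}: {name} succeeded on attempt {attempt}")
                break
            vm.log.append(f"{goal}: {name} failed on attempt {attempt}")
        else:
            vm.log.append(f"{goal}: gave up after {max_retries} attempts")
            return False
    return True


if __name__ == "__main__":
    vm = ToyVM(installed_tools={"pdf_viewer": open_pdf, "summarizer": summarize})
    plan = [("load paper", ["pdf_viewer"]), ("summarize", ["summarizer"])]
    print(run_agent(vm, plan))  # True: the PDF loads on the third attempt
    print(vm.log)

    # Like the screenshot episode: the goal is clear, but no tool is installed.
    vm2 = ToyVM(installed_tools={"pdf_viewer": open_pdf})
    print(run_agent(vm2, [("capture figure", ["screenshot", "image_saver"])]))  # False
```

The second run mirrors the screenshot episode: the plan itself is reasonable, but with no screenshot-capable tool installed the loop has nowhere to go, which is why a richer, preinstalled toolset matters as much as the model.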

What is missing is compute: cheap compute for model inference, and compute for "learning" from data covering many more scenarios.

Also missing is an environment that lets the model break out of the "virtual machine" and into the "real world."

One could imagine a super app that "operates" a phone automatically on the user's command, but only the makers of mobile operating systems will be able to deliver the ultimate experience here.

Such a model could also be built into robots to give them stronger "execution" ability, but the same thing is missing there: compute, with more performance at lower energy consumption.