In fact, I have spoken about the issues with DeepSeek model inference multiple times over the past week—whether it's the high threshold for deployment, the illusion of it being "cheap," or its efficiency.

These were covered respectively in:

Deploying Large Models Locally: Only One Week from Start to Abandonment

Optimizing Model Inference Performance is Not Simple

DeepSeek Research (2): Minority Report

However, I was suddenly asked the same question several times in a single day, so I'll address it here.

Now that everyone understands the difference between the "distilled," "native," and "full-fat" (unquantized) versions, the threshold for the full-fat version is very straightforward: 671GB (model file size) / 80GB (GPU VRAM) = 8.4. This is an awkward number; eight H100s aren't enough. Therefore, the minimum configuration is one 8-GPU server plus one 4-GPU server. Usually, this means two 8-GPU servers.

A technical person might suggest: You can deploy the "quantized" version on four cards or a Mac Studio cluster, but the concurrency is poor; you have to queue up. By using two servers and installing the "full-fat version," you can support high concurrency. Furthermore, since constantly running servers are prone to crashing, adding two more for "hot backup" ensures no business disruption.

Technically, this is entirely correct.

First, let's discuss the difference between the "quantized version" and the "full-fat version":

The "quantized version" has a smaller model file because the precision used to represent each parameter is lower. For example, 4-bit uses 4-bit integers to represent each parameter (among the 671B parameters), whereas DeepSeek-R1 (actually based on V3) is BF8, using 8 bits for each floating-point parameter. This sacrifices precision but halves the resource requirements. Currently, there is also a dynamic quantization version that can reach 1.58-bit, which is roughly one-fifth the size and can be handled by four H100s.

The advantage of quantization is that it requires fewer resources and offers faster inference. The disadvantage is the drop in precision, leading to lower output quality (in most scenarios, this isn't a major issue, but if the conversation length is too long, the hallucination rate increases significantly as precision becomes insufficient).

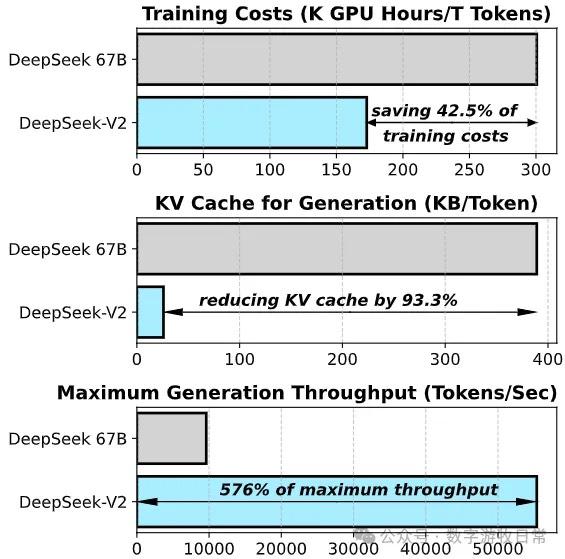

The quantized version has an even bigger drawback for DeepSeek models because the model itself is MoE (Mixture of Experts), with 24 experts of 37B each. In the inference optimization of the "full-fat version," these 24 experts can be distributed across different GPUs to balance the load and improve concurrent output. Quantization loses this huge advantage of flexible MoE deployment. Additionally, because quantization is essentially compression, the model requires more computation during runtime, leading to higher KV-Cache usage. The actual VRAM required during operation will be significantly larger than the model file size, and the cache occupancy will spike during high concurrency.

Next, let's talk about concurrency—how is it achieved?

If only one model is deployed (without a front-end load balancer), concurrency is achieved in two ways:

Cache hits: No inference is needed; results are read directly from memory.

Since different layers of the model are deployed on different GPUs or devices, for a multi-card setup barely running one model, each card can technically only process one request at a time. However, in the best-case scenario, four cards could process four requests simultaneously (provided that each request calls layers that happen to be on different GPUs at that moment).

So, strictly speaking, the number of cards is the theoretical upper limit for handling concurrent volume.

When deploying the "full-fat version" on two or more servers, MoE provides an added benefit: since each inference only calls one 37B expert model at a time, the supported concurrency increases significantly. However, this specifically applies to V3; the situation for R1 is much more complex.

I suspect this is why DeepSeek officially provided inference performance optimization results for V2 but not for V3 and R1. First, V3 must be deployed across multiple machines, shifting the bottleneck from memory bandwidth to network bandwidth; performance optimization is no longer about a single node but potentially requires load balancing for the entire cluster. Second, the thinking process of R1 involves expert model hits and routing that DeepSeek might still be continuously optimizing; there are several issues on GitHub discussing these problems.

Inference performance is a highly complex issue. If resources are only enough to run a single model, even with four 8*H100 servers, expectations for concurrent performance shouldn't be too optimistic. Currently, SGLang seems to be the fastest, but performance optimization is still ongoing.

SGLang deployment method for DeepSeek:

https://github.com/sgl-project/sglang/tree/main/benchmark/deepseek_v3

For enthusiasts, you can follow the progress and discuss optimization methods. For enterprise deployment, especially after investing in servers...

At this point, we can basically draw two conclusions:

No matter how you calculate it, providing online inference services for "full-fat" models is a losing game financially. This means that as long as the data isn't sensitive, one must "freeload" on existing services.

For local deployment, there is another pitfall: the "web search function." Therefore, the first thing to optimize is the business workflow—deciding which tasks should "freeload" via web access and which should be processed locally in batches.

Finally, one more conclusion: without a massive cluster and strong ecological support, cloud MaaS (Model-as-a-Service) has no chance. The better the service and the more customers you have, the more you lose, and the faster you "die." There is essentially no viable business model.

Returning to the clock two years ago: why do we need an ecological cloud?