I've discovered how to use Gemini and OpenAI's Deep Research: for a specific topic, they automatically organize information. For unfamiliar fields, it may be the fastest and most reliable way to learn quickly. For familiar fields, it acts as an "efficiency tool" for producing more comprehensive and fundamental content.

So, yesterday, regarding model inference performance issues, I assigned the work to OpenAI, but the heavy lifting of "full-text translation" still had to be handled by Gemini.

Complete Basic Introduction to Large Model Inference Performance

Daoming, Public Account: Digital Nomad Daily

[Deep Research-3]: Let's discuss LLM inference performance and have o3 do a "fact check."

With this combination, I can jump straight to the conclusions below:

For local deployment on personal devices (usually laptops or PCs), performance isn't the primary consideration as long as it isn't too slow (e.g., my 0.1 tokens/s last week). What matters is the balance between model capability and hardware resources (GPU, VRAM, RAM). Using the best possible model within acceptable hardware constraints is a better choice: an M4 Max-equipped MacBook should be running 70B models.

For local deployment on mobile phones, the principle is sufficiency. Given enough capability, the model size should be as small as possible (2B/3B, or even 1.5B/1B?). However, this sets a very high bar for phone manufacturers. Since the user experience is constantly perceived, it requires high integration of models, data (what Apple calls context from user profiles), and application scheduling. So far, I have three high-frequency scenarios on my phone: 1. Circle to Search (or translation); 2. Speech recognition/transcription; 3. Handwriting recognition in Samsung Notes. These are all driven by Gemini. I'm still trying out things like GMail email summaries, but organizing information always requires a quiet block of time.

For internal enterprise deployment, I've always felt this business belongs to private cloud services. It's hard for a single company to find the optimal efficiency point—low usage leaves hardware and personnel idle, while high usage leads to insufficient hardware and skyrocketing maintenance costs. If moving to a private cloud, the more appropriate method should be renting services rather than raw compute, paying by usage, ensuring easy migration, and simplifying internal performance evaluation models.

Cloud deployment, or so-called MaaS (Model as a Service). MaaS is not as simple as building or renting a data center, buying some servers, downloading an "open-source model" (open weights), and providing API calls with a billing module. In fact, it’s quite complex: proprietary models, proprietary hardware, proprietary optimization algorithms, value-added SaaS services, and downstream ecosystems—you need at least one of these elements, otherwise, why would users choose you? Of course, there's also proprietary resources, like cheap land, cheap electricity, or being close enough to users. But the core remains two points: model capability and total package cost.

This has essentially evolved into:

- SaaS services that sell both software/services and bundled CSP hardware rentals.

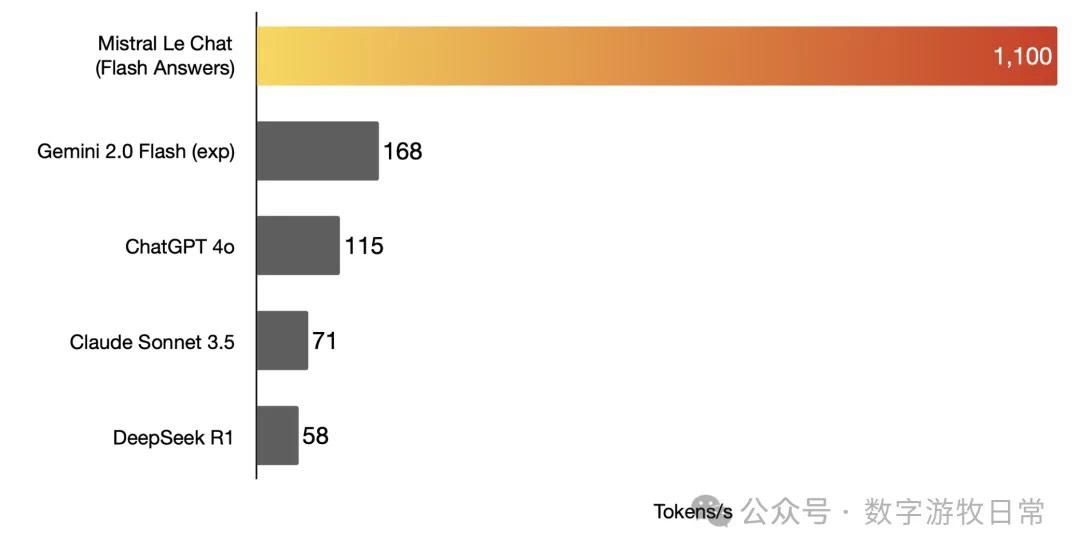

- Model invocation services on specialized inference chips (Groq and Cerebras). SRAM-based chips must be deployed by the providers themselves, but their inference speed is incredibly fast. However, if the Transformer architecture is replaced, the usability of this hardware will significantly decrease.

- API services brought by downstream ecosystems. Currently, Google and Samsung seem to be moving the fastest. Although both are Gemini-driven, Samsung rebranded it as "Galaxy AI." Samsung doesn't charge for it, likely because Google doesn't charge separately either (except for the Gemini Advanced version). Google has its own models, its own TPUs, and its own ecosystem. Because of this, the market still has high expectations for Apple: a better ecosystem, its own chips, iCloud services, and its own models will eventually arrive and be "good enough."

Interestingly, the combination of proprietary chips and proprietary models is a double-edged sword. Only if it's "cheap and abundant" will there be users and a sustainable business model, because users likely won't rent compute directly; they will rent API services (even when called within apps). If the model stops leading, API calls will vanish, and the proprietary chip becomes a "liability." Or if the chip falls behind, high inference costs will "deter users."

Of course, deep integration with the most advanced compute plus value-added services seems like it can survive for a while, whether by renting out compute or providing third-party model API services. Most importantly, each generation of hardware upgrades by top-tier companies can reduce inference costs by over 90%. If a data center has sufficient scale and user volume at that point, it can further improve efficiency and reduce costs.

Ultimately, if everyone is just "playing the middleman," it comes down to who has the lowest cost. The most advanced compute is clearly the biggest contributor to cost reduction, followed by data center and user scale—the larger the scale, the more room for optimization and lower costs.

Point being: if even large data centers have insufficient utilization and high call costs, what role is left for "small, resource-strapped" data centers?

The more they are used, the more they lose.

After all, examples of leaving the rat race in big cities like Beijing or Shanghai to live a happy "pastoral life" mostly just exist on social media.