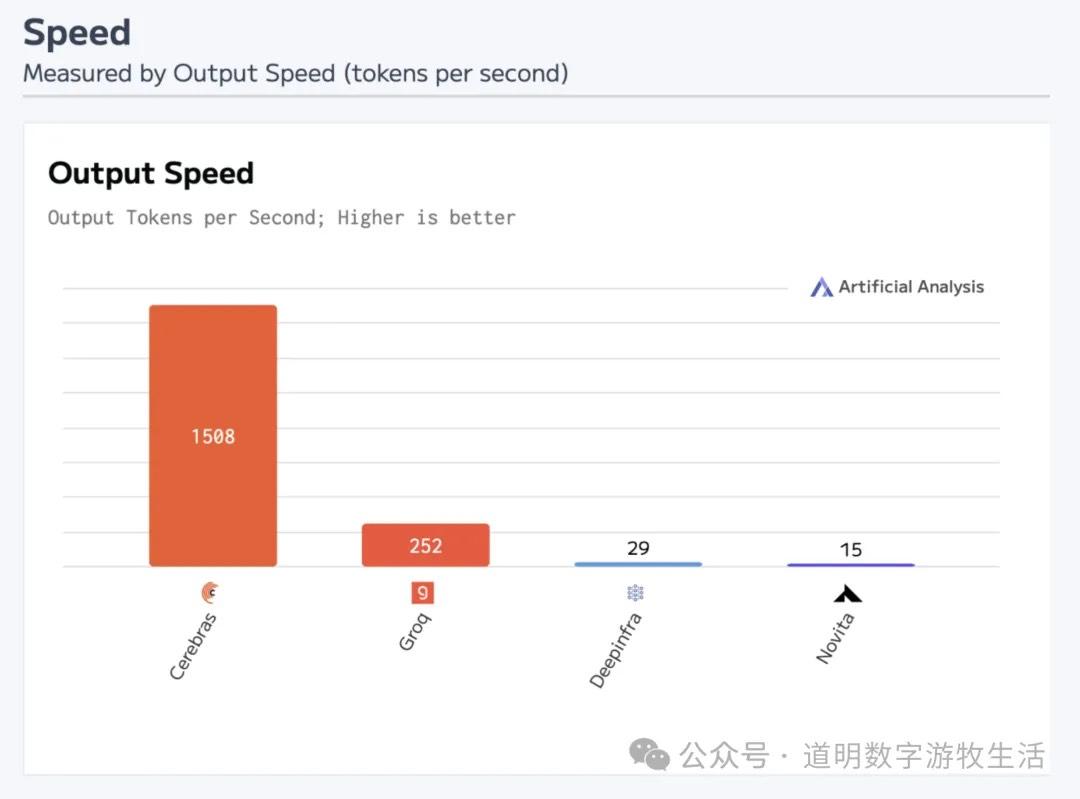

Following the announcements from Groq and Cerebras regarding support for DeepSeek-R1, and their expected "ceiling" values for inference speed, the short-term technological shockwave caused by DeepSeek has essentially come to an end.

However, because technology and capital markets are so deeply intertwined, the psychological aftershocks seem far from over:

Is this bad news for computing power and NVIDIA? Because it looks like we don't actually need that many GPUs;

Since the "barriers" don't seem that high, does it still make sense for big companies to spend so much capital on training?

Answering these questions is truly difficult; there are too many threads, and logic can easily become muddled.

Actually, the fundamental reason for the massive shockwave caused by DeepSeek is this: on the surface, OpenAI models haven't shown explicit, massive progress for over six months (though we know frontier models are becoming more useful), and o1 and o3 are still just experiments on important paths, none having reached a "ChatGPT moment."

Hypothetically, if one or more of OpenAI, Google, or Anthropic were to release a next-generation model in a short time (three months?) that easily improved various benchmarks by more than ten points, even if the cost were ten times higher, the market might not think the "Pinduoduo of models" was that remarkable.

From any perspective, there is no doubt that since the second half of 2024, the progress speed of models has significantly lagged behind the previous expectation curve. The main reason is obviously the instability of OpenAI as a de facto "startup crew," but an important secondary reason is the series of technical issues encountered during the deployment of NVIDIA's Blackwell clusters.

So, it's not entirely unfair for "computing power," represented by NVIDIA, to take the fall for market skepticism: the promise of "20-30x training and inference power increase in large clusters, with 4x energy efficiency" has been a pending future for nearly a year.

The only way to counter skepticism is through better models. When a student the "top student" helped gets a higher final exam score, the top student has no choice but to prove themselves by competing in higher-level competitions.

Then comes the second question: since the path to the "next generation" is full of uncertainty, and even if reached, one might be quickly caught, should massive capital expenditure continue?

Microsoft and Meta provided clear answers in their earnings calls: Continue!

Why?

Because "more data, more computing power" is the path with the strongest certainty, or the only visible one: for decades, or since the invention of the computer, every algorithmic innovation has stemmed from "hardware bottlenecks," but the scaling laws of innovative algorithms will consume all hardware improvements in the shortest time—big data being a previous example.

Despite more competitors, it seems that in a year or two, the market will still consume almost all of the "best computing power." in the field of compute, the core contradiction is not "is it needed," but the time gap between the "paper specs" at a launch event and the "actual compute" that is truly deployed. If this gap approaches the generational upgrade time on a hardware company's roadmap (a Moore cycle?), then demand will disappear for an entire generation.

These are the real issues to focus on. In fact, it has been the primary underlying logic of the "smart market" over the past six months.

This covers all of the computing power issue and half of the capital expenditure issue.

The other half of the capital expenditure problem is: monetization.

The past two years seem to have proven one thing: it is impossible to make money purely by selling large language models.

The essence of selling LLMs is selling compressed data (everyone is essentially doing "distillation," and in the end, there is no good solution other than administrative "usage restrictions"). "Exclusive data" can sell for a high price, while data that "everyone has" is worthless.

From a product perspective, these data must be attached to carriers that can "open up scenarios." This is why I favored AI hardware and the edge from the start, and why I have been less optimistic about Microsoft. Microsoft's past success was the success of the Wintel alliance, followed by the monopoly of Office based on the Windows monopoly, and then the position of Azure cloud based on "enterprise office digitization." The reason Azure cloud maintained high growth when large enterprises cut capital expenditures after 2023 is also simple: its monopoly on OpenAI.

"Far ahead" software and experiences can create scenarios, build ecological monopolies, and then open up more monetization paths. Players who are most urgent to increase capital expenditure represent a strong desire to "break through via technical innovation," and they are "betting" big. Although the success rate isn't high, the rewards of success are large enough that the massive "expected return" can support a massive narrative.

If the market starts to lower "expected returns," the story becomes even more interesting. However, looking back over the last half-year, have these "fundamental" factors changed significantly? Will they change significantly in the future? Not really. It's just that a "bucket of cold water" is occasionally needed to cool down an overheating fever.

DeepSeek’s appearance is more like that "bucket of cold water." If not DeepSeek, it would have been someone else, like the "less fortunate" QWen, MiniMax, or Kimi...

If you look at benchmarks, domestic models have taken off. I believe the technical gap is small; after all, even abroad, many people use QWen2 for local deployment. This is a normal manifestation of technical and data spillover, which is why I have been saying since 2023 that the gap is roughly six months.

As mentioned before, so-called LLMs are essentially "data compressors." OpenAI's business is not selling models, but selling data. Data inevitably becomes cheaper to sell, and publicly sold data cannot remain secret.

Actually, OpenAI is well aware of this problem, but if time were wound back two years, their options were likely limited. Moreover, with massive capital pouring in from all directions, choosing to go "closed source" to generate revenue rather than "open source" to solidify a base is likely the choice anyone would have made.

So, returning to the models themselves, there are roughly two solutions: compress more data; and give models personalized memory (which I am reluctant to call "intelligence").

The former involves finding more massive "new data," such as images, video, sound, and spatial information; the latter involves combining the model with scenarios to "fuse" with more scenario-specific data.

The former is still part of what "pre-training" is preparing for; at least the progress of Sora indicates that data demand is larger than expected, not smaller.

The latter is what "post-training" reinforcement learning truly aims to solve. It is the ultimate goal of thinking models like o1 and o3, though it remains unclear if it can be achieved through the methods used by o1 and o3.

From a "decompression" perspective, multi-modal or "physical world" data reconstruction requires very high fidelity. Sora's "wow" seller showcase vs. its frequent "buyer showcase" failures likely proves this: why do relatively simple, focused scenarios look good, while large-scale scenes look full of loopholes?

Essentially, those riddled loopholes are the result of "not enough data, filling with randomness."

During the holidays, I tried to illustrate this with the following example.

Therefore, if we remain "materialists," data remains one of the most important foundations toward so-called AGI.

Then, another question: where is personalized memory (or the "intelligence" I'm reluctant to name)? It is in every usage scenario.

This appeared in narrow applications, specifically the evolution from AlphaGo to AlphaZero. The former compressed enough game records, and the play process was a reconstruction ("exhaustion") of various scenarios through calculation; the latter trained itself through deep reinforcement learning (RL on deep neural networks) during "play," strengthening memory through "rewards" from game results, eventually turning play into a sort of "conditioned reflex."

Because the scenario of Go is very focused and "rewards" are easy to set, this method is not only theoretically feasible but also practically successful. Today, the greater value of AlphaZero is not proving AI exceeds humans (no longer needs proof in Go), but acting as the most powerful coach for player training. The goal of "AI making people better without harming them" has been achieved, at least in such narrow fields.

But back to "AGI," the problem is much more complex. Although deep RL is theoretically still feasible, in practice, setting aside the issue of massive general scenarios, even the same question in different scenarios for different people has different answers. "Reward" settings cannot be one-size-fits-all.

This is a primary reason why the importance of spatial and embodied intelligence has risen rapidly recently. It is also why OpenAI is attempting the o1 and o3 path.

However, regarding thinking models like o1 and o3, I still feel this path looks blurry. First, it is still a cloud model mode that is "the same for everyone"; second, obviously, the "reward data" used for training is still pitifully scarce.

I believe OpenAI spent a lot of time and cost training o1 and o3, but any way you look at it, these "thinking models" seem like large "prompt models": the training process might just be about how to better translate a user's plain language into prompts that help "GPT-4o" output better results.

I also believe that a model truly possessing "thinking ability" after reinforcement learning would be very difficult to "distill." But a "prompt model" is essentially still a data compressor, just selling so-called "thinking data." However, for now, the actual data volume of this "prompt model" is much smaller than an LLM, and the decompression cost might be much lower.

I also believe that if given enough "reward" data, "thinker models" might "emerge" with thinking abilities. But where will this data come from? Especially personalized "reward" data?

The answer might be very focused: After the release of the ChatGPT "Assistant" feature, there is a hardware gap between OpenAI and Agents.

PS: Due to length and various reasons, this piece leaves some "loose ends" on certain issues.