With CES about to open, the AI story of 2025 naturally begins with NVIDIA, and specifically with Jensen Huang's keynote speech.

NVIDIA's software platform and ecosystem have always been difficult to describe clearly: a very good design, but a vague user experience.

Therefore, let's focus on two new hardware releases: the Blackwell-based consumer graphics cards, the RTX 50 series, which is available now; and a future product, the AI PC concept Project DIGITS.

The RTX 50 Series and AI Inference Demands

First, the GeForce RTX cards have always been positioned in NVIDIA's gaming business line. However, once the AI boom began in 2023, massive demand for local inference emerged. The 40 series cards (Ada Lovelace architecture), from the same generation as the Hopper-based H100, became the highest-performance choice for that work.

In terms of pure GPU capability, the flagship 4090 is actually stronger than the H100. However, because it uses GDDR6X memory, which has lower bandwidth than HBM, its overall inference performance is slightly inferior. Comparing the two, the 40 series' advantage is its versatility in gaming, image/video processing, and 3D rendering: it is a proper graphics card. Its weaknesses are the 24GB VRAM ceiling, which prevents loading larger models, and the lack of NVLink, meaning no high-speed interconnect between cards. The H100's advantage lies in its 80GB of HBM and its NVLink support for large clusters; its weakness is that it cannot function as a graphics card.

Additionally, the 40 series can be fitted into high-end desktops with relatively easy control over power consumption, cooling, and noise. The H100 requires at least a high-end workstation or server, with much stricter demands on the data center environment.

Thus, the H100 is strictly for AI, while the 40 series is the top choice for gaming, video processing, and 3D rendering, and can also handle general AI applications. NVIDIA also has a professional RTX line. For instance, the RTX 6000 Ada shares the same architecture as the 40 series but comes with 48GB of VRAM, double that of the 4090, though with slightly lower memory bandwidth. Its rendering speed and AI inference performance are slightly lower than the 4090's (within a 10% margin), but it consumes less power, is more compact, and has more memory, making it the best choice for demanding individual users or studios caught between the H100 and the 40 series.
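The VRAM limits above can be made concrete with a back-of-the-envelope check. The sketch below is a rough illustration only: it counts model weights alone (no KV cache, activations, or framework overhead), and the model sizes of 8, 32, and 70 billion parameters are hypothetical examples, not figures from the keynote.

```python
# VRAM capacities quoted in the text, in GB.
CARDS_GB = {"RTX 4090": 24, "RTX 6000 Ada": 48, "H100": 80}


def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GB (10^9 bytes)."""
    # params_billion * 1e9 weights * (bits / 8) bytes each, divided by 1e9.
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: int, vram_gb: float) -> bool:
    """True if the weights alone fit; real workloads need extra headroom."""
    return weight_footprint_gb(params_billion, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only for illustration.
    for params in (8, 32, 70):
        for bits in (16, 8, 4):
            need = weight_footprint_gb(params, bits)
            ok = [name for name, gb in CARDS_GB.items() if fits(params, bits, gb)]
            print(f"{params}B @ {bits}-bit: {need:.0f} GB weights, "
                  f"fits on: {', '.join(ok) or 'none'}")
```

Under these assumptions, a hypothetical 70B-parameter model at 4-bit precision needs about 35 GB for weights alone, which exceeds the 4090's 24GB but fits within the RTX 6000 Ada's 48GB.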

With that background, let's turn to the protagonist: the Blackwell-based 50 series cards. This review focuses specifically on the flagship model: the 5090.

Since NVIDIA announced the Blackwell architecture early last year, many have had high expectations for the 50 series, especially regarding its AI capabilities.

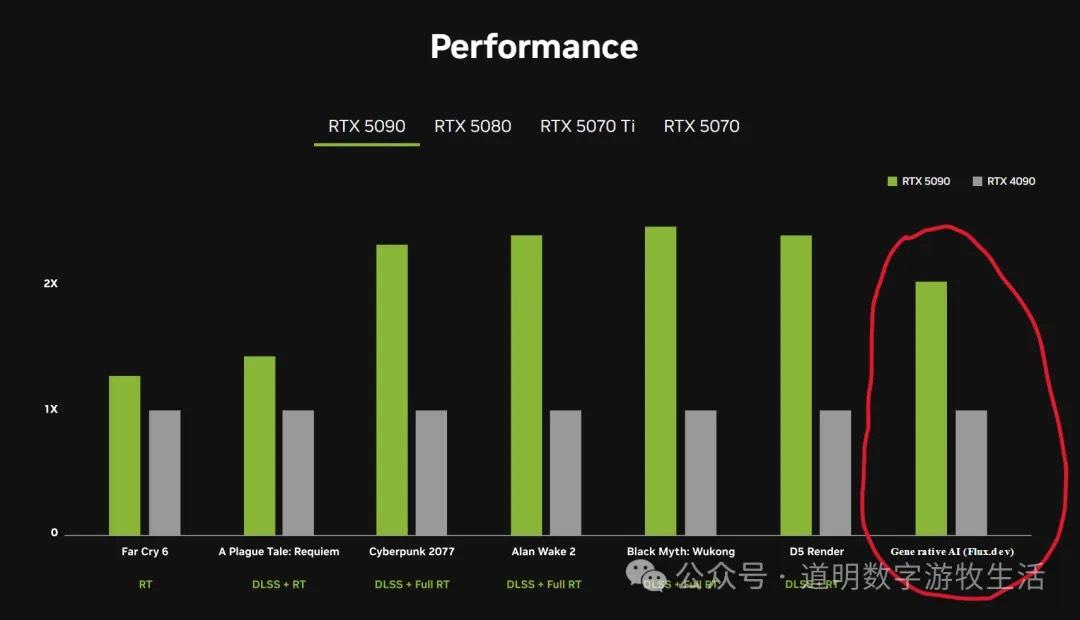

The performance comparison features image generation with the Flux.1 model. According to the keynote, generating an image takes 15 seconds on a 4090, while on a 5090, using the same prompts and parameters, it takes just over 5 seconds.

Looking at these charts, the performance boost seems substantial, especially when combining gaming and image generation. However, while gaming performance is a "blind spot" for me, the testing environment notes reveal that the 40 series used DLSS FG (Frame Generation) while the 50 series used DLSS MFG (Multi Frame Generation). This suggests the gains come more from algorithmic improvements than from hardware capability.

The two items on the left side of the performance chart probably better reflect actual hardware gains: roughly 20% to less than 30%.

This aligns with our understanding when Blackwell was first announced: on a per-die basis, Blackwell's improvement over Hopper is about 25%, largely due to an increase in die size.

So, what about the image generation speed? The test conditions clarify (though the press release is vague and the font in the chart is tiny): the 40 series used FP8, while the 50 series used FP4. FP4 uses half the bits of FP8, so on the same hardware it should run roughly twice as fast. If the speed comparison is 15s vs 6s (2.5x), dividing out that 2x to bring both cards to the same precision leaves a 1.25x, or 25%, performance uplift.
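The normalization above can be written out as a small calculation. This is a minimal sketch, assuming (as the text does) that halving the precision from FP8 to FP4 doubles throughput on the same hardware; the function name and the `precision_factor` parameter are illustrative, not anything NVIDIA publishes.

```python
def normalized_speedup(t_old_s: float, t_new_s: float,
                       precision_factor: float = 2.0) -> float:
    """Raw speedup with the assumed precision advantage divided out.

    precision_factor is the assumed throughput gain from running the new
    card at lower precision (FP4 vs FP8 is taken as 2x here).
    """
    raw = t_old_s / t_new_s
    return raw / precision_factor


if __name__ == "__main__":
    raw = 15 / 6                      # 4090 at FP8 vs 5090 at FP4
    adj = normalized_speedup(15, 6)   # same-precision estimate
    print(f"raw speedup: {raw:.2f}x, precision-normalized: {adj:.2f}x")
```

With the 15s and 6s figures this prints a raw speedup of 2.50x and a precision-normalized speedup of 1.25x. The 2x factor is the weakest link: real FP4 kernels may gain more or less than that.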

20% to 30%: that is the true hardware performance increase of the Blackwell architecture.

Indeed, when Blackwell was announced last year, many (including myself) fantasized about the 50 series featuring dual-die chips like the B200. That did not happen.

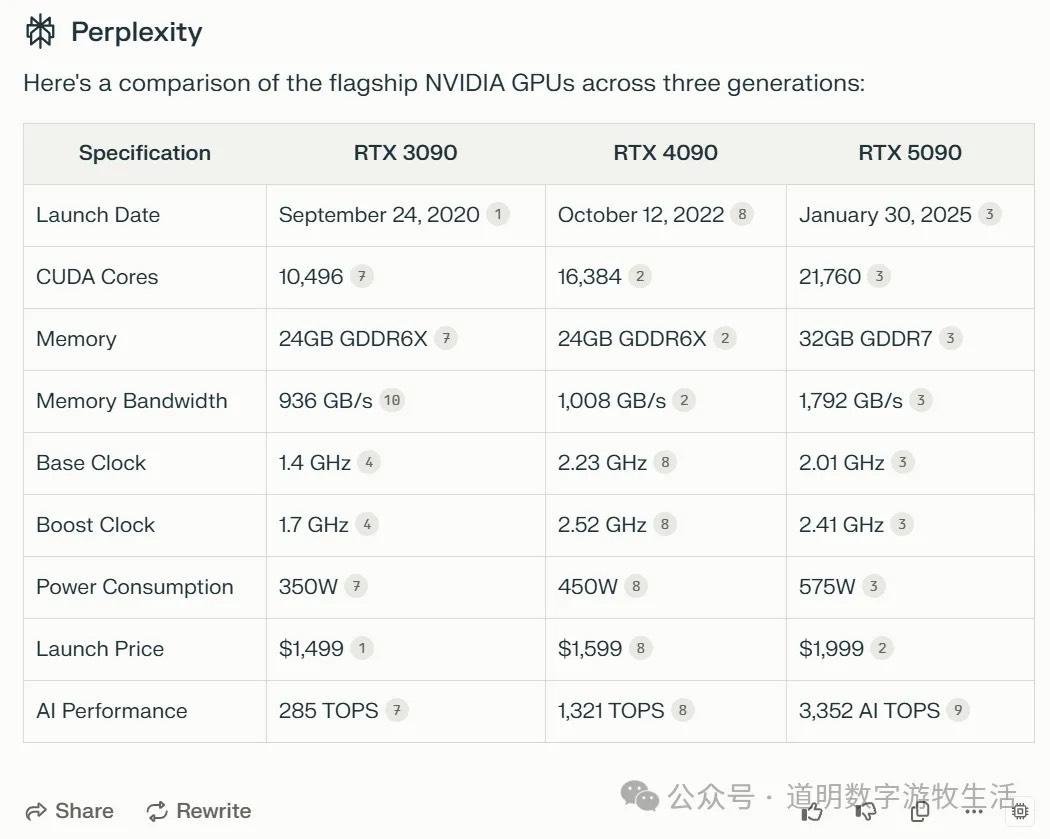

Combining the last three generations starting from the 30 series, we see an interesting trend: the most significant leap was from the 30 series to the 40 series. Due to various reasons, the price of the 4090 has continued to rise in China.

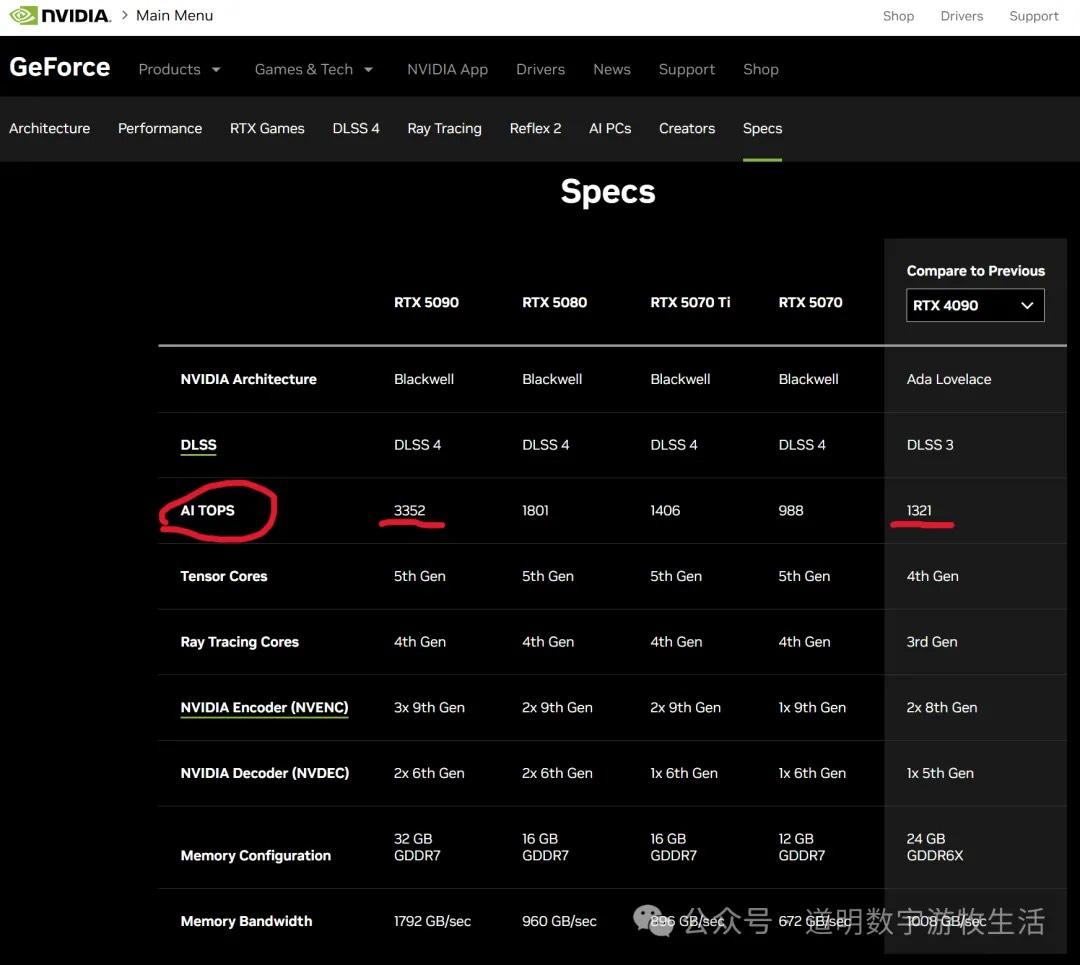

NVIDIA no longer discloses FLOPS performance across different precisions in its specs, opting instead for the concept of "AI TOPS." They compare the 5090's 3352 TOPS at FP4 to the 4090's 1321 TOPS at FP8. Converting back to FP8 by halving the FP4 figure, it's 1676 TOPS vs 1321 TOPS, an improvement of just under 27%.

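Looking at the launch cycles, the gap between the 40 and 50 series has extended by about two months compared to the 30 to 40 series gap. The truth behind the glossy exterior: there is no "black magic." Looking at power consumption, 575W vs 450W is an increase of nearly 28%. In other words, the roughly 27% gain in FP8-equivalent compute is matched by a similar rise in power, so performance per watt is essentially flat.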
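The TOPS and power figures can be put side by side in a few lines. This is a sketch under two assumptions: throughput doubles each time precision is halved (the same conversion the text uses), and the 575W and 450W ratings stand in for actual draw under load, which they may not. The `Card` class and its method names are made up for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    ai_tops: float        # headline "AI TOPS" figure
    precision_bits: int   # precision at which that figure is quoted
    power_w: float        # rated board power, used as a proxy for real draw

    def tops_at_fp8(self) -> float:
        # Assumption: throughput scales inversely with bits per value.
        return self.ai_tops * self.precision_bits / 8

    def fp8_tops_per_watt(self) -> float:
        return self.tops_at_fp8() / self.power_w


RTX_4090 = Card("RTX 4090", ai_tops=1321, precision_bits=8, power_w=450)
RTX_5090 = Card("RTX 5090", ai_tops=3352, precision_bits=4, power_w=575)

if __name__ == "__main__":
    compute_gain = RTX_5090.tops_at_fp8() / RTX_4090.tops_at_fp8() - 1
    power_gain = RTX_5090.power_w / RTX_4090.power_w - 1
    print(f"FP8-equivalent compute gain: {compute_gain:.1%}")
    print(f"rated power increase:        {power_gain:.1%}")
    for card in (RTX_4090, RTX_5090):
        print(f"{card.name}: {card.fp8_tops_per_watt():.2f} FP8 TOPS per watt")
```

Under these assumptions the two cards land within about 1% of each other in FP8 TOPS per watt, with the 5090 marginally lower.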

Market investors are smart, and NVIDIA's stock performance after the keynote says it all. Of course, the 50 series has its selling points: if you need DLSS 4 and FP4, the 40 series doesn't have them.

In simpler terms: if the 4090 isn't meeting your gaming needs, the 5090 is the inevitable choice. For AI inference, if FP4 precision suffices, the 5090 could potentially halve your operational costs compared to the 4090.

The Second Release: Project DIGITS

This was a surprise. The market knew NVIDIA and MediaTek were working on an SoC. After Apple's new Mac mini launch, more people realized the massive potential for small desktop AI PCs. In terms of form factor and aesthetics, this product looks promising.

Performance-wise, it hits 1 petaFLOP at FP4. That is less than one-third of the 5090's 3352 TOPS, but considering its size and power draw, it's actually quite good. The highlight is the ConnectX chip. Although it's a future product and NVIDIA hasn't released actual network specs, it theoretically supports 400Gbps of inter-machine interconnect bandwidth.

With 128GB of unified memory, it takes direct aim at the Apple M4 Max's 128GB configuration. On paper, it has stronger CPU and GPU performance. The CPU is a 20-core MediaTek design, outnumbering the M4 Max's 16 cores, though I predict it won't beat the M4 Max in real usage. Apple doesn't disclose GPU TFLOPS, but based on memory bandwidth and real-world use, the M4 Max might be weaker than NVIDIA's GB10.

Thus, NVIDIA's DIGITS may beat the M4 Max in GPU performance and interconnect bandwidth, with matching memory capacity and potentially higher memory bandwidth. While DIGITS offers better value, Apple's ecosystem is far superior.

Currently, only Apple's macOS truly supports ARM-based SoCs well. Both Linux and Windows have poor ARM support. Desktop systems rely on ecosystems; there are likely fewer than 5 million users worldwide willing to tinker with low-level system issues. Even though NVIDIA provides good ecosystem support for Linux through the Orin series, handling deployments and bug fixes still requires extensive Linux development experience.

Furthermore, DIGITS won't ship until at least May, and by this summer, Apple's M5 will arrive. While I am excited about this product, I am skeptical about its market share—especially since it's currently just a "future product."

Reliable sources suggest Apple is already redesigning its SoCs and interconnect capabilities. The path is clear for them. Once again, Project DIGITS isn't bringing any revolutionary "black magic."

Conclusion

The greatest value of the Blackwell architecture announced last year lies in dual-die interconnectivity and GPU-to-GPU scaling. Remove those, and the harsh reality of Moore's Law slowing down becomes apparent. More frighteningly, on a single-chip basis, the pure hardware price-to-performance ratio has not improved.

Starting in 2025, the two biggest things to watch from NVIDIA are the actual deployment of Blackwell mega-clusters (like the rumored 30,000 or 100,000 card clusters) and the next-generation Rubin architecture. However, as the discussion shifts toward AI application deployment, the constraints of infrastructure and software ecosystems—the "soft power"—will become increasingly visible.