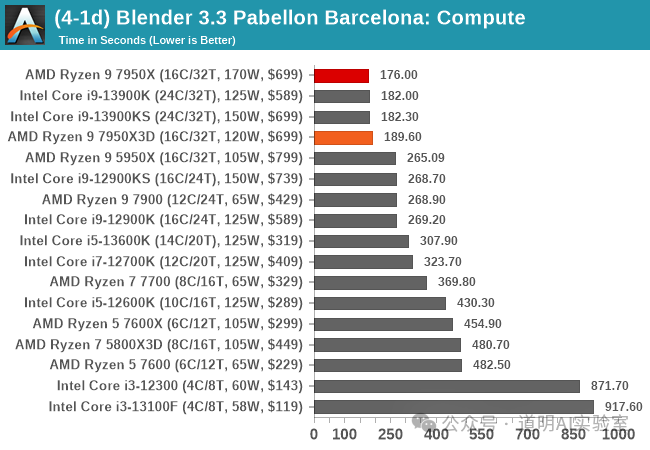

Since the weekend, media reports have surfaced that the AMD 7950X CPU (16 cores, 32 threads, Ryzen 9 series) has gone out of stock due to its outstanding "mining" performance (mining the "Qubic" coin yields approximately $3/day after electricity costs; the 7950X is priced around $500-600).
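To put the figures above in perspective, here is a rough payback estimate. It is only a sketch: it assumes the quoted net profit of about $3/day stays constant and counts the CPU price alone (no motherboard, memory, or power supply). The function name `payback_days` is illustrative, not from the reports.

```python
def payback_days(hardware_cost_usd: float, net_profit_per_day_usd: float) -> float:
    """Days needed for net mining profit to cover the hardware cost.

    Assumes a constant daily profit, which real mining returns rarely have.
    """
    if net_profit_per_day_usd <= 0:
        raise ValueError("net profit per day must be positive")
    return hardware_cost_usd / net_profit_per_day_usd


# Price range and daily profit as quoted in the text above.
for price in (500, 600):
    print(f"${price} CPU: about {payback_days(price, 3.0):.0f} days to break even")
```

Under these assumptions the CPU pays for itself in roughly 167 to 200 days, which helps explain why the chip sold out.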

In fact, it is not only AMD's new flagship 7950X that shows a significant performance gain over its predecessor (the 5950X, released in late 2020); Intel's 14th-generation i9 chips have also substantially improved their graphics rendering performance.

Test results above are sourced from: https://www.anandtech.com/show/18747/the-amd-ryzen-9-7950x3d-review-amd-s-fastest-gaming-processor/5

Only the time-intensive (long-running) test scenarios are shown here for reference.

Commentary:

- There is a strong correlation between "mining," graphics rendering, and AI inference capabilities. Consequently, the aforementioned news naturally impacts AMD and leads smoothly to the concept of the AI PC.

- Strictly speaking, servers, workstations, desktops (PCs), laptops, and other computing devices are similar in kind but clearly distinct from one another. The current definition of an "AI PC," however, is relatively vague. For now, let's take a broad view: the AI PC form factor mainly covers workstations, desktops, and laptops. What sets AI PCs apart from earlier products of the same kind is that they are capable of, or rather better suited to, model inference.

- Key elements of inference capability: Memory capacity and speed; chip support for neural network computations; and power consumption.

- For these reasons, I have long believed that the best AI PC is one powered by Apple's M-series chips. For laptops and desktops alike, this view has not changed and is in fact strengthening: more memory, lower power consumption, more efficient computing units, and the low-level computing ecosystem that Apple provides (not the App Store kind, but neural network software packages optimized specifically for its own chips).

- Since the primary use of AI PCs is still office work, buyers lean toward laptops as the form factor, which places higher demands on weight, heat dissipation, and battery life. This is why Intel launched its Meteor Lake products, the Core Ultra 7/9 series: an SoC that mixes multiple manufacturing processes and adds specialized computing units to keep power consumption under control. AMD, of course, launched comparable products even earlier.
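The role of memory capacity and speed listed above can be made concrete with a common rule of thumb: when generating text, a model's weights have to be read from memory roughly once per token, so memory bandwidth caps decoding speed. The sketch below is a back-of-envelope estimate under that assumption. It ignores the KV cache, activations, and compute limits, and every number in the example is hypothetical rather than a measurement of any real chip.

```python
def model_bytes(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in bytes."""
    return params_billion * 1e9 * bits_per_weight / 8


def fits_in_memory(params_billion: float, bits_per_weight: int, memory_gb: float) -> bool:
    """True if the weights alone fit in the given memory (1 GB = 1e9 bytes)."""
    return model_bytes(params_billion, bits_per_weight) <= memory_gb * 1e9


def decode_tokens_per_sec_upper_bound(
    params_billion: float, bits_per_weight: int, bandwidth_gb_s: float
) -> float:
    """Upper bound on tokens/s if every token requires one full pass over the weights."""
    return bandwidth_gb_s * 1e9 / model_bytes(params_billion, bits_per_weight)


# Hypothetical example: a 7B-parameter model quantized to 4 bits per weight,
# on a machine with 16 GB of memory and 100 GB/s of memory bandwidth.
print(fits_in_memory(7, 4, memory_gb=16))
print(f"{decode_tokens_per_sec_upper_bound(7, 4, bandwidth_gb_s=100):.1f} tokens/s at most")
```

Doubling the bandwidth doubles this ceiling, while capacity decides whether the model can be loaded at all, which is why both figures matter for an inference-oriented machine.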

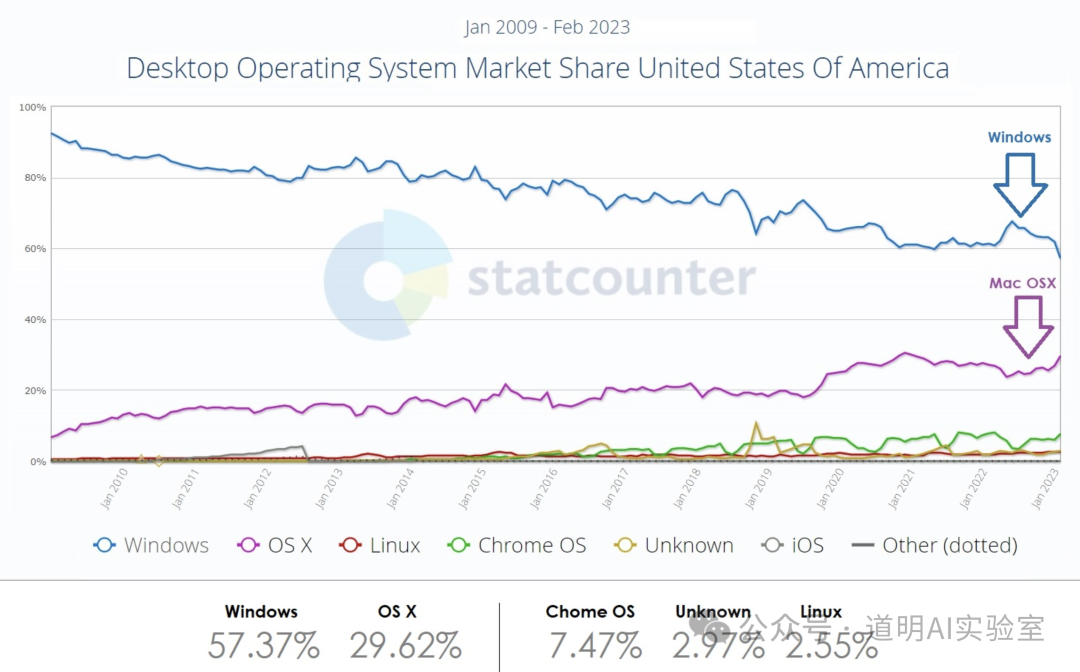

- If evaluated comprehensively, Apple's chips are undisputed "versatile powerhouses." The only drawback might be that more users are accustomed to Windows. However, since the introduction of Apple's proprietary M-chips, macOS market share has returned to a fast upward trajectory, while Windows share has rapidly declined.

Source: StatCounter

- Of course, over the past year Windows market share has recovered noticeably because of AI. The credit belongs to neither Microsoft, Intel, nor AMD; it belongs entirely to NVIDIA. Using an NVIDIA card requires an Intel or AMD CPU, and in the desktop market the OS choice is then essentially limited to Windows.

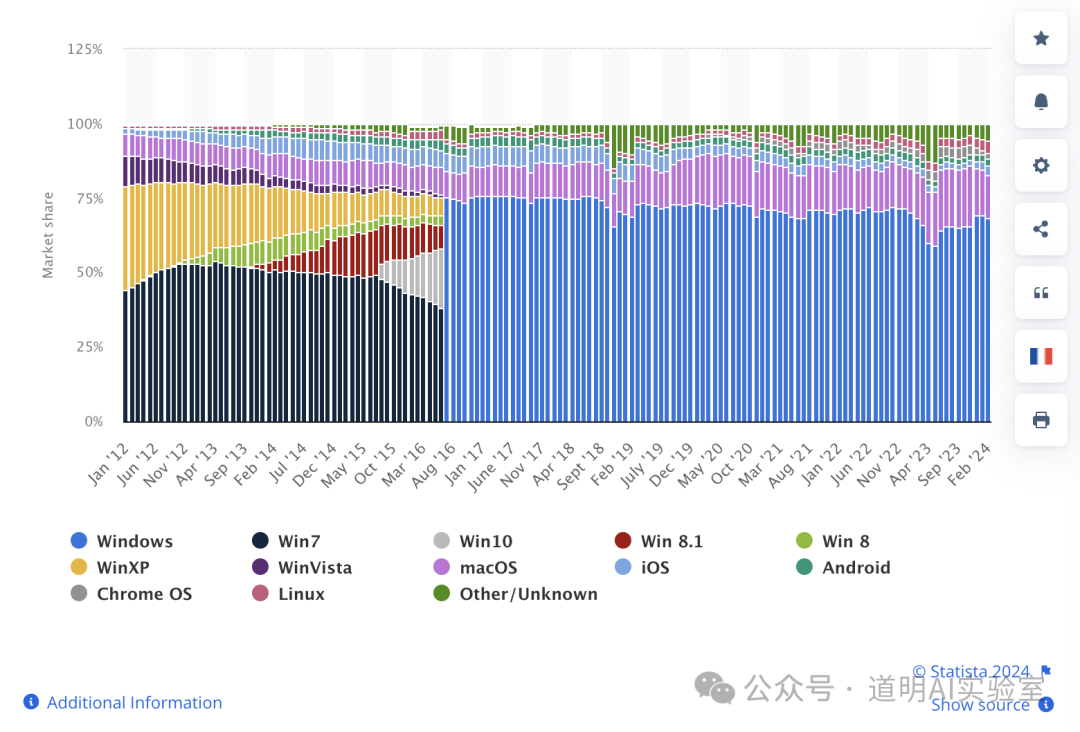

Source: Statista

- This creates a very interesting situation. As mentioned in the previous NVIDIA outlook, the direct competitors in the desktop market are NVIDIA and Apple, rather than Intel or AMD versus Apple. The reason is simple: at the same power consumption, Apple's chips deliver the most capability. But consumers who want to use more powerful NVIDIA graphics cards must choose between Windows and Linux. The desktop inference market is only just opening up, so both NVIDIA and Apple have huge opportunities (Intel and AMD cannot afford to miss out, and Qualcomm sees a new opening, so everyone is making moves). In this application field, the trend of ARM replacing x86 is already established.

- Returning briefly to the opening issue: if the requirement for an AI PC is limited to large-model inference, Apple's chips have shown that ARM-based SoCs are the better choice. However, a new trend is emerging: AI is rapidly entering the era of multimodal and image/video applications, and the link between models and video/game content production is tightening. Inference and graphics rendering will become the two most important demands, and rendering clearly asks more of the chip. It requires not only NVIDIA cards (the 40-series gaming cards or the L40S workstation card, rather than the training-oriented A100 or H100) and broader CUDA ecosystem support, but also CPUs with sufficient multi-core compute and rendering power, plus higher memory bandwidth to support frequent data exchange between the CPU and GPU (in many graphics rendering scenarios, the bottleneck is in fact more often the CPU). Intel and AMD have clearly recognized this and are emphasizing graphics rendering performance in their latest products (Intel's 14th Gen Core, AMD's Zen 4 architecture). The results show up in AMD CPUs being able to mine "Qubic" and in Blender benchmark scores.

- From a broader time horizon, chips will likely move toward a more diverse era. Even the same manufacturer will launch completely different chips for different niche segments, which is more economical for users. To summarize:

- In the inference market, power consumption, weight, heat dissipation, and battery life become the more important metrics. ARM-based SoCs have the better prospects here; Qualcomm is actively entering the market, and NVIDIA is also gearing up.

- In the professional film and game production market, the demand for GPUs drives the demand for CPUs. The CUDA ecosystem remains the most difficult barrier to break in this field. Intel and AMD seem poised to benefit from the growth of NVIDIA's consumer-grade inference market.

- At the same time, ARM chips, represented by the Ampere series, are rising rapidly in high-end server and workstation CPUs. Although they currently target mainly the virtualization market, thanks to their higher core counts, they leave a great deal of room for future possibilities, a glimpse of which can be seen in NVIDIA's Jetson series chips.

The rapid evolution of, and coopetition among, chips, operating systems, and usage scenarios across multiple dimensions is far more interesting than the AI training market, and offers much larger opportunities. The excitement may have only just begun.